SEO for single page apps: The nuts and bolts

Historically, web developers have used HTML for content, CSS for styling, and JavaScript (JS) for interactive elements. JS enables features like pop-up dialog boxes and expandable content on web pages. Nowadays, over 98% of all sites use JavaScript due to its ability to modify web content based on user actions.

A relatively new trend of incorporating JS into websites is the adoption of single-page applications (SPAs). Unlike traditional websites that load all their resources (HTML, CSS, JS) by requesting each from the server each time it’s needed, SPAs load most resources once and afterwards request only the data they need. The browser handles most of the processing.

This leads to faster websites, which is good because studies show that online shoppers expect websites to load within three seconds. The longer the load takes, the fewer customers will stay on the site. Adopting the SPA approach can be a good solution to this problem, but it can also be a disaster for SEO if done incorrectly.

In this post, we’ll discuss how SPAs are made, examine the challenges they present for optimization, and provide guidance on how to do single page application SEO properly. Get SPA SEO right and search engines will be able to understand your SPAs and rank them well.

- Benefits of a single-page application: Faster browsing and seamless navigation, minimal layout shifts, and offline browsing capability due to cached resources.

- SEO challenges: Search engines may struggle to render JavaScript-heavy content or dynamically added elements. SPAs often use a single URL, making it harder for search engines to recognize individual views. Analytics tools may not capture all pageviews due to dynamic routing.

- Optimization solutions: Use server-side rendering to ensure crawlability. Use the History API instead of hash-based routing to get distinct URLs that reflect actual content. Dynamically update meta tags for unique views and use JSON-LD for rich snippets.

- SEO best practices: Regularly audit your SPA websites with tools like SE Ranking to identify crawlability and indexing issues. Track user behavior on SPA websites with GA4.

- Essential SEO practices: Implement HTTPS to protect user data and maintain search engine prioritization. Develop keyword-rich, user-intent-focused content with optimized meta tags and organized visuals. Strengthen your backlink profile by focusing on quality over quantity to improve trust and rankings. Continuously analyze competitors’ strategies to identify opportunities and trends.

SPA in a nutshell

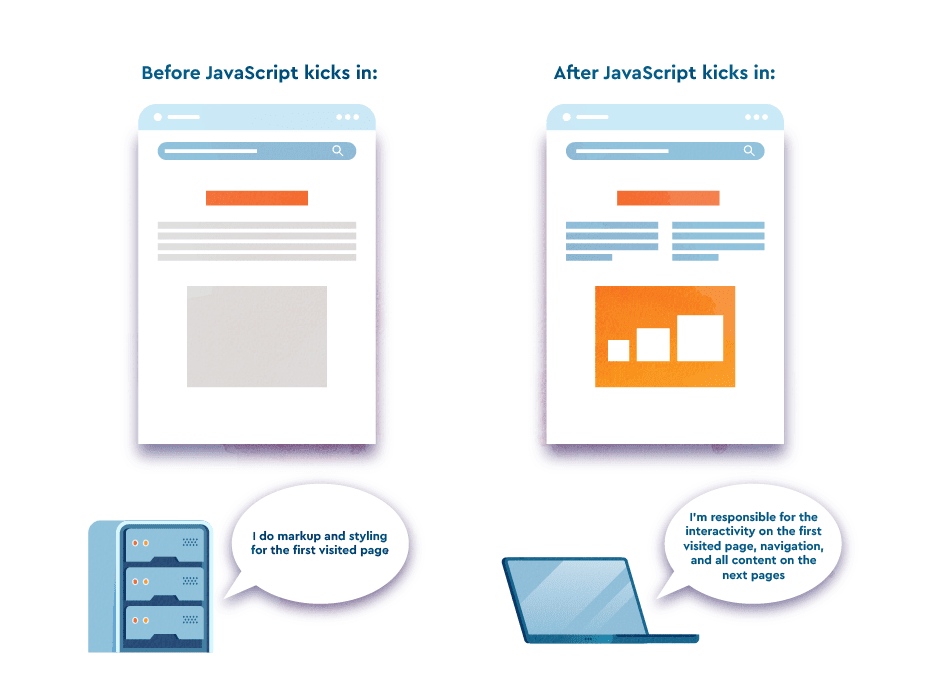

A single page application, or SPA, is a specific JavaScript-based technology for website development that doesn’t require further page loads after the first page view load. React, Angular, and Vue are the most popular JavaScript frameworks used for building SPAs. They mostly differ in supported libraries and APIs, but each supports fast client-side rendering.

SPAs greatly enhance site speed by cutting down on full-page requests between the server and browser. But search engines are not so thrilled about this JavaScript trick. The issue is that search engines don’t interact with sites like users do, so content that only appears after JavaScript runs may not be accessible to them. If a single-page application is not managed correctly, search engines might fail to recognize dynamically loaded content and see only blank pages.

End users benefit from SPA technology because they can easily navigate through web pages without enduring extra page loads and layout shifts. Given that single page applications cache resources locally (after they are loaded at the initial request), users can continue browsing them even with an unstable connection. The technology’s benefits for users keep it alive despite how demanding it can be SEO-wise.

Examples of SPAs

Many high-profile websites are built with single-page application architecture. Examples of popular ones include:

- Google Maps

Google Maps allows users to view maps and find directions. When users visit the site, a single page is loaded, and further interactions are handled dynamically through JavaScript. The user can pan and zoom the map to prompt the application to update the map view without reloading the page.

- Airbnb

Airbnb is a popular travel booking site that uses a single page design that dynamically updates as users search for accommodations. Users can filter search results and explore various property details without navigating to new pages.

- Facebook

When users log in to Facebook, they do not need to refresh the page. Instead, they are presented with a single page that allows them to interact with posts, photos, and comments.

- Trello

Trello is a web-based project management tool powered by SPA. Its single-page design allows you to create, manage, and collaborate on projects, cards, and lists without refreshing.

- Spotify

Spotify is a popular music streaming service. It lets you browse, search, and listen to music on a single page. No need for reloading or switching between pages.

Is a single-page application good for SEO?

Yes, if you implement it wisely.

The SPA approach is popular among web developers for its high-speed operation and rapid development. Developers can apply this technology to create different platform versions based on ready-made code. This speeds up the desktop and mobile application development process, making it more efficient.

While SPAs can offer numerous benefits for users and developers, they also present several challenges for SEO. As search engines traditionally rely on HTML content to crawl and index websites, they may have trouble accessing and indexing content on SPAs that rely heavily on JavaScript. This can result in crawlability and indexability issues.

The SPA approach tends to work well for both users and SEO, but you must take the right steps to ensure your pages are easy to crawl and index. Proper single page app optimization can ensure your SPA website is as SEO-friendly as any traditional website.

We’ll go over how to optimize SPAs in the sections below.

Why it’s hard to optimize SPAs

Before JS became dominant in web development, search engines only crawled and indexed text-based content from HTML. As JS increased in popularity, Google recognized the need to execute JS resources and understand pages that rely on them. Google’s search crawlers have made major strides over the years toward accessing content on single-page applications.

Other search engines are also making efforts to crawl JavaScript websites. For example, Bing makes the same suggestions as Google, promoting server-side pre-rendering—a technology that allows bingbot (and other crawlers) to access static HTML as the most complete site version. Since DuckDuckGo and Yahoo! largely source from Bing, they can also crawl SPAs.

While there’s an ongoing debate about JavaScript’s impact on site visibility and rankings, it’s inaccurate to say that JavaScript or its frameworks threaten website optimization. However, improper use can harm your ranking potential, so always follow the best practices for SPA outlined in the section below.

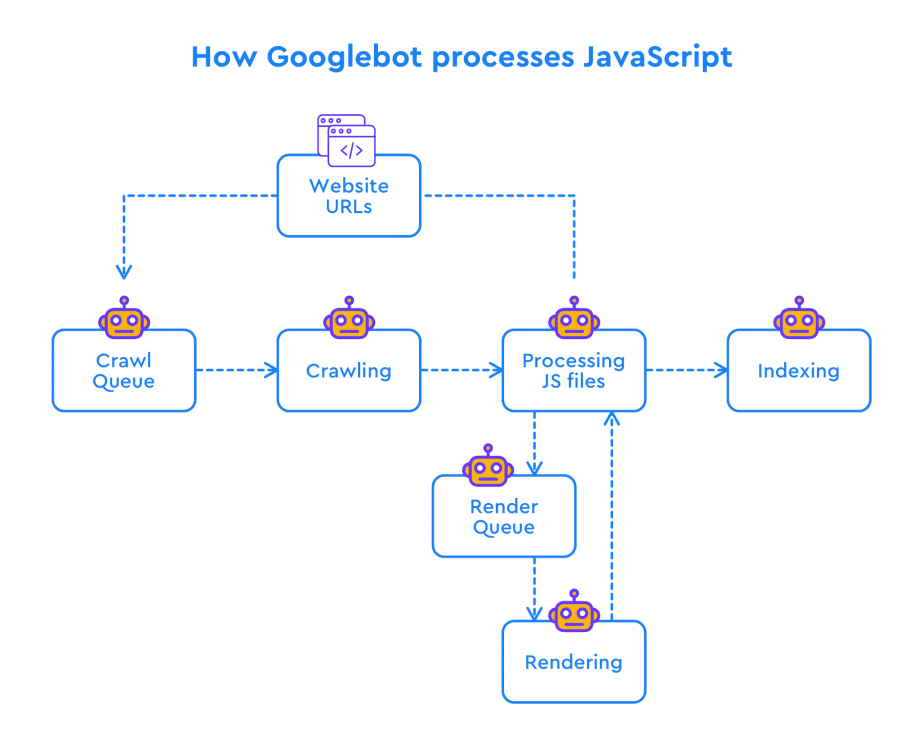

Crawling issues

Googlebot must parse, compile, and execute the JavaScript to see what’s hidden. Before rendering, the page’s content normally looks like a single link to the JS file. Only after completing this stage can Googlebot see all page content in the HTML tags. For a long time, there was a delay in how Google processed JavaScript on pages, and JS content loaded on the client side wasn’t always seen as complete or properly indexed.

But these days, Google search handles JavaScript almost as well as normal web pages. This is supported by a study by Vercel and MERJ, which analyzed over 100,000 Googlebot fetches across various sites.

Google also continues to improve Googlebot’s ability to crawl and index websites by incorporating the latest web technologies. Google has even introduced the concept of an evergreen Googlebot, which operates on the latest Chromium rendering engine (currently version 114). Googlebot’s evergreen status gives it access to numerous new features available to modern browsers. This improves Googlebot’s ability to render and understand the content and structure of modern websites, including single-page apps. Website content can now be crawled and indexed more efficiently.

But, although Google can effectively render JS-heavy pages, doing so is more resource-intensive than static HTML. Downloading, parsing, and executing JavaScript in higher volumes requires substantial computing capacity.

URL and routing

While SPAs provide an optimized user experience, their confusing URL structure and routing make it difficult to create a good SEO strategy around them. Unlike traditional websites, which have distinct URLs for each page, SPAs typically have only one URL for the entire application and rely on JavaScript to dynamically update page content.

Developers must carefully manage the URLs, making them intuitive and descriptive, and ensuring they accurately reflect the page’s visual content.

To address these challenges, you can use server-side rendering and pre-rendering. This creates static versions of the SPA. Another option is to use the History API and its pushState() method. This method allows developers to fetch resources asynchronously and update URLs without using fragment identifiers, which results in URLs that accurately reflect the content displayed on the page.

Tracking issues

Google Analytics tracking poses another issue for SEO for single-page applications. Traditional websites accurately count each view by running the analytics code every time a user loads or reloads a page. But users who navigate through different pages on a single-page application only trigger the code to run once. Individual pageviews are not triggered.

The nature of dynamic content loading prevents GA from getting a server response for each pageview. This is why standard reports in GA4 do not offer the necessary analytics in this scenario. Still, you can overcome this limitation by leveraging GA4’s Enhanced measurement and configuring Google Tag Manager accordingly.

Pagination can also pose challenges for single page application SEO, as search engines may have difficulty crawling and indexing dynamically loaded paginated content. Luckily, there are some methods that you can use to track user activity on single-page application websites.

These methods require additional effort. We will cover them later.

How to do SPA SEO

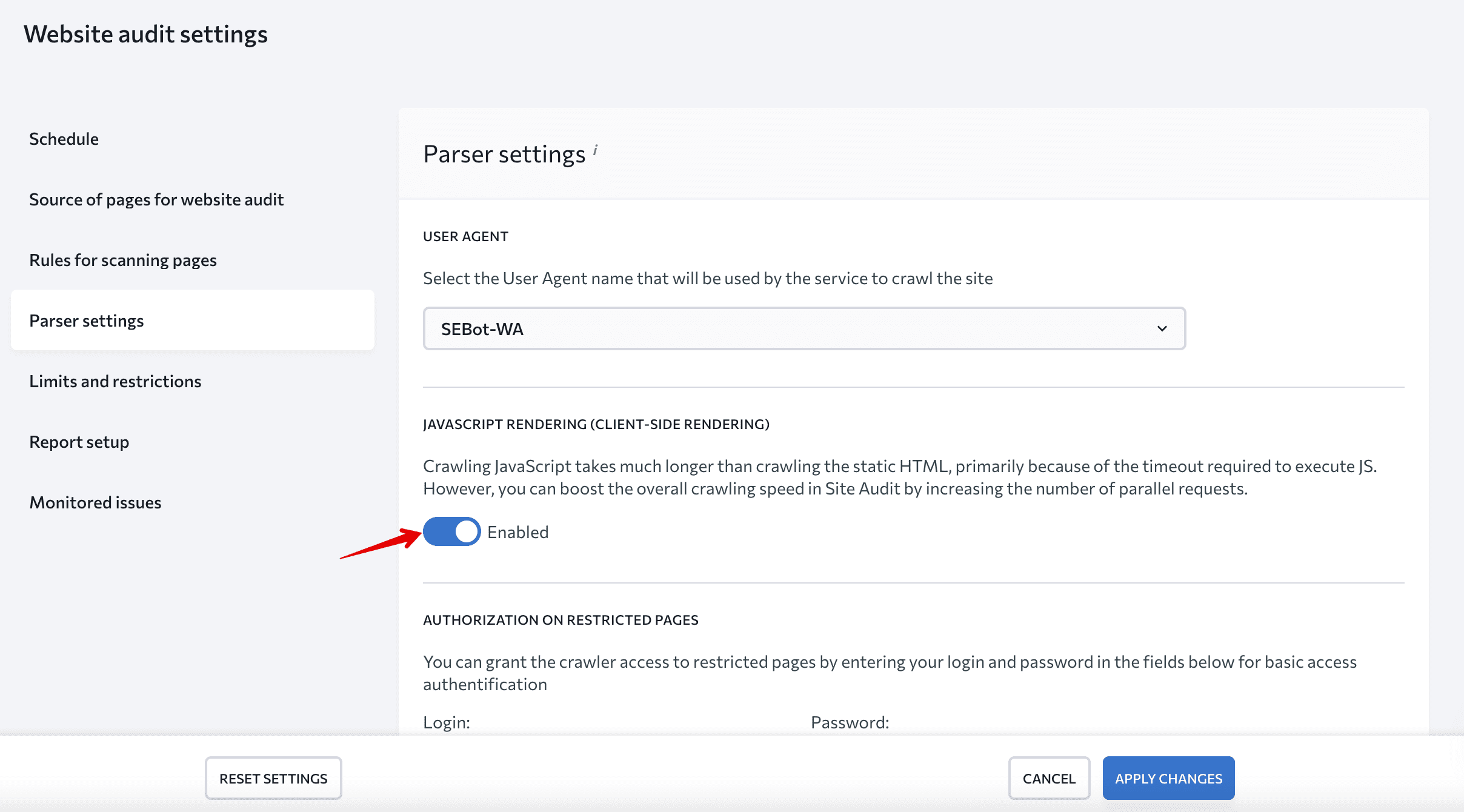

Before implementing our tips for single page app SEO, you need a reliable tool to conduct full website audits and identify all issues.

We recommend using SE Ranking’s Website Audit. This tool uses 110+ parameters to assess your website, including user experience, indexing, security issues, localization, linking, and more. Its 2.0 version has a JavaScript rendering feature for checking client-side-rendered websites like any other. Enable it in the settings to use it.

Now, let’s review best practices for SEO for single page apps.

Server-side rendering

Server-side rendering (SSR) involves rendering a website on the server and sending the resulting HTML to the browser. This technique allows search bots to crawl all website content, including content generated by JavaScript. While this is a lifesaver for crawling and indexing, it might slow down the load. One noteworthy aspect of SSR is that it diverges from the natural approach taken by SPAs. SPAs rely mostly on client-side rendering, which contributes to their fast and interactive nature and seamless user experience. Client-side rendering also simplifies the deployment process.
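To make the idea concrete, here is a minimal sketch of server-side rendering for Node.js. It is an illustration under assumptions, not a production setup: the `views` table, the `/app.js` bundle path, and the function names `renderPage` and `handleRequest` are all hypothetical.

```javascript
// Hypothetical view table: path -> content. In a real app this would come
// from the same components the client-side bundle uses.
const views = new Map([
  ["/", { title: "Home", body: "<h1>Home</h1>" }],
  ["/about", { title: "About", body: "<h1>About us</h1>" }],
]);

// Render a complete HTML document for a path, so crawlers receive the
// content without having to execute any JavaScript.
function renderPage(path) {
  const view = views.get(path);
  if (!view) {
    return {
      status: 404,
      html: "<!doctype html><title>Not found</title><h1>Page not found</h1>",
    };
  }
  const html =
    "<!doctype html><html><head><title>" + view.title + "</title></head>" +
    '<body><div id="app">' + view.body + "</div>" +
    '<script src="/app.js"></script></body></html>';
  return { status: 200, html };
}

// Request handler with the (req, res) shape that Node's built-in
// http.createServer() expects.
function handleRequest(req, res) {
  const { pathname } = new URL(req.url, "http://localhost");
  const { status, html } = renderPage(pathname);
  res.writeHead(status, { "Content-Type": "text/html; charset=utf-8" });
  res.end(html);
}
```

To try it, pass `handleRequest` to `http.createServer()` from Node’s built-in `http` module and call `.listen()` on the result. Because the handler returns a real 404 status for unknown paths, this approach also avoids the always-200 problem of purely client-side routing.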

Isomorphic JS

One possible rendering solution for a single-page application is isomorphic, or “universal” JavaScript. Isomorphic JS plays a major role in generating pages on the server side, alleviating the need for a search crawler to execute and render JS files.

The “magic” of isomorphic JavaScript applications lies in their ability to run on both the server and client side. It works by letting users interact with the website as if its content was rendered by the browser when in fact, the user was actually using the HTML file generated on the server side. There are frameworks that facilitate isomorphic app development for each popular SPA framework. Let’s use Next.js and Gatsby for React as examples of this. The former generates HTML for each request, while the latter generates a static website and stores HTML in the cloud. Similarly, Nuxt.js for Vue renders JS into HTML on the server and sends the data to the browser.

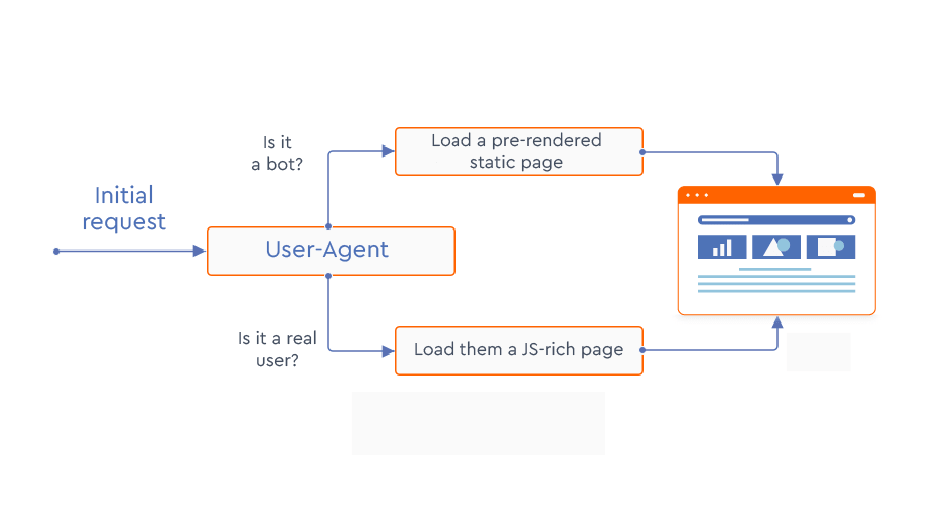

Pre-rendering

Another go-to solution for single page applications is pre-rendering. This is when all HTML elements are loaded and stored in the server cache and then served to search crawlers. Several services, like Prerender and BromBone, intercept requests made to a website and show different versions of pages to search bots and real users. The cached HTML is shown to the search bots, while the “normal” JS-rich content is shown to real users.
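The request-interception step can be sketched roughly as follows. This is only an illustration of the general idea, not how any particular service works internally: the user-agent pattern, the `snapshotCache` map, and the function names are hypothetical.

```javascript
// Hypothetical list of crawler user-agent substrings; real services keep
// a longer, regularly updated list.
const BOT_PATTERN = /googlebot|bingbot|duckduckbot|yandex|baiduspider/i;

function isSearchBot(userAgent) {
  return BOT_PATTERN.test(userAgent || "");
}

// Pick the response body: cached static HTML for crawlers when a snapshot
// exists, otherwise the regular JS-driven app shell.
function chooseResponse(userAgent, path, snapshotCache, appShell) {
  if (isSearchBot(userAgent) && snapshotCache.has(path)) {
    return { source: "prerendered", body: snapshotCache.get(path) };
  }
  return { source: "app-shell", body: appShell };
}
```

The cached HTML should contain the same content users see, so that bots and visitors are served equivalent pages.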

There are other, more time-consuming methods for serving static HTML to crawlers. One example is using Headless Chrome and the Puppeteer library, which convert routes to pages into the hierarchical trees of HTML files. However, you must remove the bootstrap code and edit your server configuration file to locate the static HTML for search bots.

Progressive enhancement with feature detection

This technique involves progressively enhancing the experience with different code resources. It uses a simple HTML page as the foundation for crawlers and users. On top of this page, additional features such as CSS and JS are added and enabled (or disabled) according to browser support.

To implement feature detection, write separate chunks of code to check if each required feature API is compatible with each browser. Fortunately, libraries like Modernizr can help you save time and simplify this process.
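If you prefer to write the checks by hand, a minimal sketch might look like this. The feature names and the `planEnhancements` helper are hypothetical; the checks themselves rely only on standard browser globals.

```javascript
// Check which optional features the current environment supports.
// `env` defaults to the global object (window in browsers).
function detectFeatures(env = globalThis) {
  const imgProto =
    typeof env.HTMLImageElement === "function" ? env.HTMLImageElement.prototype : null;
  return {
    historyApi: Boolean(env.history) && typeof env.history.pushState === "function",
    intersectionObserver: typeof env.IntersectionObserver === "function",
    nativeLazyLoading: Boolean(imgProto) && "loading" in imgProto,
  };
}

// Decide which enhancements to enable on top of the plain HTML baseline.
function planEnhancements(features) {
  const plan = ["baseline-html"];
  if (features.historyApi) plan.push("client-side-routing");
  if (features.nativeLazyLoading) plan.push("native-lazy-images");
  else if (features.intersectionObserver) plan.push("observer-lazy-images");
  return plan;
}
```

The baseline HTML is always part of the plan, so crawlers and older browsers still get the full content even when no enhancement is enabled.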

Views as URLs to make them crawlable

When users scroll through an SPA, they pass separate website sections. Technically, an SPA contains only one page (a single index.html file) but visitors feel like they’re browsing multiple pages. These sections are called “views”, meaning users perceive the HTML fragments in SPAs as screens or pages.

When users move through different parts of a single-page application website, the URL changes only in its hash part (for example, http://website.com/#/about, http://website.com/#/contact). The JS file instructs browsers to load certain content based on fragment identifiers (hash changes).

To help search engines perceive different website sections as distinct pages, implement distinct URLs using the History API. This is a standardized method in HTML5 for manipulating the browser history. Google Codelabs suggests using this API instead of hash-based routing so that search engines can recognize different views as separate pages. The History API allows you to change navigation links and use paths instead of hashes.

Suppose you have a single-page application (SPA) with sections like Home, About, and Contact. When a user clicks on a link, this API changes the URL to yourwebsite.com/about, yourwebsite.com/home, yourwebsite.com/contact without reloading the page.
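A minimal sketch of this kind of routing is shown below. The route table and the `resolveView`, `navigate`, and `render` names are hypothetical; `history.pushState()` and the `popstate` event are the standard History API pieces.

```javascript
// Hypothetical route table mapping clean paths to view names.
const routes = new Map([
  ["/", "home"],
  ["/about", "about"],
  ["/contact", "contact"],
]);

// Resolve a path to a view; unknown paths fall back to a not-found view.
function resolveView(path) {
  return routes.get(path) || "not-found";
}

// Navigate without a full page reload: update the address bar with
// history.pushState(), then render the matching view.
// `historyApi` and `render` are injected so the sketch also runs outside a browser.
function navigate(path, historyApi, render) {
  const view = resolveView(path);
  historyApi.pushState({ view }, "", path);
  render(view);
  return view;
}

// Browser wiring (skipped when no window is available).
if (typeof window !== "undefined") {
  // Placeholder renderer; a real app would swap the view's content here.
  const render = (view) => { document.title = view; };
  document.addEventListener("click", (event) => {
    const link = event.target.closest("a[href^='/']");
    if (!link) return;
    event.preventDefault();
    navigate(link.getAttribute("href"), window.history, render);
  });
  // Handle the back and forward buttons.
  window.addEventListener("popstate", () => render(resolveView(window.location.pathname)));
}
```

Note that the server must also respond to these paths directly (for example, /about), since crawlers and users can request them without going through the home page first.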

Google analyst Martin Splitt gives the same advice—to treat views as URLs by using the History API. He also emphasizes that to make links on your website crawlable by search engines, you should use the <a> tag with an href attribute instead of relying on the onclick action. This is because JavaScript onclick can’t be crawled and is invisible to Google.

The primary rule is to make links crawlable. Make sure your links follow Google standards for single page application SEO and that they appear as follows:

<a href="https://yoursite.com">

<a href="/services/category/SEO">

Google may try to parse links formatted differently, but there’s no guarantee it will follow through or succeed. Avoid links that appear in the following way:

<a routerLink="services/category">

<span href="https://yoursite.com">

<a onclick="goto('https://yoursite.com')">

Begin by adding links using the <a> HTML element, understood by Google as a classic link format. Next, the URL included should be a valid and functioning web address. Ensure it follows the rules of a Uniform Resource Identifier (URI) standard. Otherwise, the website and its content cannot be properly indexed or understood by crawlers.
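As a rough self-check, the rules above can be turned into a small helper. This is a simplified sketch, not Google’s actual logic: the `isCrawlableLink` name and the `{ tag, href }` input shape are hypothetical.

```javascript
// Return true when a link description looks crawlable: it must be an <a>
// element whose href resolves to an http(s) URL.
// `link` is a plain object here ({ tag, href }) so the check runs anywhere.
function isCrawlableLink(link, baseUrl = "https://yoursite.com") {
  if (link.tag !== "a" || typeof link.href !== "string" || link.href.trim() === "") {
    return false;
  }
  try {
    const url = new URL(link.href, baseUrl);
    return url.protocol === "http:" || url.protocol === "https:";
  } catch {
    // The href could not be parsed as a URL at all.
    return false;
  }
}
```

In a browser, you could build the input objects from `document.querySelectorAll("a, span")` by reading each element’s tag name and `href` attribute, then list the elements for which the check fails.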

Views for error pages

With single-page websites, the server has nothing to do with error handling and will always return the 200 status code, which indicates (incorrectly in this case) that everything is okay. But users may sometimes use the wrong URL to access an SPA, so there should be some way to handle error responses. Google recommends creating separate views for each error code (404, 500, etc.) and tweaking the JS file so that it directs browsers to the respective view.

Titles & descriptions for views

Titles and meta descriptions are essential elements for on-page SEO. A well-crafted meta title and description can jointly improve the website’s visibility in SERPs and increase its click-through rate.

In the case of SPA SEO, managing these meta tags can be challenging because there’s only one HTML file and URL for the entire website. At the same time, duplicate titles and descriptions are among the most common SEO issues.

Creating unique views for each section of your single-page website is key. It’s also important to assign titles and descriptions to reflect the content displayed on each view.

Consider using tools that can help you track and fix these issues, among others. SE Ranking’s On-Page SEO Checker Tool is ideal for this. It lets you optimize your page content for your target keywords, your page title and description, and other elements.

You must take a strategic approach to on-page optimization to ensure your SPA is optimized for search engines and users. Here’s your complete on-page SEO guide with top strategies for on-site optimization.

Developers can use JavaScript to set or change the meta description and <title> element in an SPA.
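Here is a minimal sketch of how that could look. The `viewMeta` table, its placeholder titles and descriptions, and the function names are hypothetical; the DOM calls (`querySelector`, `createElement`, `setAttribute`) are standard.

```javascript
// Hypothetical per-view metadata; titles and descriptions are placeholders.
const viewMeta = new Map([
  ["/", { title: "Home | Your Site", description: "Welcome to Your Site." }],
  ["/about", { title: "About | Your Site", description: "Learn who we are." }],
]);

const fallbackMeta = { title: "Your Site", description: "" };

function metaForView(path) {
  return viewMeta.get(path) || fallbackMeta;
}

// Apply the metadata to a document: set <title> and create or update
// <meta name="description">.
function applyViewMeta(doc, path) {
  const meta = metaForView(path);
  doc.title = meta.title;
  let tag = doc.querySelector('meta[name="description"]');
  if (!tag) {
    tag = doc.createElement("meta");
    tag.setAttribute("name", "description");
    doc.head.appendChild(tag);
  }
  tag.setAttribute("content", meta.description);
  return meta;
}
```

In a browser, you would call `applyViewMeta(document, window.location.pathname)` every time the view changes, so each URL ends up with its own title and description.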

Using robots meta tags

Robots meta tags instruct search engines on how to crawl and index a website’s pages. When implemented correctly, they ensure search engines can crawl and index the most important parts of a website, while avoiding duplicate content or incorrect page indexing.

For example, using a “nofollow” directive can prevent search engines from following links within a certain view, while a “noindex” directive in the robots meta tag can exclude certain views or sections of the SPA from being indexed.

<meta name="robots" content="noindex, nofollow">

You can also use JavaScript to add a robots meta tag, but if a page has a noindex tag in its robots meta tag, Google won’t render or execute JavaScript on that page. In this case, your attempts to change or remove the noindex tag using JavaScript won’t be effective because Google will never even see that code.

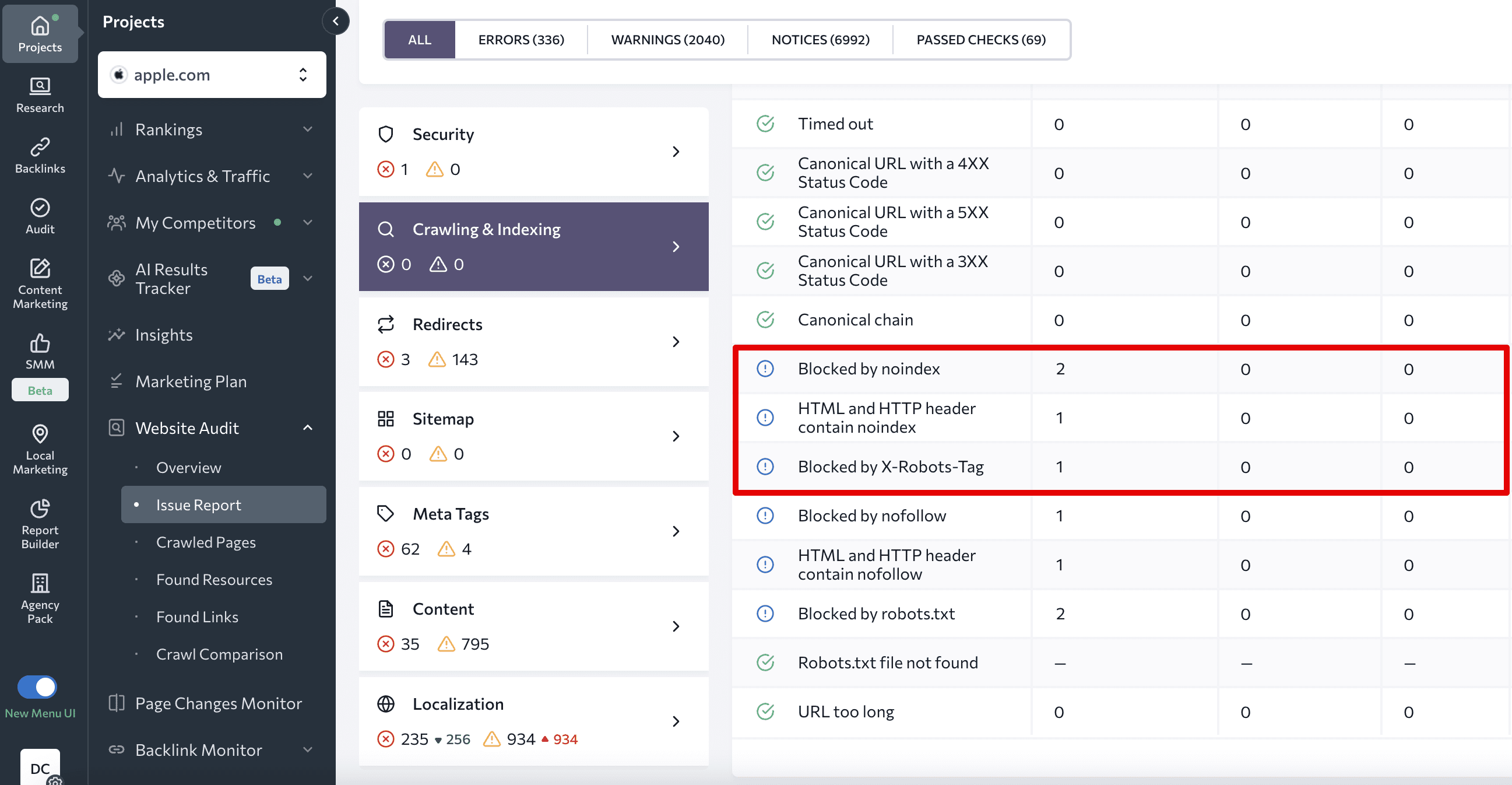

Run an SPA audit to check for robots meta tags issues on your SPA.

Avoid soft 404 errors

A soft 404 error occurs when a website should return a 404 (Not Found) error but instead returns a status code of 200 (OK).

Soft 404 errors can be problematic for SPA websites because of how they are built and the technology they use. Since SPAs rely heavily on JavaScript to dynamically load content, the server may not always accurately identify whether a requested page exists. Client-side routing, typically used in client-side rendered SPAs, makes using meaningful HTTP status codes tricky.

You can avoid soft 404 errors by applying one of the following techniques:

- Use a JavaScript redirect to a URL that triggers a 404 HTTP status code from the server.

- Add a noindex tag to error pages through JavaScript.
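Both techniques can be sketched in a few lines. The `/not-found` path, the `item.exists` flag, and the function names are assumptions for illustration; the server must be configured separately to answer `/not-found` with a real 404 status.

```javascript
// Option 1: redirect to a URL for which the server answers with a real 404.
// "/not-found" is a hypothetical path.
function redirectToNotFound(locationLike) {
  locationLike.href = "/not-found";
}

// Option 2: keep the URL but tell crawlers not to index the error view.
function markNoindex(doc) {
  const tag = doc.createElement("meta");
  tag.name = "robots";
  tag.content = "noindex";
  doc.head.appendChild(tag);
  return tag;
}

// Call this once the client knows whether the requested item exists.
// `env` carries `location` and `document` (window in a browser).
function handleMissingContent(item, strategy, env) {
  if (item && item.exists) return "ok";
  if (strategy === "redirect") {
    redirectToNotFound(env.location);
    return "redirected";
  }
  markNoindex(env.document);
  return "noindex";
}
```

In a browser, this would typically run after the API response for the current view arrives, for example `handleMissingContent(data, "redirect", window)`.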

Lazily loaded content

Lazy loading is when you only load content when needed (e.g. loading images or videos as a user scrolls down the page). This technique can improve page speed and experience, especially for SPAs where large amounts of content can be loaded at once. Unfortunately, you can inadvertently hide content from Google if you apply lazy loading incorrectly.

Take precautions to ensure Google indexes and sees all the content on your page. Here is how:

- Apply native lazy-loading for images and iframes using the “loading” attribute.

- Use the IntersectionObserver API, which lets developers detect when an element enters or exits the viewport, together with a polyfill to ensure browser compatibility.

- Use a JavaScript library that supports loading content only when it enters the viewport.

Always double-check that the approach you choose functions properly. Use a Puppeteer script to run local tests, and use the URL Inspection Tool in Google Search Console to see if all images were loaded.
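For the IntersectionObserver option, a minimal sketch might look like the following. It assumes images keep their real URL in a `data-src` attribute, and the `lazyLoadImages` name and the 200px margin are arbitrary choices for illustration.

```javascript
// Swap in the real image URL (stored in data-src) once the image is near
// the viewport. `ObserverCtor` defaults to the browser's IntersectionObserver.
function lazyLoadImages(images, ObserverCtor = globalThis.IntersectionObserver) {
  if (typeof ObserverCtor !== "function") {
    // No observer support: load everything immediately so no content stays hidden.
    images.forEach((img) => { img.src = img.dataset.src; });
    return null;
  }
  const observer = new ObserverCtor((entries) => {
    for (const entry of entries) {
      if (!entry.isIntersecting) continue;
      entry.target.src = entry.target.dataset.src;
      // Each image only needs to be loaded once.
      observer.unobserve(entry.target);
    }
  }, { rootMargin: "200px" });
  images.forEach((img) => observer.observe(img));
  return observer;
}
```

In a browser, you could call it as `lazyLoadImages(document.querySelectorAll("img[data-src]"))`. The loading is driven by viewport visibility rather than by scroll or click events, and the fallback branch loads everything when the API is unavailable.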

Social shares and structured data

Websites often overlook social sharing optimization. No matter how insignificant it may look, implementing X Cards (formerly Twitter Cards) and Facebook’s Open Graph helps with rich sharing across popular social media channels, which is good for your website’s search visibility. If you don’t use these protocols, sharing your link will trigger the preview display of a random, and sometimes irrelevant, visual object.

Using structured data is also extremely useful when making different types of website content easier for crawlers to understand. Schema.org provides options for labeling data types like videos, recipes, products, and so on.

You can also use JavaScript to generate the required structured data for your SPA in the form of JSON-LD and inject it into the page. JSON-LD is a lightweight data format that is easy to generate and parse.
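As an illustration, the sketch below builds a schema.org Product description and injects it into the page. The product fields and function names are placeholders; pick the schema.org type and properties that match your own content.

```javascript
// Build a schema.org Product description as a JSON-LD string.
// The product fields are placeholders for illustration.
function buildProductJsonLd(product) {
  return JSON.stringify({
    "@context": "https://schema.org",
    "@type": "Product",
    name: product.name,
    description: product.description,
    offers: {
      "@type": "Offer",
      price: product.price,
      priceCurrency: product.currency,
    },
  });
}

// Inject the JSON-LD into the page head as <script type="application/ld+json">.
function injectJsonLd(doc, jsonLd) {
  const script = doc.createElement("script");
  script.type = "application/ld+json";
  script.textContent = jsonLd;
  doc.head.appendChild(script);
  return script;
}
```

In a browser, you would call `injectJsonLd(document, buildProductJsonLd(product))` when the product view is rendered, and remove or replace the script element when the user moves to another view.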

You can conduct a Rich Results Test on Google to discover any currently assigned data types and to enable rich search results for your web pages.

Testing an SPA for SEO

There are several ways to test your SPA website’s SEO. You can use tools like Google Search Console or Mobile-Friendly Tests. You can also inspect your content in search results. We’ve outlined how to use each of them below.

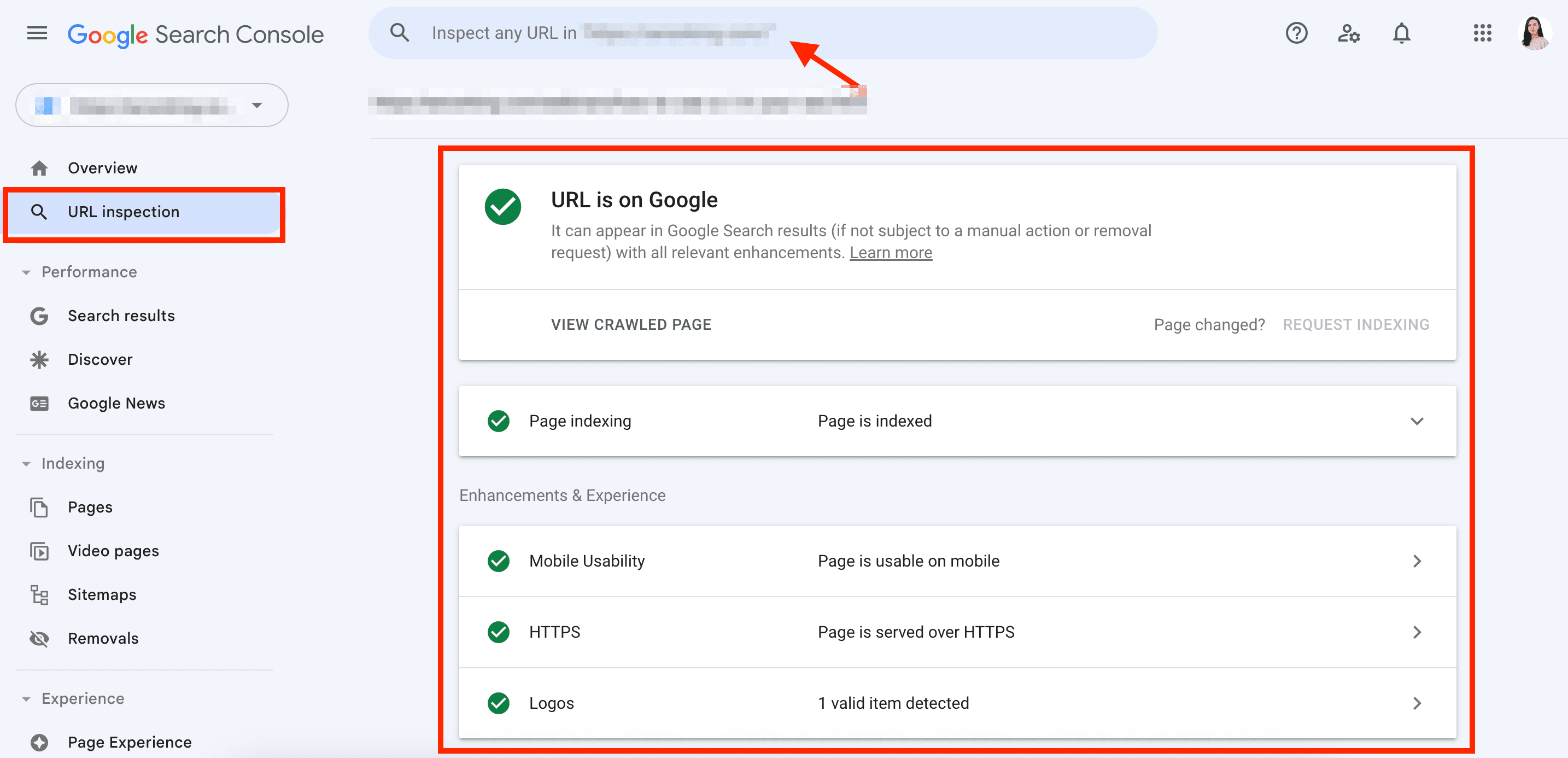

URL inspection in Google Search Console

You can access the essential crawling and indexing information in the URL Inspection section of Google Search Console. It doesn’t give a full preview of how Google sees your page, but it does provide you with basic information, including:

- Whether the search engine can crawl and index your website

- The rendered HTML

- Page resources that can’t be loaded and processed by search engines

You can open the reports to find details related to page indexing, mobile usability, HTTPS, and logos.

Mobile-friendly test

In 2019, Google switched to mobile-first indexing, meaning that the search engine now uses the mobile version of a page for ranking. If content is not accessible on mobile devices, it risks being overlooked in search results. Your site should always look good, be easy to use, and function well on mobile devices like smartphones, tablets, or e-readers.

You can use the Mobile-Friendly Test tool to evaluate your site’s mobile-friendliness. It assesses key technical (such as page speed) and usability (viewport settings, text size, touch elements, and on-screen content) factors.

Another excellent tool for testing your SPA is Headless Chrome. It’s also useful for observing how JS will be executed. Unlike traditional browsers, a headless browser doesn’t have a full UI but provides the same environment that real users would experience.

Finally, use tools like BrowserStack to test your SPA on different browsers.

Read our guide on mobile SEO to get pro tips for making mobile-friendly websites.

Check the cached versions of your pages

Since Google retired cache links and disabled cache functionality, users must find other methods for checking archived pages.

You could use Google Cache Checker, our free and simple tool for checking the cached version of your pages. Enter a URL, and the tool will take you to the Web Archive and display the latest saved copy.

Check the content in the SERP

There are a few ways to check how your SPA appears in SERPs:

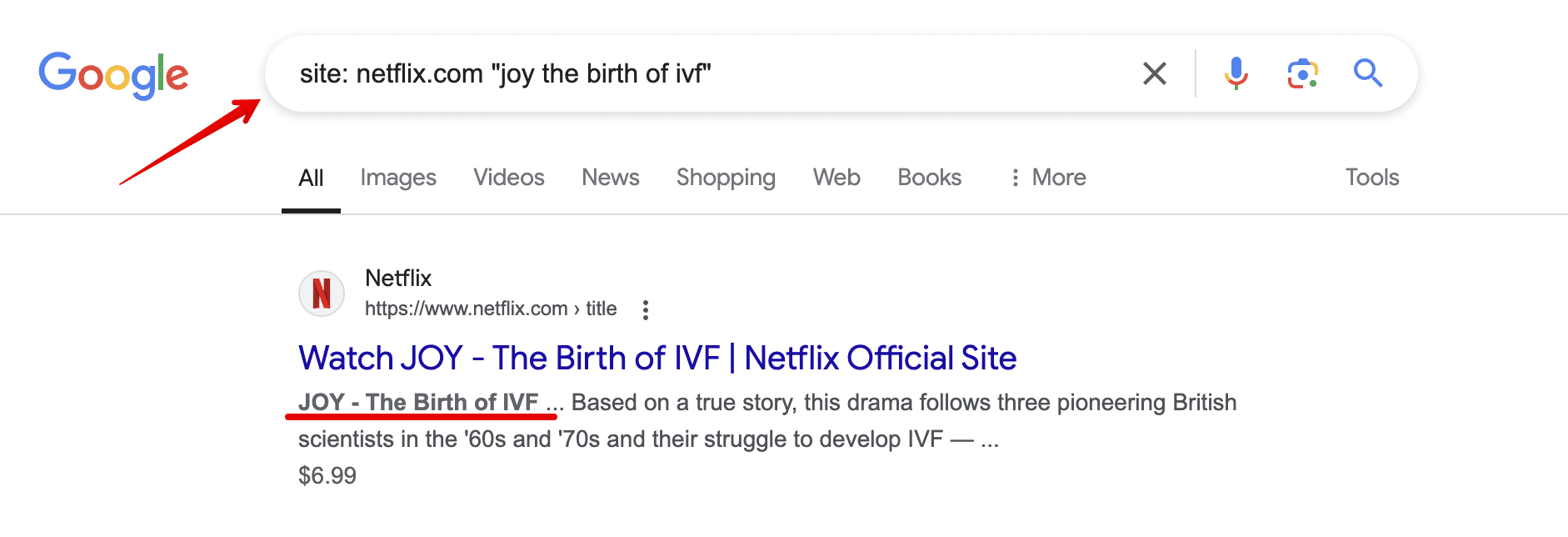

- You can check direct quotes of your content in the SERP to see whether the page containing that text is indexed.

- You can use the site: command to check your URLs in the SERP.

Finally, you can combine both. Enter site:yourdomain.com “content quote” in the search bar, and if the content is crawled and indexed, you’ll see it in the search results.

There’s no way around basic SEO

Aside from the more unique challenges associated with single page applications, common optimization techniques still apply. Here are some basic elements of SEO you should optimize SPAs for:

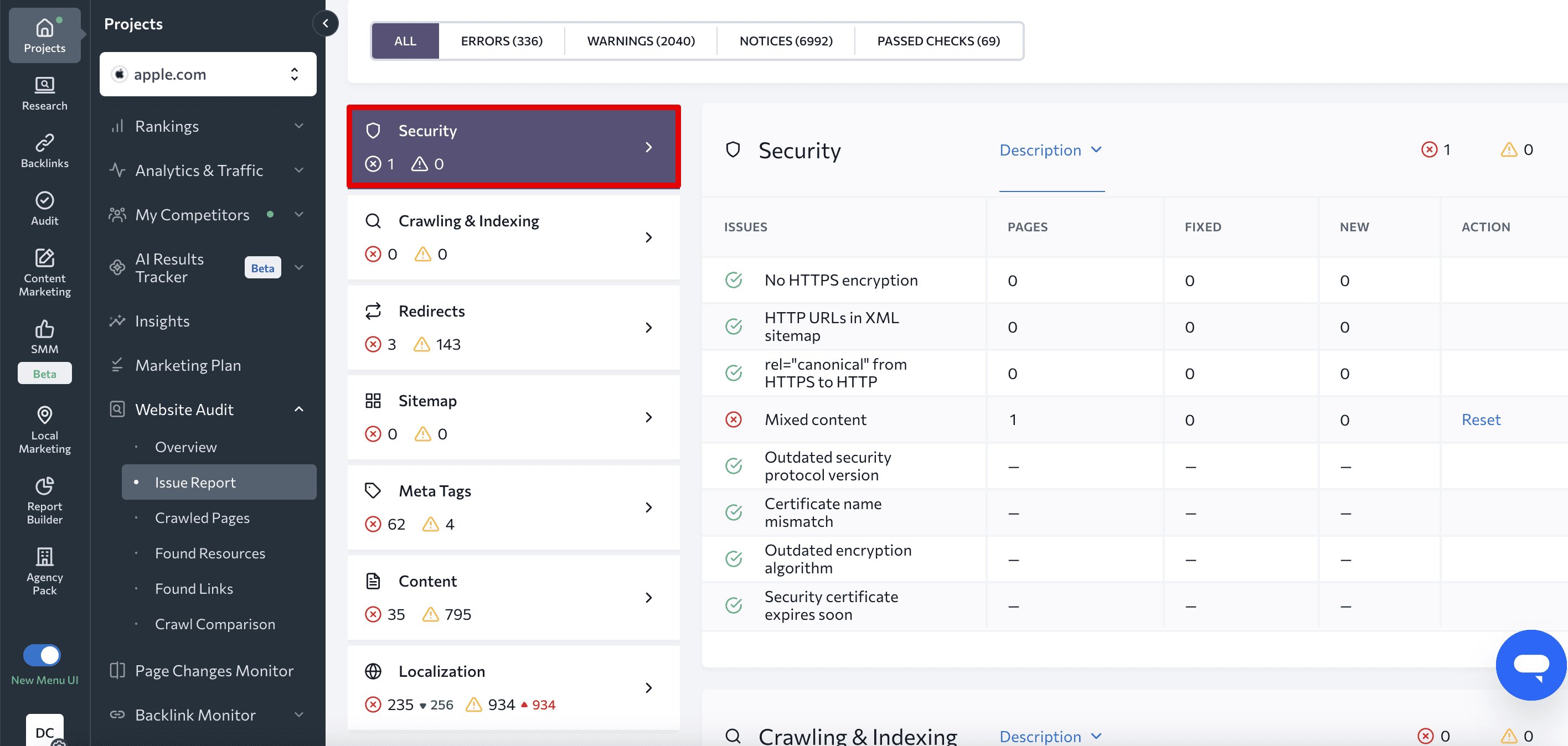

- Security. Your website should be protected with HTTPS. Without it, search engines may deprioritize your site, and any user data it handles could be at risk. Never cross website security off your to-do list, as it requires regular monitoring. Check your SSL/TLS certificate for critical errors with SE Ranking’s Website Audit tool regularly to make sure your website can be safely accessed.

- Content optimization. We’ve talked about specific measures for optimizing content in SPAs, such as writing unique title tags and description meta tags for each view, similar to how you would for each page on a multi-page website. But you must optimize your content properly before taking the above measures. Your content should be tailored to the right user intents, well-organized, visually appealing, and rich in helpful information. If you haven’t collected a keyword list for the site, it will be challenging to deliver the content your visitors need. Read our guide on keyword research to learn more.

- Link building. Backlinks are key signals to Google about how much other resources trust your website. Because of this, building a backlink profile is a vital part of your site’s SEO. No two backlinks are alike, and each link pointing to your website holds a different value. While some backlinks can significantly boost your rankings, spammy ones can damage your search presence. Consider learning more about backlink quality and following best practices to strengthen your link profile.

- Competitor monitoring. You’ve most likely already conducted research on your competitors during the early stages of your website’s development. However, as with any SEO and marketing tasks, it is important to continually monitor your niche. Thanks to data-rich tools, you can easily monitor rivals’ strategies in organic and paid search. This allows you to evaluate the market landscape, spot fluctuations among major competitors, and draw inspiration from successful keywords or campaigns that already work for similar sites.

Tracking single page applications

Track SPA with GA4

Tracking user behavior on SPA websites can be challenging, but GA4 has the tools to handle it. Using GA4 for SEO helps you better understand how users engage with your website, identify areas for improvement, and make data-driven decisions to improve user experience.

If you still haven’t installed Google Analytics, read our GA4 setup guide to learn how to do it quickly and correctly.

Once you are ready to proceed, follow the next steps:

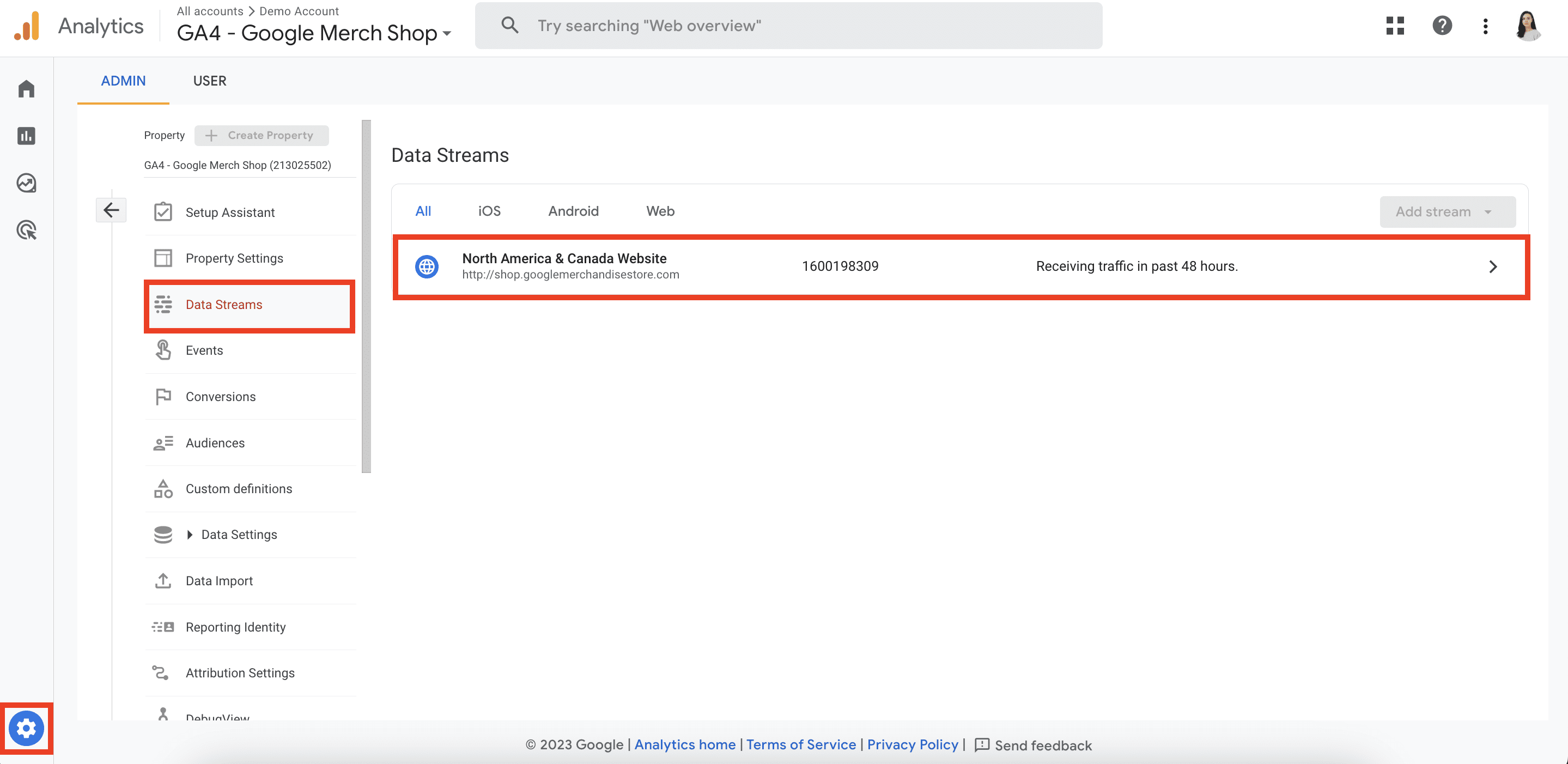

- Go to your GA4 account and open Data Streams in the Admin section. Click on your web data stream.

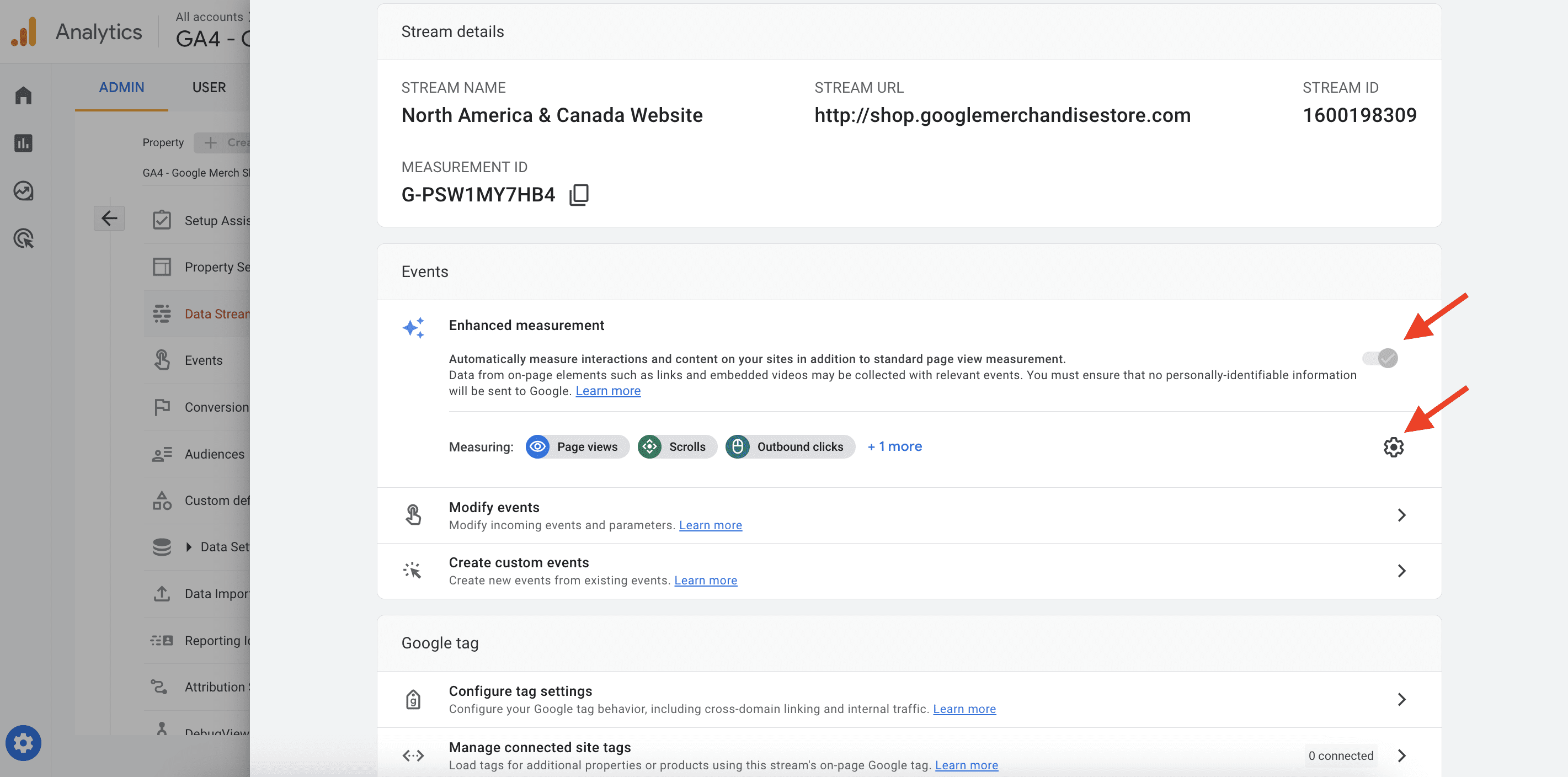

- Make sure that the Enhanced Measurement toggle is enabled. Click the gear icon.

- Open the advanced settings within the Page views section and enable the Page changes based on browser history events setting. Remember to save the changes. Disable all default tracking options unrelated to pageviews, as they might affect accuracy.

- Open Google Tag Manager and enable the Preview and Debug mode.

- Navigate through different pages on your SPA website.

- In the Preview mode, the GTM container will show you the History Change events.

- If you click on your GA4 measurement ID next to the GTM container in the preview mode, you will see multiple Page View events sent to GA4.

If these steps work, GA4 can then track your SPA website. Here are some additional steps to follow if it still doesn’t track your site:

- Implement the history change trigger in GTM.

- Ask developers to add a dataLayer.push call that fires on each view change.
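The dataLayer.push option could look roughly like the sketch below. The event name `virtual_pageview` and the `page_path` and `page_title` keys are hypothetical; they must match the custom event trigger and variables you configure in GTM.

```javascript
// Push a virtual pageview into the GTM data layer after each view change.
// "virtual_pageview", "page_path", and "page_title" are hypothetical names.
function pushVirtualPageview(dataLayer, path, title) {
  const event = { event: "virtual_pageview", page_path: path, page_title: title };
  dataLayer.push(event);
  return event;
}

// Browser usage: GTM reads from window.dataLayer.
if (typeof window !== "undefined") {
  window.dataLayer = window.dataLayer || [];
  pushVirtualPageview(window.dataLayer, window.location.pathname, document.title);
}
```

In an SPA, the call belongs in the router’s navigation handler so that it runs once per view change. Avoid combining it with the history-based page view tracking for the same navigation, or pageviews may be counted twice.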

Track SPA with SE Ranking’s Rank Tracker

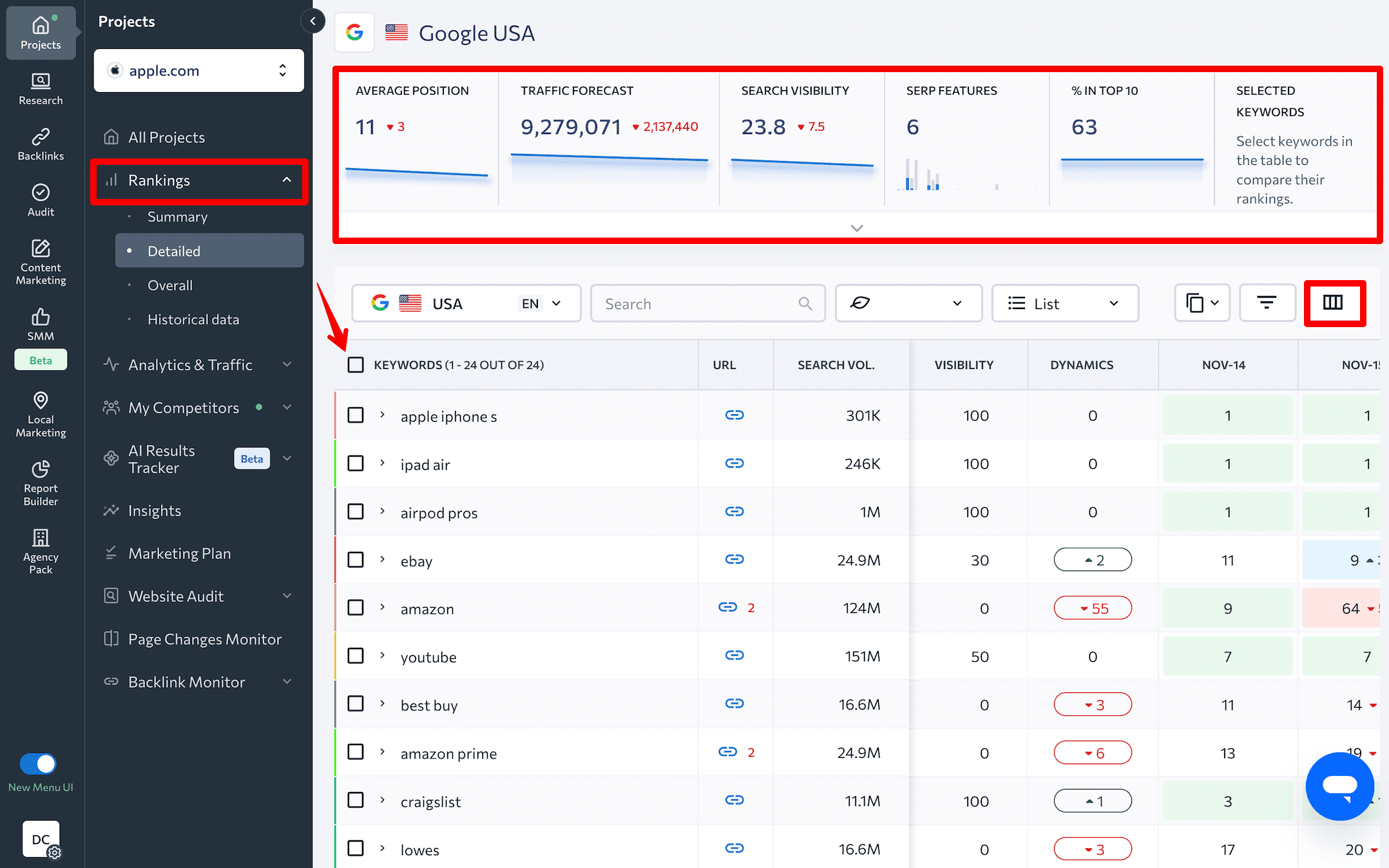

Another comprehensive tracking tool is SE Ranking’s Rank Tracker. This tool allows you to check how your single page applications rank for your target keywords, and it can even check them across multiple geographical locations, devices, and languages. This tool supports tracking on popular search engines such as Google, Google Mobile, Yahoo!, and Bing.

To start tracking, create a project for your website on the SE Ranking platform, add keywords, choose search engines, and specify competitors.

Once your project is set up, go to the Rankings tab, which consists of several reports:

- Summary

- Detailed

- Overall

- Historical Data

We’ll focus on the default Detailed tab. It will likely be the first report you see after adding your project. The top of this section displays your SPA’s:

- Average position

- Traffic forecast

- Search visibility

- SERP features

- % in top 10

- Keyword list

The keyword table beneath these graphs provides information on each keyword your website ranks for. It includes details on the target URL, search volume, SERP features, ranking dynamics, and so on. You can customize the table with additional parameters in the Columns section.

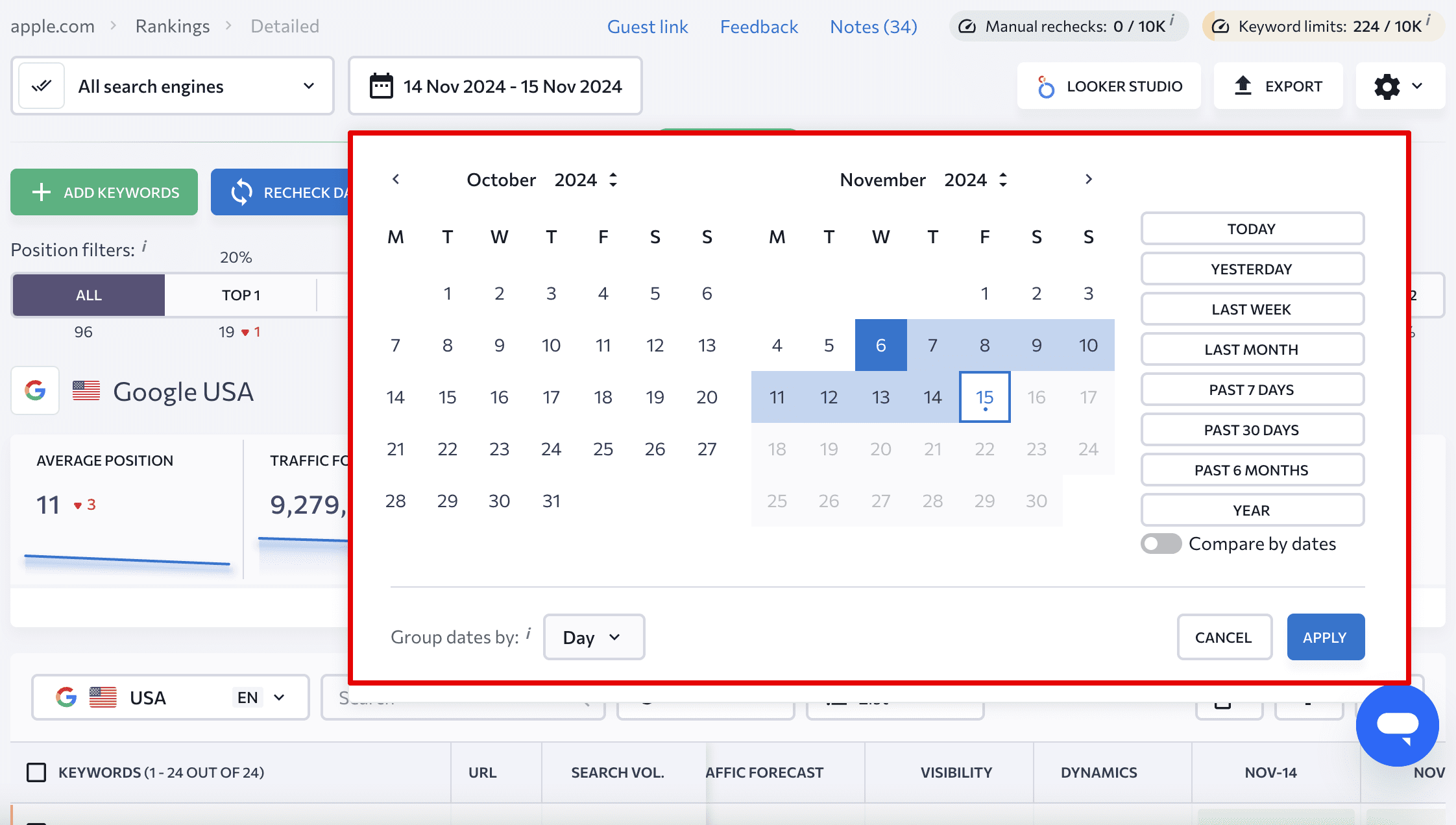

The tool lets you filter your keywords by preferred parameter. You can also set target URLs and tags, see ranking data for different dates, and even compare results.

Keyword Rank Tracker provides you with two additional reports:

- Your website ranking data: This includes all search engines added to your project. These can be found in a single tab, labeled as Detailed.

- Historical information: This includes data on fluctuations in your website rankings since the baseline date. Navigate to the Historical data tab to find this info.

For more how-to information on monitoring website positions, read our guide on rank tracking in different search engines.

Single page app websites done right

Now that you know all the ins and outs of SEO for single page apps, the next step is to put theory into action. Make your content easily accessible to crawlers and watch as your website shines in the eyes of search engines. While providing visitors with dynamic content loading, blazing speed, and seamless navigation, it’s also important to remember to present a static version to search engines. You’ll also want to make sure that you have a correct sitemap, use distinct URLs instead of fragment identifiers, and label different content types with structured data.

The rise of single-page experiences powered by JavaScript caters to the demands of modern users who crave immediate interaction with web content. To maintain the UX-centered benefits of SPAs while achieving high rankings in search, developers are switching to what Airbnb’s engineer Spike Brehm calls “the hard way”—skillfully balancing the client and server aspects of web development.