What is SEO?

What does SEO stand for in marketing? SEO stands for search engine optimization. It means getting a web page to appear in the search results in order to receive organic traffic from searchers. Every day, billions of people type queries into search engines to find useful information. To be precise, Google alone receives over 99,000 requests per second! The number of searches keeps growing, which gives websites a large audience they can potentially convert into regular visitors or paying customers. At its core, SEO is about learning the rules for getting to the top of the search results and complying with those rules.

How does searching work?

When you open any search engine and type the query you’re interested in, you’ll see a list of the first 10 results, which can be organized in different ways. This list is called the SERP (search engine results page). There might be more than 10 results if the SERP includes paid ads displayed above, below, or to the right of the organic results.

The place where a page stands among the results is its search engine ranking: the higher the ranking, the more trust the page has and, probably, the more relevant it is to the query. To decide the order in which pages appear, search engines evaluate hundreds of ranking factors, which we will cover later. The ranking process happens organically: in contrast to paid ads, you can’t pay Google or other engines to place a website higher or re-evaluate it more regularly.

If you’re an active searcher who knows and notices all the features of the search results page and the differences between different types of queries, you can skip this part and move to the section about crawling and indexing.

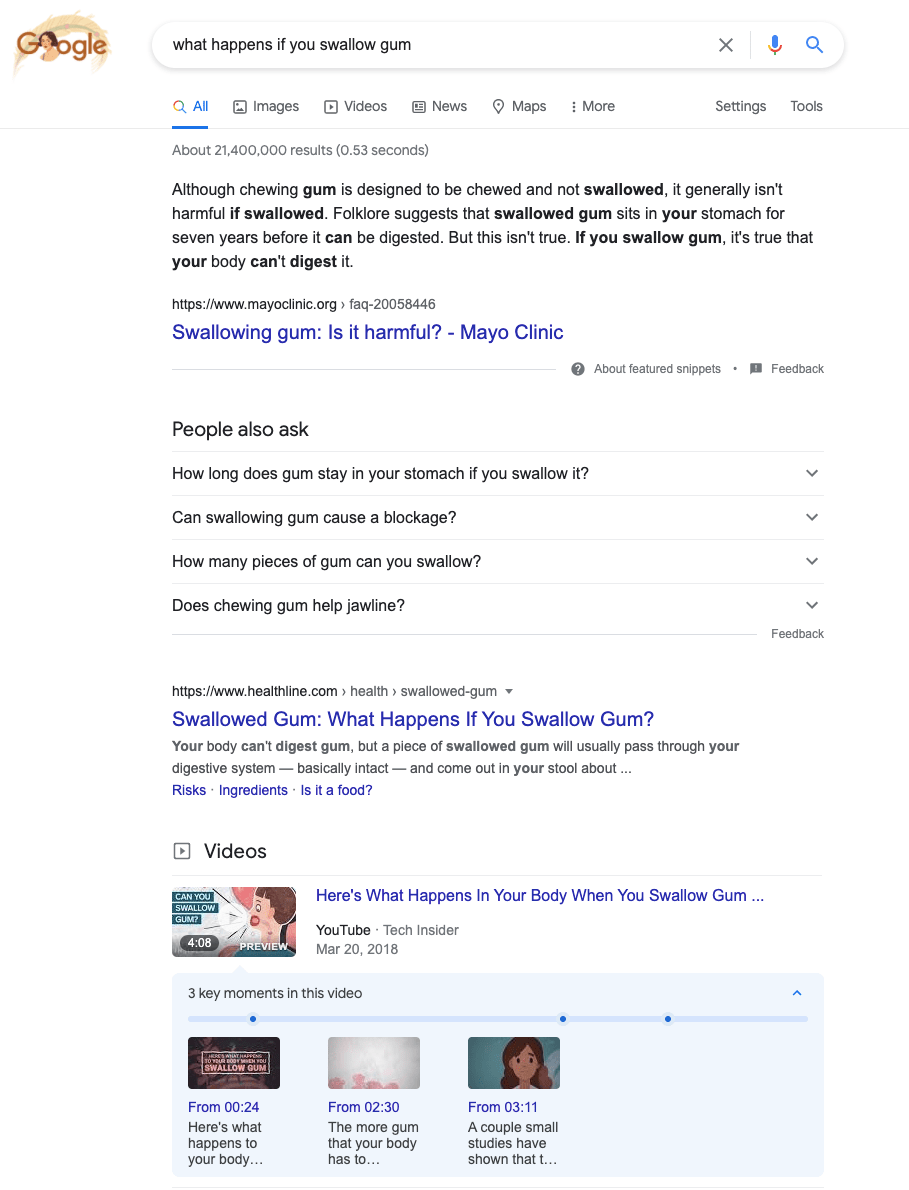

For now, let’s see how different types of searches work. The more unambiguous the query is, the bigger the chances of finding the right information quickly. For example, if you format your query as a question, you’ll probably get a lot of SERP features (which are distinctive blocks of information that help navigate through the search).

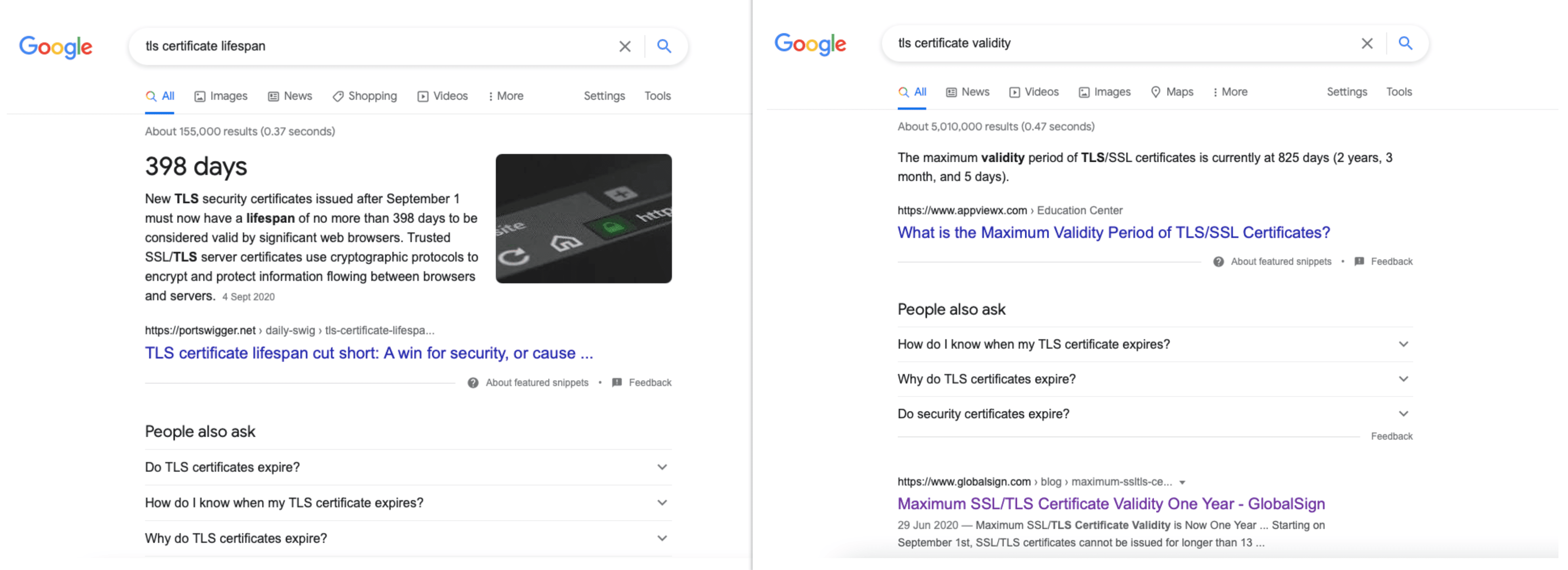

In the screenshot above, you can see the following:

- a short answer (Google pulls the most relevant information out of a page and puts it in a text block above the link)

- a list of similar searches (the “People also ask” section collects requests relevant to the topic)

- a list of suggested videos (Google is even capable of breaking a featured video into several parts)

As you can see, you get the short answer right from the search results and then you can click on different types of sources to find out more details. So, the system is designed to cater to searchers’ needs as quickly as possible and provide them with the most authoritative and helpful information.

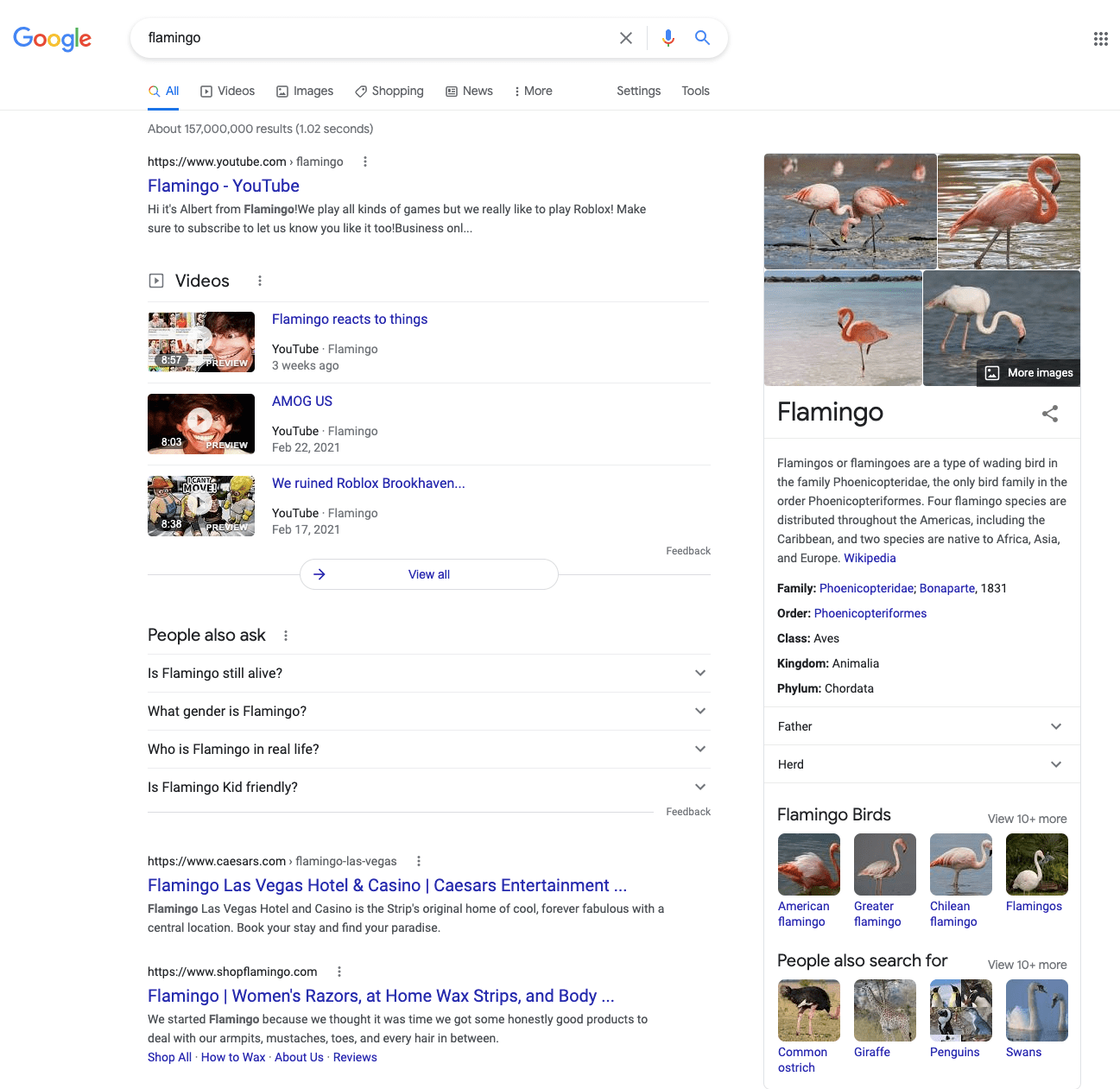

Now, let’s see what happens if you type a very general query:

When you’re using just one word and it’s not a super-unique phenomenon, Google might get confused and give you very different results. As you can see from the screenshot, searching for “flamingo” will show the eponymous YouTube channel, hotel, and body care brand together with images of flamingo birds. If you’re looking for something different, you need to make the query more specific.

With that said, search engines work to understand searchers and give them the most relevant sources. The search features are improving to make it easier for users to jump to the best suitable result. What it means for website owners is that they have to research the queries that might interest their target audience and place them on the website. But more importantly, they have to make that content really helpful so that when people click on the website, they receive exactly what they were hoping to find.

This was a very generic explanation of how search works as seen by users. Now, let’s dive into the inner mechanics of search as performed by search engines.

How do search engines organize web pages in the search?

For websites to get to the SERPs, they need to be easily discoverable by search engines. Even if you provide high-quality content that corresponds to what people search for, you won’t be able to rank high without getting discovered through the processes of crawling and indexing.

The mechanics behind rankings work in the same manner among search engines. Since Google is the most vocal about its tools and processes, we’ll illustrate crawling and indexing based on Google’s explanations.

What is crawling?

The process of establishing that a certain web page is out there on the web is called crawling. Simply put, crawling means being visited and scanned by a search engine.

Search engines act as directories of all existing websites but, as you might imagine, they need to update their databases all the time: there are over 1.7 billion sites with more than 500,000 being added each day. So, how does a search engine know that a web page is out there?

- It has already visited the page.

- It follows the link from a known page to a new one.

- It processes a list of pages in a sitemap.

- It follows the instructions from CMSs about new or updated pages.

- It gets data from domain registrars and hosting providers.
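Two of the discovery routes listed above, following links from known pages and reading a sitemap, can be sketched in a few lines of Python. This is only an illustration under simple assumptions, not how Google's crawler is implemented: the function names, the `example.com` URLs, and the in-memory `PAGES` dictionary (standing in for pages that would really be fetched over the network) are all hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
import xml.etree.ElementTree as ET


class LinkExtractor(HTMLParser):
    """Collects absolute URLs from <a href="..."> tags on one page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, href))


def links_from_page(base_url, html):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


def urls_from_sitemap(sitemap_xml):
    """Reads <loc> entries from a sitemap in the sitemaps.org format."""
    ns = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", ns)]


def discover(known_urls, pages, sitemap_xml):
    """Returns URLs not yet known, found via links or the sitemap."""
    found = set(urls_from_sitemap(sitemap_xml))
    for url, html in pages.items():
        found.update(links_from_page(url, html))
    return sorted(found - set(known_urls))


# Hypothetical sample data standing in for fetched pages and a sitemap file.
PAGES = {
    "https://example.com/": '<a href="/blog">Blog</a> <a href="https://example.com/about">About</a>',
}
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
</urlset>"""

print(discover(["https://example.com/"], PAGES, SITEMAP))
```

With the home page already known, the sketch reports the about, blog, and pricing pages as newly discovered: two through links and one through the sitemap.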

What is indexing?

After finding a new page, a search engine analyzes the content on the page, its structure and layout to rank it for relevant queries. The process of understanding what that page is about is called indexing. The better Google or another engine understands the page, the better it is for that page.

Elements a search engine scans to understand a page:

- its overall structure

- title and description tags (these are HTML tags that don’t appear on the page itself but are shown in the search results)

- headings

- text

- images (or any other visual content)
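To make the list above more concrete, here is a rough sketch of how those elements could be pulled out of a page's HTML using Python's standard `html.parser` module. It is a simplified illustration, not a description of any search engine's real indexing pipeline; the `PageSummary` class, the `summarize` function, and the sample markup are hypothetical names made up for this example.

```python
from html.parser import HTMLParser


class PageSummary(HTMLParser):
    """Collects the title, meta description, headings, and image alt text."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.headings = []     # list of (tag, text) pairs
        self.image_alts = []
        self._current = None   # tag whose text is being collected
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title" or tag in self.HEADINGS:
            self._current = tag
            self._buffer = []
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""
        elif tag == "img":
            self.image_alts.append(attrs.get("alt") or "")

    def handle_data(self, data):
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            # Join the collected text and collapse runs of whitespace.
            text = " ".join("".join(self._buffer).split())
            if tag == "title":
                self.title = text
            else:
                self.headings.append((tag, text))
            self._current = None


def summarize(html):
    parser = PageSummary()
    parser.feed(html)
    return parser


SAMPLE_HTML = """<html><head><title>What is SEO?</title>
<meta name="description" content="A beginner's guide to SEO.">
</head><body><h1>What is SEO?</h1><h2>How does searching work?</h2>
<img src="serp.png" alt="Example SERP"></body></html>"""

page = summarize(SAMPLE_HTML)
print(page.title, page.description, page.headings, page.image_alts)
```

Running a script like this against your own pages is a quick way to spot a missing title, an empty description, or images without alt text.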

As a result of this process, a web page gets into the index, which is a database of all web content. To maintain its huge databases, Google, for instance, has millions of servers across 21 data center locations around the globe.

What is search engine ranking?

Each time a user types a query, a search engine pulls information from the index, trying to match the search intent with the highest quality answers. The order of results shown for each particular query is called ranking. Search engines evaluate a number of parameters to define the order—these parameters are called ranking factors or ranking signals and we’ll talk about them later. Rankings fluctuate all the time because search engines crawl and index a lot of new web pages and re-index already existing ones. To keep an eye on such dynamics, you can rely on specialized tools like SE Ranking’s SEO Rank Tracker.
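The idea of pulling matching pages from an index and ordering them can be illustrated with a toy inverted index. This is a deliberately naive sketch: it scores pages only by how many distinct query words they contain, whereas real search engines weigh a large number of ranking signals. The function names and the sample pages are hypothetical.

```python
from collections import defaultdict


def tokenize(text):
    """Lowercases text and splits it into alphanumeric words."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return cleaned.split()


def build_index(documents):
    """Maps each word to the set of page URLs that contain it."""
    index = defaultdict(set)
    for url, text in documents.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def search(index, query):
    """Orders pages by how many distinct query words they contain."""
    scores = defaultdict(int)
    for word in set(tokenize(query)):
        for url in index.get(word, ()):
            scores[url] += 1
    # Highest score first; ties are broken alphabetically by URL.
    return sorted(scores, key=lambda url: (-scores[url], url))


# Hypothetical pages, echoing the ambiguous "flamingo" query from earlier.
DOCS = {
    "/flamingo-birds": "Flamingo birds are pink wading birds",
    "/flamingo-hotel": "The Flamingo hotel in Las Vegas",
    "/hotels": "Find a hotel near you",
}

index = build_index(DOCS)
print(search(index, "flamingo hotel"))
```

In this sample, the page that mentions both "flamingo" and "hotel" comes first, which mirrors the earlier point that a more specific query narrows the results down.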

Check out our guide on tracking search engine rankings to learn how to effectively monitor and analyze your ranking positions.

Why do you need SEO?

Let’s get back to the term SEO for a bit. It was already in use in the 1990s, even though at that time search engines functioned as directories and there wasn’t much websites could do to “optimize.” Over the last few decades, multiple attempts have been made to rebrand SEO or bury it, but it doesn’t seem realistic that the practice of optimization will disappear any time soon.

Search engines not only process tons of web sources to serve their rankings—they also put efforts into improving their algorithms and, ultimately, making search a better place. Innovative technologies are reshaping the search landscape, adding new opportunities for users and learning to better understand different searches. Despite the fact that the term SEO doesn’t say a thing about real users, the practice of SEO is actually about working to the user’s benefit.

SEO is a powerful tool to reach out to your target audience. Organic search is the most effective channel for promoting a website—research has shown that it offers a 20 times higher traffic opportunity than paid search. What benefits does search optimization offer?

- Target traffic. Organic search is a primary source of web traffic and above that, it can connect websites with their exact target. Ranking high, you’ll be getting visitors who are likely to be interested in your content and products or services.

- Online reputation. Getting cited and mentioned on different sources increases the level of trust users place on your site.

- Control over website quality. When you’re doing everything right for SEO, it means that your website is secure, fast, easy-to-use, and reliable—in other words, it’s delightful for visitors.

- Cost-efficient promotion. Even though SEO does require financial investment and it takes time for the results to show, it is cheaper than other marketing methods.

To attract visitors and achieve the goals you pursue with a website (increase brand awareness, conversions, etc.), you’ll need to follow the best SEO practices and regularly monitor your website’s performance against major SEO parameters.

To help you get an idea of how much you can achieve thanks to optimization, we’ve prepared this interactive chatbot with some key facts and stats together with our partners at ThinkEngine. If this data doesn’t persuade you of SEO’s fabulous possibilities, we don’t know what will 🙂

Why is SEO important for marketing?

There are numerous reasons why SEO is an essential part of most inbound marketing strategies. Investing resources in SEO, for example, helps increase your site’s search visibility and brand awareness, boosts organic traffic, attracts new visitors and transforms them into leads. So, let’s take a look at some of the most important reasons why you should include SEO in your marketing strategy.

Brand awareness. You may have the best product in the world, but it will never sell if your customers can’t find it. Users can pick from dozens of competing search results on Google to find the one that fits their needs best, but almost 70% of them will stick with one of the top 5 SERP suggestions. To create a winning SEO marketing strategy, you’ll need to embrace competition and fight for your place in the sun, that is, for search visibility.

Organic traffic. We’ve already pointed out in the previous paragraph that smart SEO is the best way to increase traffic to your website. With the correct SEO and digital marketing techniques, you can reach top search positions faster, get more site visitors, and turn them into customers. In other words, SEO makes traffic boom and businesses bloom.

Conversions and profits. Modern SEO focuses on user experience and site usability rather than raw data and traffic. By applying the best SEO and CRO techniques to your website and making user sessions more convenient, you can easily increase conversion rates and, you guessed it, increase profits. As an added bonus, search engines will regard your site as user-friendly and will reward you with higher search positions, allowing you to drive even more traffic and potential customers. This means double the profit!

Long-term investment. Another advantage of SEO is that it requires fewer funds and resources than other marketing channels while also being a long-term, accumulative investment. This means it carries a lower financial risk and has a colossal ROI potential.

As you can see, combining search engine optimization and marketing can take your business to the next level. Sadly, many marketers continue to ignore SEO and miss out on an almost endless list of opportunities. We are confident, however, that our dear readers are not among them. Now, let’s take a look at how to integrate SEO with your marketing efforts to help your website rank higher on SERPs.

What is considered as a ranking factor?

Let’s see how exactly to get liked by search engines. The aspects of web pages whose evaluation impacts the position in the SERPs are called ranking factors. In the early days, search engines would rank websites based on domain parameters, basic structure, and keywords. If a page had a lot of target keywords, it would succeed in the search. Since then, the situation has changed a lot. Search engines have been evolving to address user needs more effectively, pushing back low-quality pages and eliminating manipulative SEO techniques. Let’s explore the major ranking signals in the context of search engine updates and improvements.

In an introductory article about how to get a website liked by a search engine, Google names 3 things:

- Answering user intents. High-quality content that gives the information users are searching for is key to high rankings.

- Getting links from other websites. Naturally placed links from external sources tell search engines that a website can be trusted and contains valuable information.

- Ensuring accessibility. A logical structure that doesn’t have orphan or dead-end pages (that don’t have any links pointing to them or from them) helps search engines understand a website.
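The accessibility point above can be checked mechanically once you have a map of a site's internal links. The sketch below assumes such a map is already available as a dictionary (for example, exported from a site audit tool); the function name and the sample site are hypothetical.

```python
def find_orphans_and_dead_ends(links, home):
    """links maps each page to the list of internal pages it links to."""
    pages = set(links)
    for targets in links.values():
        pages.update(targets)

    # Pages that receive at least one link from a different page.
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source
    }
    # Orphans: no other page links to them (the home page is the entry point).
    orphans = sorted(p for p in pages if p not in linked_to and p != home)
    # Dead ends: pages with no outgoing internal links.
    dead_ends = sorted(p for p in pages if not links.get(p))
    return orphans, dead_ends


# Hypothetical internal link map of a small site.
SITE = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/", "/blog/seo-basics"],
    "/blog/seo-basics": ["/blog"],
    "/pricing": [],
    "/old-landing": ["/"],
}

orphans, dead_ends = find_orphans_and_dead_ends(SITE, home="/")
print("orphans:", orphans)
print("dead ends:", dead_ends)
```

Here `/old-landing` is reported as an orphan because nothing links to it, and `/pricing` as a dead end because it links nowhere.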

Naturally, these factors are just the tip of the iceberg, but they alone tell a lot about how to succeed in the search: you should focus on providing the most helpful information, building credibility, and making your website easily crawlable. Let’s dig a little deeper into how these and other vital aspects worked in the past and how they work now.

If you don’t want to read the details about different algorithms and search evolution, go to the rundown of ranking factors relevant for 2021.

How important is the content on a web page?

You may have heard the phrase “content is king.” It has been on the tongues of marketers since 1996, when Bill Gates expressed his belief that content was where impact and money would be made on the internet. Content is still the number one ranking factor, one you will find mentioned in any SEO checklist, but there’s a question of how exactly search engines learn that one page contains more useful information than another page.

In the early days of web search, it was hard to find results matching an overly abstract or badly formulated query. But people come to search engines not necessarily knowing how to formulate what they’re looking for, and they expect to see the most relevant results anyway. To adapt the system to how people actually search, Google and its competitors have been improving their algorithms.

Several times, Google has said that it was able to understand searches “better than ever before.” The first time, it was about the 2013 Hummingbird update. This algorithm update marked the switch to semantic search, which meant that Google understood the intent behind a query and was able to distinguish subtopics related to a query. Spamming pages with keywords for better search visibility was over for good. What counted was no longer the number of exact keyword matches but the content’s relevance to the search intent.

In 2019, Google once again announced a leap in its understanding of how users search. Investing in language understanding research, Google developed a new natural language processing technique called BERT that analyzes the context around individual words. The engine is able to decipher misspelled queries and distill passages from web pages that answer particular queries, and to shift rankings based on this analysis. So optimizing for semantic search is essential for SEO.

Still, search algorithms aren’t perfect. The system may understand what you’re asking and what topics and subtopics you might be interested in but still provide you with outdated information. For example, when I typed “TLS certificate lifespan” into Google, the featured snippet included the correct result, but when I typed “TLS certificate validity,” meaning to find the exact same answer, the SERP highlighted information that is no longer relevant.

With all that said about search engines evolving to match user intents with the best possible answers, the primary task for web content is to be helpful. But it’s not the only thing to consider. Apart from being relevant to user intents, web content has to be:

- Up-to-date. Since the 2010 Caffeine update, Google has been favoring fresher results. In this regard, it’s important to update your content if something has changed in what you wrote about. Also, monitoring industry trends and turning the latest news into content pieces might give you a win over competitors. Note that the frequency of publishing new content isn’t a ranking factor but naturally, if your website doesn’t get any new content in a significant period of time, its rankings are likely to drop.

- Written by experts. The level of expertise demonstrated in the content directly translates to ranking distribution. Google features particular guidelines called EAT (Expertise, Authoritativeness, and Trustworthiness) for credibility of web sources assessed at the level of author, page, and website. To be perceived well against EAT parameters, information on a page should be accurate, the whole website should be reliable, and author(s) should be recognized as industry experts. Another quality system called YMYL (Your Money or Your Life) is applied to searches in one way or another related to health, financial details, and safety. Google requires the highest expertise from the YMYL pages to rank well.

- Compliant with regulations. Search engines may remove web pages from the index when their content violates legal regulations or copyright policies or exploits personal data (for example, by publishing identifiable information without user consent).

Another thing about content is its readability. This issue has been debated for years. At some point, Google even launched a reading level filter so that users could stick to content they were likely to understand. However, the feature wasn’t very helpful and eventually, Google discontinued it. Many SEO and marketing specialists believe that the easier it is to digest content, the better it is for both search engines and website visitors. The content has to be comprehensible for sure, but it’s not considered the best practice to make it as easy to read as possible. Google’s John Mueller states that it’s best to use “the language of your audience,” which makes a lot of sense: if you’re targeting tech-savvy customers, for example, you can afford to go all out with technical details.

How do backlinks take part in the ranking process?

In the late 1990s, analysts were thinking about how to incorporate the value of hyperlinks into the ranking system. For the first time, off-page factors (external to the page itself) were put to use. Google’s Larry Page and Sergey Brin developed the revolutionary PageRank algorithm that counted links pointing to a website from an external source (now known as backlinks) as “votes.” Google wasn’t the first search engine to consider links, but it was the pioneer in creating a working algorithm for assessing them.
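The "links as votes" idea can be illustrated with the simplified, textbook form of PageRank, computed by repeated redistribution of scores. This is a sketch of the commonly taught formulation, not Google's production algorithm; the damping value of 0.85 is the one usually quoted for the original method, and the four-page link graph is hypothetical.

```python
def pagerank(links, damping=0.85, iterations=50):
    """links maps each page to the list of pages it links out to."""
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page keeps a small baseline share regardless of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            # A page without outgoing links spreads its score evenly.
            targets = links.get(page) or pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical link graph: pages a, b, and d all link to c; c links back to a.
WEB = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}

for page, score in sorted(pagerank(WEB).items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

Page `c`, which collects "votes" from three pages, ends up with the highest score, and `a` comes second because its single backlink comes from that strong page. Pages `b` and `d`, which nobody links to, keep only the baseline share.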

Websites started aggressively acquiring backlinks and participating in link exchanges, leaving Google no choice but to crack down on excessive link schemes and prioritize quality over quantity. Now, it’s important to build a safe backlink profile by getting links from the most trusted sources and removing the spammy ones. Websites are placed higher not because they have a lot of backlinks (as was the case in the early 2000s) but because they have relevant links from authoritative pages.

How does loading speed matter?

In 2010, Google announced that site speed had become a ranking factor. Websites with loading speed issues were getting increased bounce rates and therefore lower positions in the SERPs. For a decade, website owners have been trying to provide visitors with the quickest possible page loads. While speed is undoubtedly an important factor in a website’s performance, the focus has recently shifted to ensuring fast interaction with the first rendered content (instead of guaranteeing a fast load of the full page).

Since June 2021, Google’s page experience signals, which include Core Web Vitals, have directly influenced rankings. These signals are all aimed at improved user experience and engagement with web content. They include safe browsing, mobile-friendly design, and metrics of interactivity and stability of web pages. If the first visible part of a page loads fast, quickly becomes interactive, and isn’t disrupted by ads or popups shifting the layout, the page will have great Core Web Vitals measurements, which helps it get placed high in the search.
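As a rough illustration of what "great measurements" means, the sketch below rates a page against the three Core Web Vitals as they were defined at the 2021 rollout. The metric names (Largest Contentful Paint, First Input Delay, Cumulative Layout Shift) and the thresholds are not taken from the text above; they are the values Google published at the time, and the metric set has been revised since, so treat them as assumptions and check the current documentation. The function names and sample measurements are hypothetical.

```python
# (good_limit, poor_limit) per metric, as published for the 2021 rollout.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),  # Largest Contentful Paint: loading
    "fid_ms": (100, 300),       # First Input Delay: interactivity
    "cls": (0.1, 0.25),         # Cumulative Layout Shift: visual stability
}


def rate_metric(name, value):
    """Rates one measurement as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[name]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def rate_page(measurements):
    """Rates every measurement in a {metric_name: value} dictionary."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}


# Hypothetical measurements for one page.
print(rate_page({"lcp_seconds": 2.1, "fid_ms": 180, "cls": 0.31}))
```

For this sample page, loading is rated good, interactivity needs improvement, and layout stability is poor, so the layout shifts would be the first thing to fix.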

What role does user experience play in optimization?

Google has been using traffic data to serve rankings, and the more people visit a certain website, the more trustworthy it gets in the eyes of Google. Not only website visits but user engagement with content is taken into account.

Starting from the RankBrain update, Google has been observing user experience signals, evaluating how easy a page is to interact with. The search engine analyzes how many clicks a search result gets and how much time users spend exploring it. If Google learns that a web page gets lots of clicks and high engagement, this page will get a ranking boost. By contrast, websites that are difficult to navigate and push users back to the SERPs aren’t likely to get high rankings. Some aspects of page optimization (meta description tags, for example) don’t directly affect the rankings, but they still have some influence because they impact the number of clicks a page gets from the SERPs.

Do websites have to be mobile-friendly?

Mobile search has been dominant since 2015 and from the same year, Google has been boosting those pages that have a mobile-friendly layout. In 2016, the search engine introduced mobile-first indexing, meaning that the index was going to be built primarily based on mobile versions of websites. To stay afloat, modern websites need to provide a robust mobile experience. It’s best to adopt responsive design that adjusts the layout to any device and screen size.

So, what are the major ranking factors?

Summing up all of the above and adding some SEO parameters that haven’t significantly changed over the years, here are the 10 most important aspects that influence a website’s rankings:

- Domain metrics. The history and validity of a domain matter for search engine rankings. Google looks into registration and renewal dates, placing more trust in those domains that are paid for several years in advance. If a domain is not brand new, its previous history might damage the new website occupying the domain. On the other hand, old domains with penalty-free history may carry more value for search engines, but not significantly.

- Site security. Security is an aspect you never want to compromise. Google has been using HTTPS as a ranking signal since 2014, and other search engines are also prioritizing safe connections. If a website is served over HTTP, loads some of its resources via HTTP, relies on outdated cryptography, or has any other SSL/TLS vulnerability that might expose user data, it decreases the level of trust and harms SEO.

- Mobile optimization. Searches performed via mobile devices have been dominant since 2015 and continue increasing. Mobile-friendliness is part of ranking factors across all search engines and your website isn’t likely to survive the competition without a functional mobile version.

- Loading speed. Loading speed is important for ranking well but there are specific parameters related to website load that matter more than the speed of load per se. These are Core Web Vitals that measure the time when a web page becomes accessible and interactive, as well as the impact of possible layout shifts.

- Site structure. The availability of a properly formatted sitemap and a comprehensive website structure broken into categories are crucial for good rankings. It’s important to be consistent in the site’s architecture and use navigational elements such as breadcrumbs or table of contents.

- Content. When the content on a page is well-structured, well-researched, and comprehensible to the target audience, the page has a high chance of ranking at the top. Search engines value originality, overall quality, usefulness, and freshness of content. Apart from text, they scan any other type of content on web pages (images, GIFs, videos, PDF files), so those should also be original, high-resolution, and relevant to the context. Content that is considered unsafe, is copied from other sources, or appears to be duplicated throughout a website sends negative ranking signals.

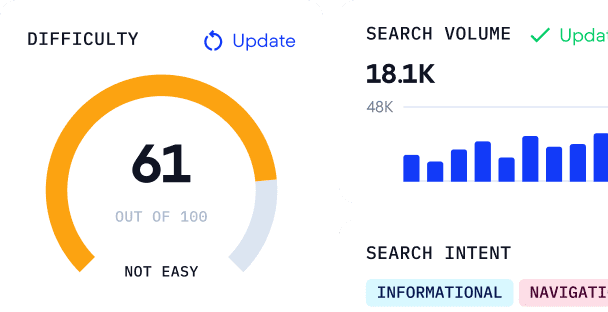

- Keywords. Different studies show that pages tend to perform better in search when they have a keyword placed at the beginning of the title tag, in the H1 heading (and in H2-H6 too), and throughout the page content (especially in the first 100 words). Besides that, a keyword in the URL is a small positive ranking factor, and some SEO practitioners believe that a keyword in the first word of the domain name or in the subdomain can boost rankings.

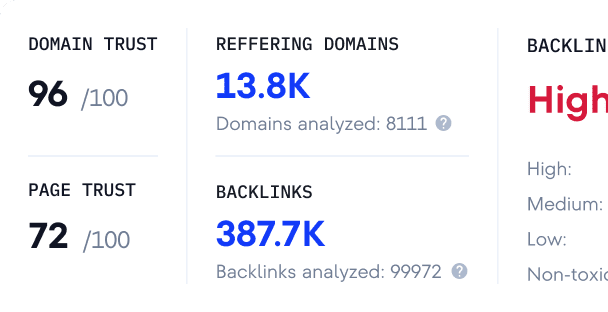

- Backlink profile. Having trustworthy sources linking to your website is vital for SEO. Search engines evaluate the number of referring domains and pages, their relevance to the linked content, and their overall quality and age. When building a backlink profile, make sure that you get links from diverse websites, all of high quality and related to your niche. Also, check that they aren’t dead. Use tools like Broken Link Checker to detect links that no longer work and replace them. Another thing to pay attention to is anchor text, as it helps search engines understand what the linked page is about. Some link building techniques or mistakes can lead to website penalization: excessive link exchange; a lot of spammy or irrelevant links; and paid partner links or user-placed links that aren’t marked as Sponsored and UGC, respectively.

- On-page links. Links that you put in the website’s content also impact search engine rankings. Even though external links aren’t an official ranking signal, referring to authoritative sources helps send trust signals. In turn, linking to your own pages helps establish relations between them. Internal linking allows for accurate crawling, and the way link juice is distributed across the website tells search engines which pages carry more value than others. It’s important to track the links: if they get broken and remain on the website, they act as negative ranking signals.

- User experience. UX factors influence rankings: for example, Google’s algorithms evaluate websites’ click-through rate and pogo sticking. If users don’t return to the search results shortly after clicking on a page and spend enough time exploring the content of that page, it’s definitely good for search presence. Interstitials like popups and intrusive ads placed on a page may damage user experience and hurt rankings.
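The site security item in the list above mentions pages where some resources are still loaded via HTTP. A basic check for that situation could look like the sketch below. It is a simplified illustration that only inspects a handful of tags in static HTML; the class name, the function name, the tag selection, and the sample markup are assumptions made for this example, and a real audit tool would cover far more cases.

```python
from html.parser import HTMLParser

# Tags that load a resource, and the attribute that holds its URL.
RESOURCE_ATTRS = {"img": "src", "script": "src", "iframe": "src", "link": "href"}


class MixedContentFinder(HTMLParser):
    """Collects resources that a page requests over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        attr_name = RESOURCE_ATTRS.get(tag)
        if attr_name is None:
            return
        # Skip <link> tags that are not stylesheets (canonical, alternate, etc.).
        if tag == "link" and "stylesheet" not in (attrs.get("rel") or "").lower():
            return
        url = (attrs.get(attr_name) or "").strip()
        if url.lower().startswith("http://"):
            self.insecure.append((tag, url))


def find_mixed_content(page_url, html):
    """Returns insecure resources only if the page itself is served over HTTPS."""
    if not page_url.lower().startswith("https://"):
        return []
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page markup.
SAMPLE = (
    '<link rel="stylesheet" href="https://example.com/site.css">'
    '<img src="http://example.com/logo.png">'
    '<script src="//cdn.example.com/app.js"></script>'
    '<a href="http://example.org/">An ordinary link</a>'
)

print(find_mixed_content("https://example.com/", SAMPLE))
```

Only the image is reported here: the stylesheet already uses HTTPS, the protocol-relative script inherits the page's scheme, and the ordinary hyperlink is not a resource the page loads.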

How dominant is Google and what about other search engines?

The history of web search didn’t start with Google. Proto-engines were born in the university environment starting from 1990, when Alan Emtage launched Archie, a public directory of FTP sites. In 1994, Stanford University students Jerry Yang and David Filo created Yahoo. It was a directory to which websites were submitted manually. Some other search engines also saw the light of day that year, but they failed to make any real impact.

In 1996, Stanford University students Larry Page and Sergey Brin built a new search engine called Backrub, which eventually was renamed and registered as Google. Starting from 2000, Google powered Yahoo’s search results, which was the beginning of the end for Yahoo’s fame.

In 1998, the world saw a prototypical paid search: the Goto service, developed by a Pasadena-based incubator, allowed advertisers to place bids to be shown above organic results. In 2000, Google introduced AdWords, now known as Google Ads. Paid ads and organic rankings co-exist in the SERPs but are independent of each other. With paid ads in different search engines, websites get featured according to how much they pay for each click, while in organic search, you can’t pay to get featured or improve your rankings.

It wasn’t until 2009 that today’s biggest rival of Google was introduced: Microsoft Live Search turned into Bing. At the time, this search engine had some innovative features, for example, dedicated sections for different types of search (shopping, travel, local business, health) or a preview of results visible on hover. Despite these helpful features, it didn’t come close to Google in the number of searches.

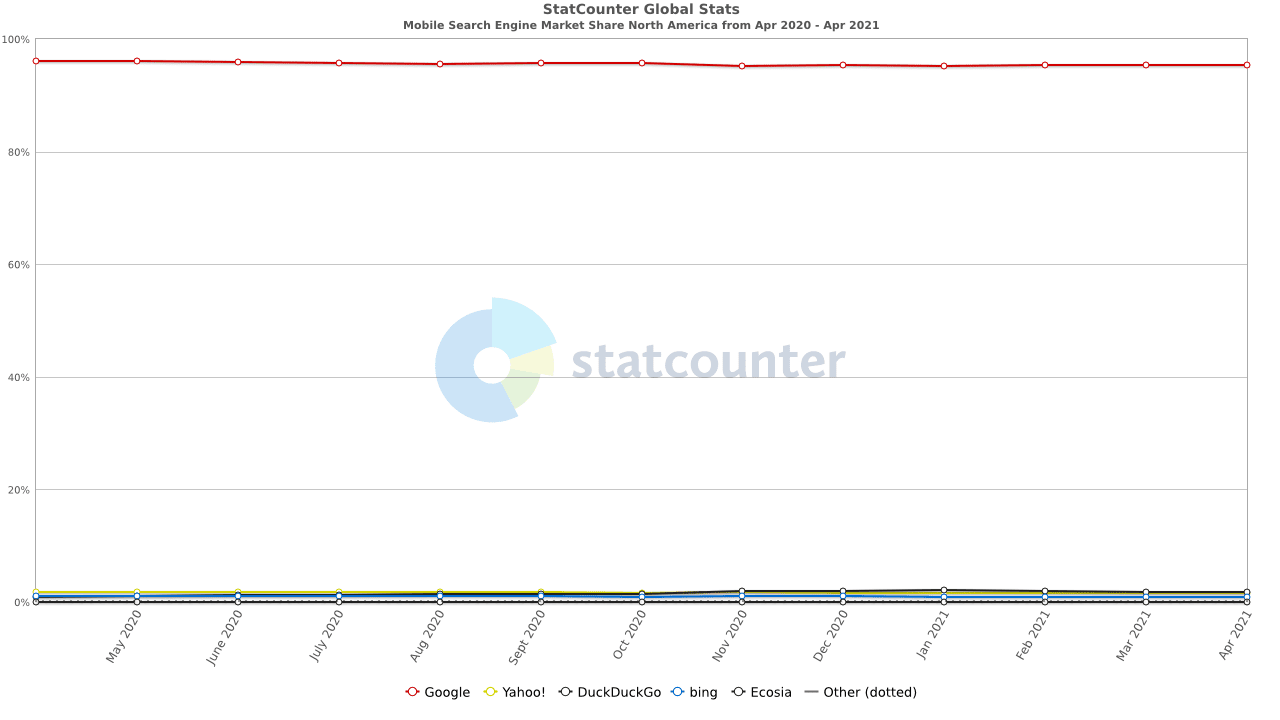

Over the years, all the other engines have failed to outperform the search giant. 2018 statistics show that around 70% of all web traffic comes from search engines, Google being responsible for almost 60%. Search engine comparison by StatCounter demonstrates how far behind Google leaves its competitors: it accounts for 95.8% of mobile searches in North America (95.4% worldwide). On desktops, users Google their queries in 87% of cases, Bing receives a desktop share of over 10% in North America and over 6% in Europe, and Yahoo and DuckDuckGo get around 1-3% of searchers’ attention.

Nevertheless, many alternatives to Google exist and they attract the audience that isn’t satisfied with Google’s policies, especially regarding privacy. DuckDuckGo is the most popular privacy-minded search engine used by people concerned with how much of their data is being tracked on a daily basis and sold to advertisers. The engine incorporates data from 400+ sources (including crowd-sourced sites and Bing and excluding Google), while its own crawler, DuckDuckBot, does its job by prioritizing the most secure sites. In January 2021, DuckDuckGo hit 100 million searches per day. It’s still not as many as Google, Bing, and Yahoo receive, but the tendency is clear: there are a lot of people seeking a more private and safety-first searching experience.

DuckDuckGo is not the only privacy-centered search engine alternative:

- StartPage doesn’t record IP addresses and doesn’t have cookies, but the fact that its results are coming from Google raises a red flag. Its share is pretty small compared to big search engines—StartPage gets 4.5 million searches per day.

- Qwant doesn’t collect user data and provides results gathered from Bing and by its own crawler. Qwant offers useful shortcuts that work similarly to Google’s operators and also has dedicated search sections (maps, music, scientific and medical information). In 2020, Qwant announced a new course toward monetization and creating an ad platform, raising doubts about its pro-privacy approach.

- The privacy-focused browser Brave launched its own search engine in 2021. It serves results from its own crawler and has a system that analyzes the usefulness of pages in search without tracking user-specific data.

User tracking might seem like the biggest issue with Google and other search giants, but it’s not the only problem that pushes other companies to create alternatives. For example, Ecosia wishes to minimize the carbon footprint by running servers on solar plants and donating 80% of its profits to planting trees. Or, Switzerland-based Swisscows focuses on safe, family-friendly content and automatically removes any violent or explicit results.

Even though the numbers suggest it’s hard, if not impossible, to take a slice of Google’s pie, companies still plan on conquering the search with their new search engines. Ahrefs’ founder believes that their system will be able to compete with Google. Ahrefs’ vision is centered around two things: privacy (which is already a big driver for Google alternatives) and profit sharing (distributing massive earnings across powerful platforms like Wikipedia that publish content that is widely popular in search but isn’t meant to be monetized). The idea sounds intriguing and a little too good to be true; what will come of it remains to be seen.

It’s your choice whether to target other search engines. Their crawling and indexing mechanics are pretty much the same so if you take care of basic SEO, your website will likely be shown in the results of different search engines. But if you know that some part of your target audience prefers some alternative to Google, you might want to do a little extra research on how to please that particular search engine.

To sum up

Here’s a short summary of what we’ve discussed in this article:

- SEO (search engine optimization) is the practice of getting a website shown in search results for particular user queries. The higher the position among the results, the more target traffic a website receives.

- SEO is the most effective method to promote a website, get traffic, and build trust. A robust SEO strategy guarantees long-term success in attracting website visitors and converting them into customers.

- Search engines cater to the needs of users and evaluate web pages according to how helpful they are to searchers.

- To rank web pages, search engines first need to crawl (discover) and index (understand) them.

- Hundreds of factors influence rankings but most importantly, a web page should give the best possible answer to a user query. Apart from the quality and relevance of content, crucial parameters evaluated by search engines include security, site structure, user experience metrics, and backlinks.

- Google occupies the lion’s share of all web searches but websites can also target alternative search engines and receive valuable traffic from there.