How to spot duplicate content and fix it

Easy to create, hard to get rid of, and extremely harmful for your website—that’s duplicate content in a nutshell. But how badly does duplicate content actually hurt SEO? What are the technical and non-technical reasons behind the existence of duplicate content? And how can you find and fix it on the spot? Read on to get the answers to these key issues and more.

What is duplicate content?

Duplicate content is basically copy-pasted, recycled (or slightly tweaked), cloned or reused content that brings little to no value to users and confuses search engines. Content duplication occurs most often either within a single website or across different domains.

Having duplicate content within a single website means that multiple URLs on your website are displaying the same content (often unintentionally). This content usually takes the form of:

- Republished old blog posts with no added value.

- Pages with identical or slightly tweaked content.

- Scraped or aggregated content from other sources.

- AI-generated pages with poorly rewritten text.

Duplicated content across different domains means that you have content that shows up in more than one place across different external sites. This might look like:

- Scraped or stolen content published on other sites.

- Content that is distributed without permission.

- Identical or barely edited content on competing sites.

- Rewritten articles that are available on multiple sites.
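To make "identical or slightly tweaked" more concrete, here is a minimal sketch of one common way to measure text overlap: split each text into overlapping three-word "shingles" and compare the two sets with Jaccard similarity. The sample texts and the shingle size are assumptions chosen for illustration, and this is not how any particular search engine or tool scores duplication.

```python
import re


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical page texts used only for illustration.
original = "Our blue cotton shirt is soft, durable, and machine washable."
tweaked = "Our red cotton shirt is soft, durable, and machine washable."
unrelated = "Read our guide to choosing hiking boots for winter trails."

print(similarity(original, tweaked))
print(similarity(original, unrelated))
```

A score close to 1 suggests near-duplicate text, while a score near 0 suggests the pages have little in common. Where to draw the line is a judgment call.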

Does duplicate content hurt SEO?

The quick answer? Yes, it does. But the impact of duplicate content on SEO depends greatly on the context and the technical setup of the page you’re dealing with.

Having duplicate content on your site—say, very similar blog posts or product pages—can reduce the value and authority of that content, according to search engines. This is because search engines will have a tough time figuring out which page should rank higher. Not to mention, users will feel frustrated if they can’t find anything useful after landing on your page.

On the other hand, if another website takes or copies your content without permission (meaning you’re not syndicating your content), it likely will not directly harm your site’s performance or search visibility. As long as your content is the original version, it’s of high quality, and you make small tweaks to it over time, search engines will keep identifying your pages as such. The duplicate scraper site might take some traffic, but they almost certainly won’t outrank your original site in SERPs, according to Google’s explanations.

How Google approaches duplicate content

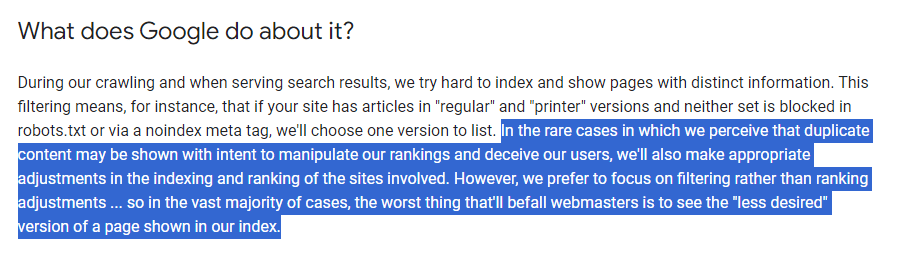

While Google officially says, “There’s no such thing as a ‘duplicate content penalty,’” there are always some buts, which means you should read between the lines. Even if there is no direct penalty, duplicate content can still hurt your SEO in indirect ways.

Specifically, Google sees it as a red flag if you intentionally scrape content from other sites and republish it without adding any new value. Google will try hard to identify the original version among similar pages and index that one. The rest will struggle to rank. This means that duplicate content can lead to lower rankings, reduced visibility, and less traffic.

Another “stop” case is when you try to create numerous pages, subdomains, or domains that all feature noticeably similar content. This can become another reason why your SEO performance declines.

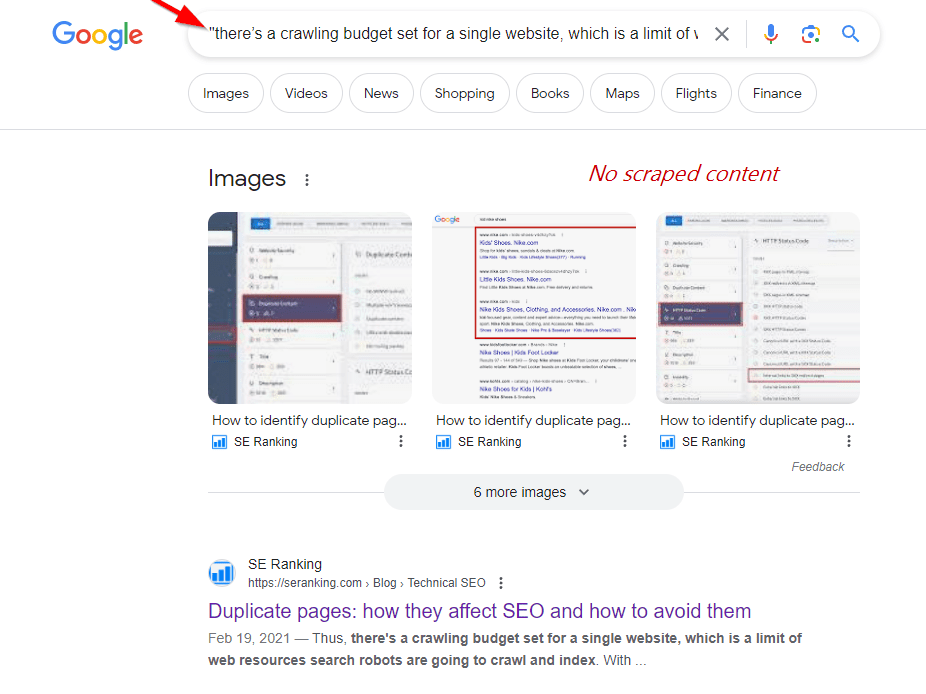

Plus, consider that any search engine is essentially a business. And like any business, it doesn’t want to waste its resources. Thus, there’s a crawl budget set for each website, which limits how many of its pages search robots will crawl and index. Googlebot’s crawl budget will be exhausted sooner if it has to spend time and resources on duplicate pages. This limits its chances of reaching the rest of your content.

One more “stop case” is when you are reposting affiliate content from Amazon or other websites while adding little in the way of unique value. By providing the exact same listings, you are letting Google handle these duplicate content issues for you. It will then make the necessary site indexing and ranking adjustments to the best of its ability.

This suggests that well-intentioned site owners won’t be penalized by Google if they run into technical problems on their site, as long as they don’t intentionally try to manipulate search results.

So, if you don’t make duplicate content on purpose, you’re good. Besides, as Matt Cutts said about how Google views duplicate content: “Something like 25 or 30% of all of the web’s content is duplicate content.” Like always, just stick to the golden rule: create unique and valuable content to facilitate better user experiences and search engine performance.

Duplicate and AI-generated content

Another growing issue to keep in mind today is content created with AI tools. This can easily become a content duplication minefield if you are not careful. One thing is clear: AI-generated content largely pulls together information from other places, often without adding any new value. If you use AI tools carelessly, just typing a prompt and copying the output, don’t be surprised if it gets flagged as duplicate content that ruins your SEO performance. Also remember that competitors can input similar prompts to produce very similar content.

Even if such AI content technically passes plagiarism checks (when reviewed by special tools), Google is capable of detecting text created with little added value, expertise, or original experience per its E-E-A-T standards. Though not a direct penalty, just know that using only AI-generated content may make it harder for your content to perform well in searches as its repetitive nature becomes noticeable over time.

To avoid duplicate content and SEO issues, it’s essential to ensure that all content on a website is unique and valuable. This can be achieved by creating original content, properly using canonical tags, and avoiding content scraping or other black hat SEO tactics.

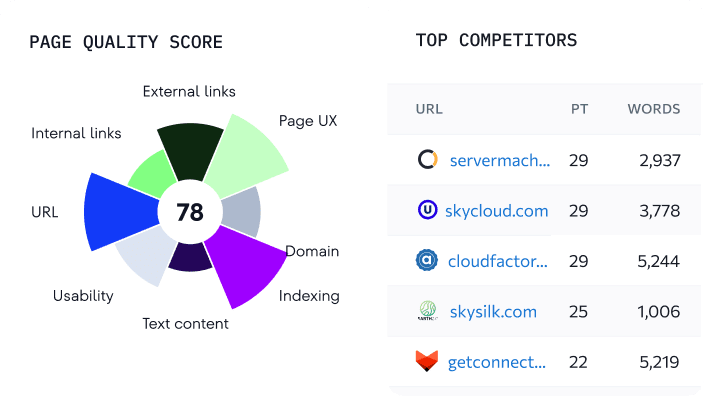

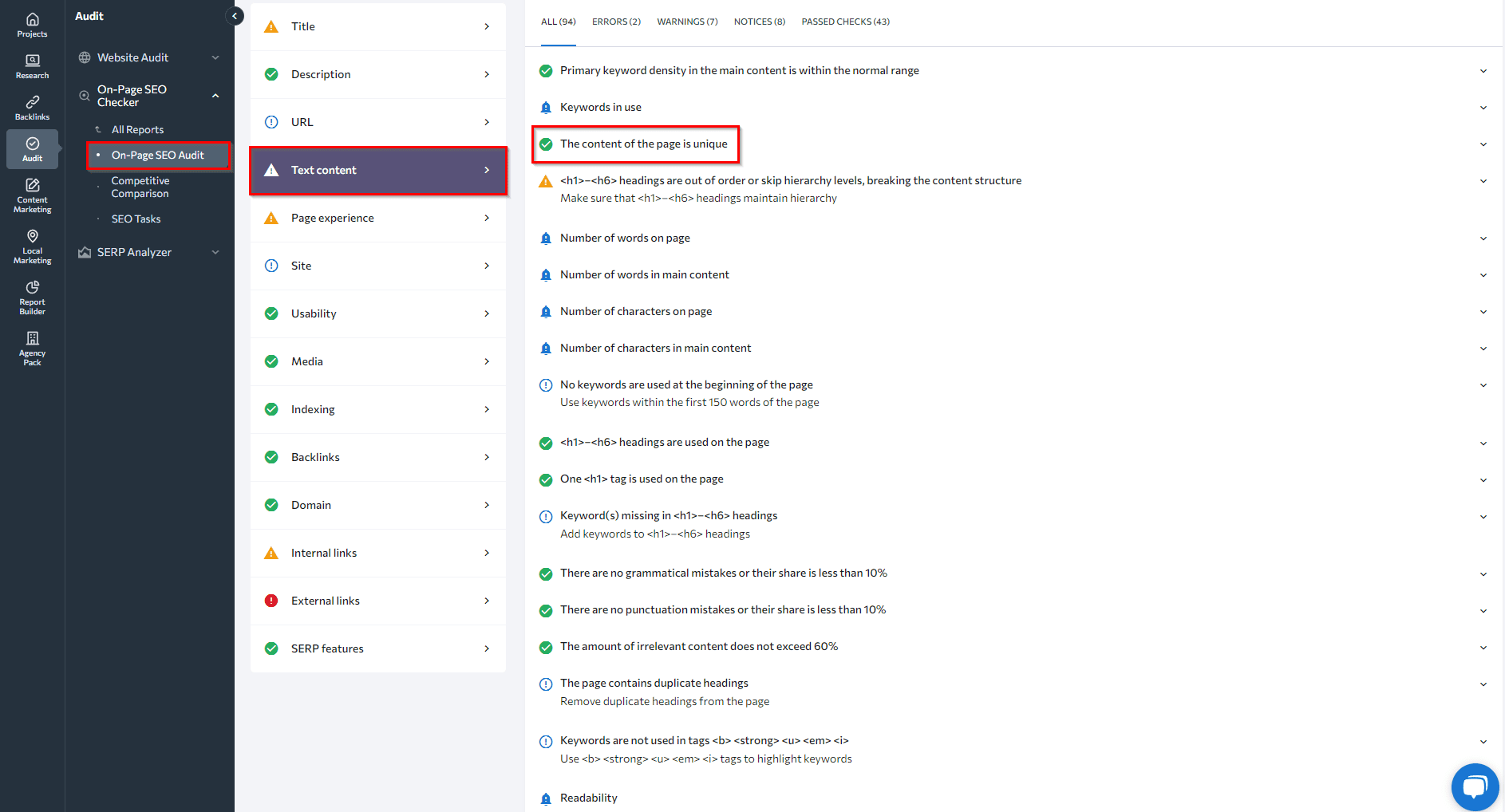

For instance, using SE Ranking’s On-Page SEO tool, you can perform a comprehensive analysis of content uniqueness, keyword density, the word count in comparison to top-ranking competitor pages, as well as the use of headings on the page. Besides the content itself, this tool analyzes key on-page elements like title tags, meta descriptions, heading tags, internal links, URL structure, and keywords. Thus, by leveraging this tool, you can produce content that is both unique and valuable.

Types of duplicate content

There are two types of duplicate content issues in the SEO space:

- Site-wide/Cross-domain Duplicate Content

This occurs when the same or very similar content is published across multiple pages of a site or across separate domains. For instance, an online store might use the same product descriptions on the main store.com, m.store.com, or localized domain version store.ca, leading to content duplication. If the duplicate content spans across two or more websites, this is a bigger issue that might require a different solution.

- Copied Content/Technical Issues

Duplicate content can arise from directly copying content to multiple places or technical problems causing the same content to show up at several different URLs. Examples include lack of canonical tags on URLs with parameters, duplicate pages without the noindex directive, and copied content that gets published without proper redirection. Without proper setup of canonical tags or redirects, search engines may index and try to rank near-identical versions of pages.

How to check for duplicate content issues

To start, let’s define the different methods for detecting duplicate content problems. If you’re focusing on issues within one domain, you can use:

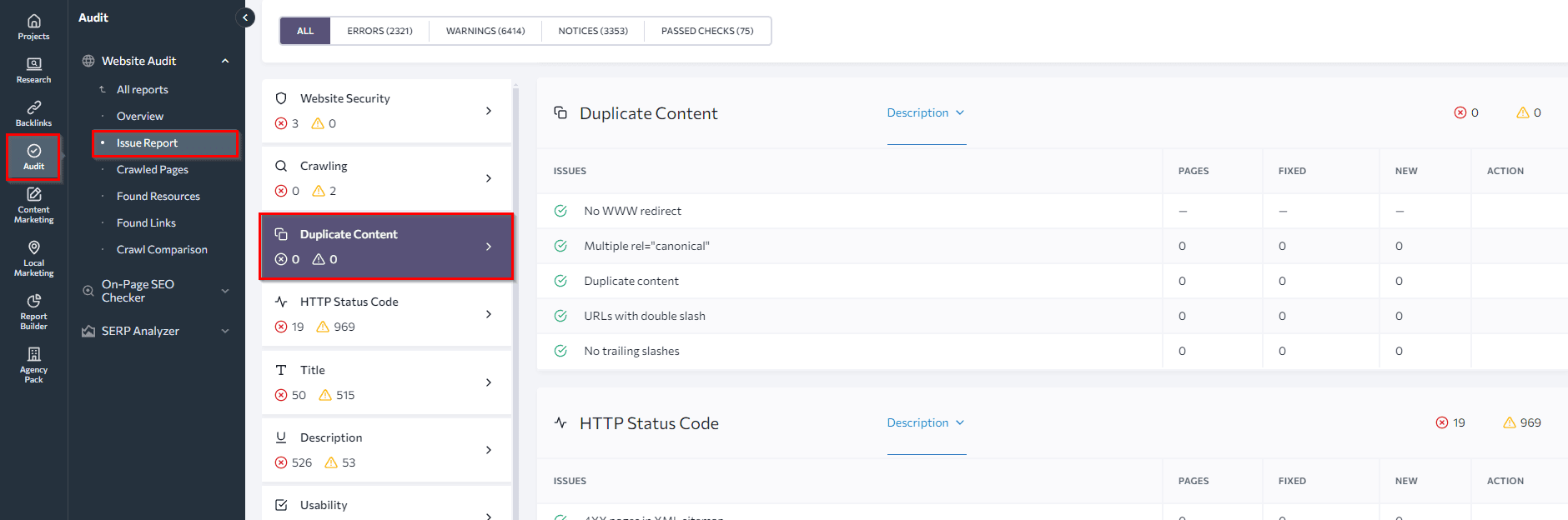

SE Ranking’s Website SEO Audit tool. This tool can help you find all web pages of your website, including duplicates. In the Duplicate Content section of our Website Audit tool, you’ll find a list of pages that contain the same content due to technical reasons: URLs accessible with and without www, with and without trailing slashes, etc. If you’ve used canonical tags to solve the duplication problem but happen to specify several canonical URLs for one page, the audit will highlight this mistake as well.

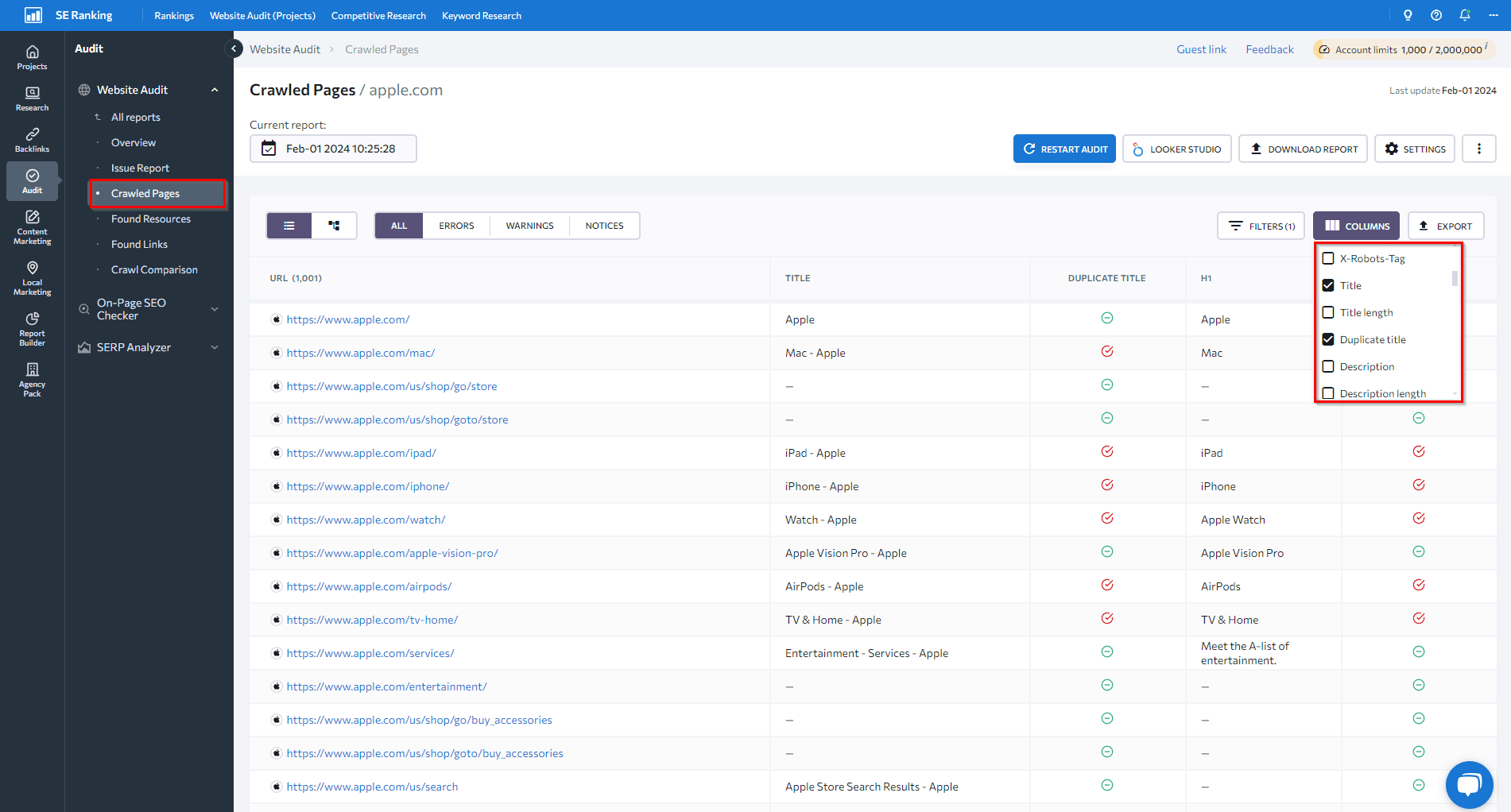

Next, look at the Crawled Pages tab below. You can find more signs of duplicate content issues by looking at pages with similar titles or heading tags. To get an overview, set up the columns you want to see.
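If you export crawled URLs and their titles, a few lines of code can surface the same signal. The sketch below groups URLs whose titles match after lowercasing and collapsing whitespace. The `crawled` mapping is made-up sample data, not the output format of any specific audit tool.

```python
from collections import defaultdict


def group_by_title(pages: dict) -> dict:
    """Map each normalized title to the URLs sharing it (groups of 2+ only)."""
    groups = defaultdict(list)
    for url, title in pages.items():
        # Normalize case and whitespace so trivial differences don't hide matches.
        key = " ".join(title.lower().split())
        groups[key].append(url)
    return {title: sorted(urls) for title, urls in groups.items() if len(urls) > 1}


# Hypothetical crawl export: URL -> <title> text.
crawled = {
    "https://example.com/shirts": "Blue Shirts | Store",
    "https://example.com/shirts/": "Blue shirts  | Store",
    "https://example.com/about": "About Us | Store",
}

suspects = group_by_title(crawled)
for title, urls in suspects.items():
    print(title, urls)
```

Matching titles are only a hint. Pages in a group still need a manual look to confirm that their body content is duplicated too.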

To find the best tool for your needs, explore SE Ranking’s audit tool and compare it with other website audit tools.

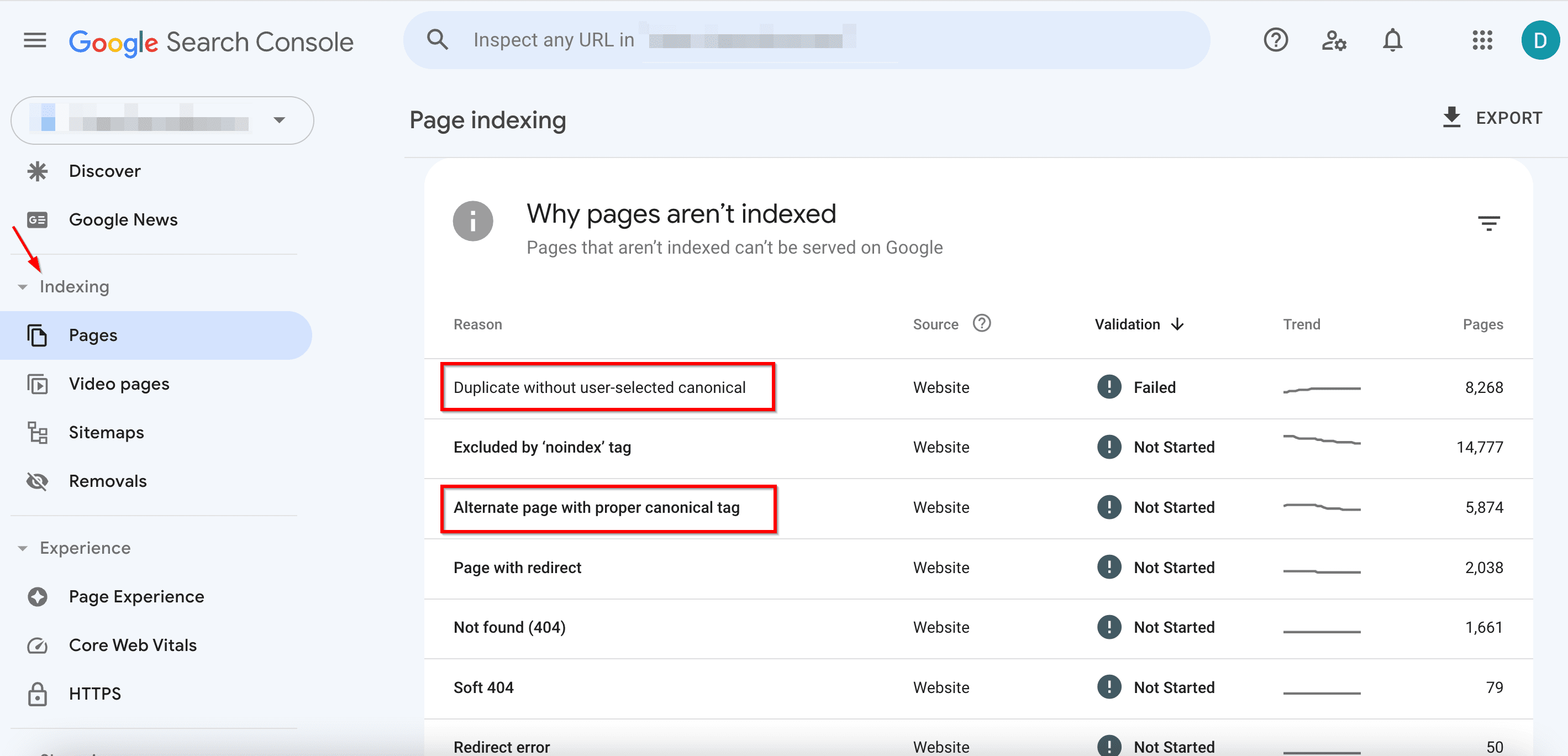

Google Search Console can also help you find identical content.

- In the Indexing tab, go to Pages.

- Pay attention to the following issues:

- Duplicate without user-selected canonical: Google found duplicate URLs without a preferred version set. Use the URL Inspection tool to find out which URL Google thinks is canonical for this page. Now, this isn’t an error. It happens because Google prefers not to show the same content twice. But if you think Google canonicalized the wrong URL, mark the canonical more explicitly. Or, if you don’t think this page is a duplicate of the canonical URL selected by Google, ensure that the two pages have clearly distinct content.

- Alternate page with proper canonical tag: Google sees this page as an alternate of another page. It could be an AMP page with a desktop canonical, a mobile version of a desktop canonical, or vice versa. This page links to the correct canonical page, which is indexed, so you don’t need to change anything. Keep in mind that Search Console doesn’t detect alternate language pages.

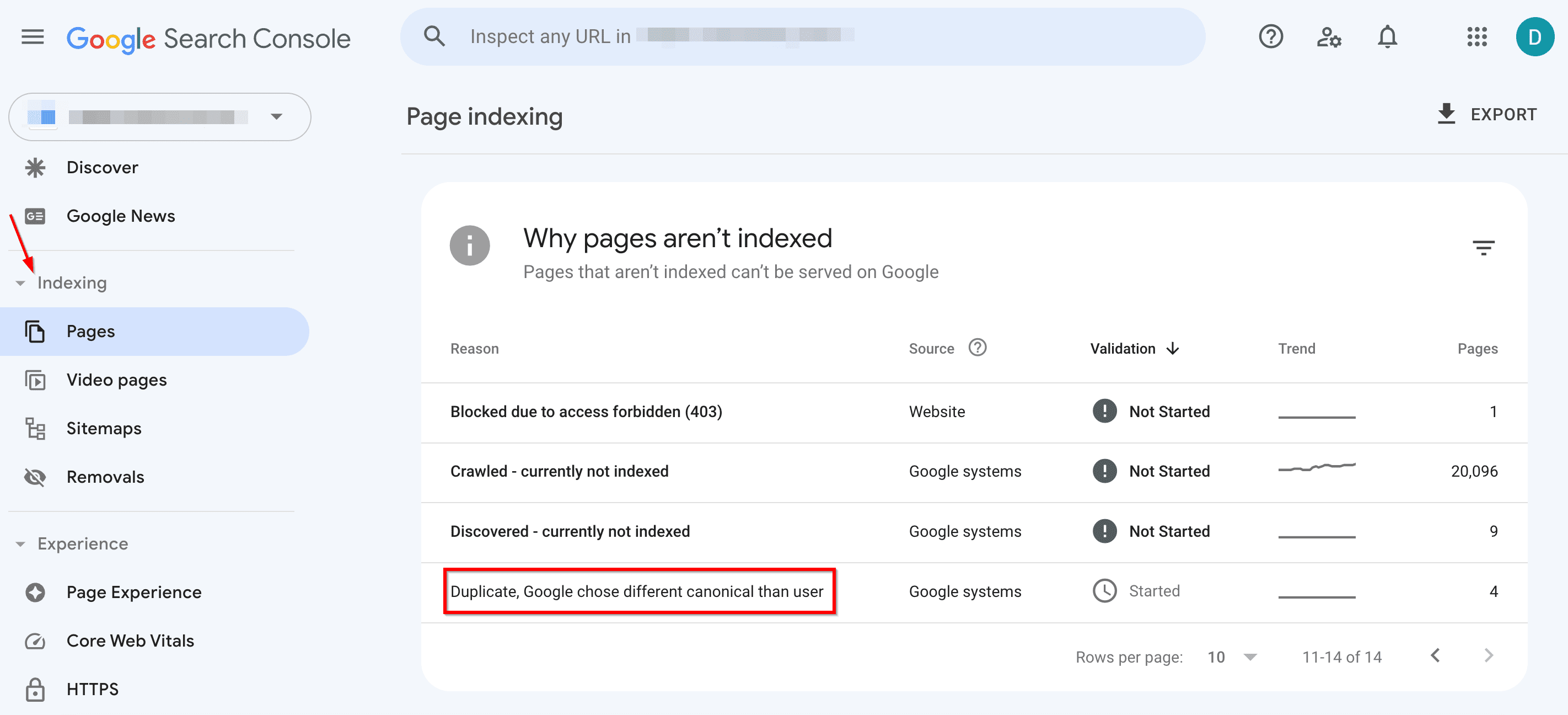

- Duplicate, Google chose different canonical than user: You marked this page as the canonical for a group of pages, but Google considers a different URL a more appropriate canonical. Google doesn’t index this page itself; instead, it indexes the one it considers canonical.

Pick one of the methods listed below to find duplication issues across different domains.

- Use Google Search: Search operators can be quite useful. Try googling a snippet of text from your page in quotes to see which other pages contain it. This is useful because scraped or syndicated content can sometimes outrank your original content.

- Use On-Page Checker to examine content on specific URLs: This complements your manual content audit. It uses 94 key parameters to assess your page, including content uniqueness.

To do this, scroll down to the Text Content tab within the On-Page SEO Checker. It will show you whether your content uniqueness score is within the recommended level.

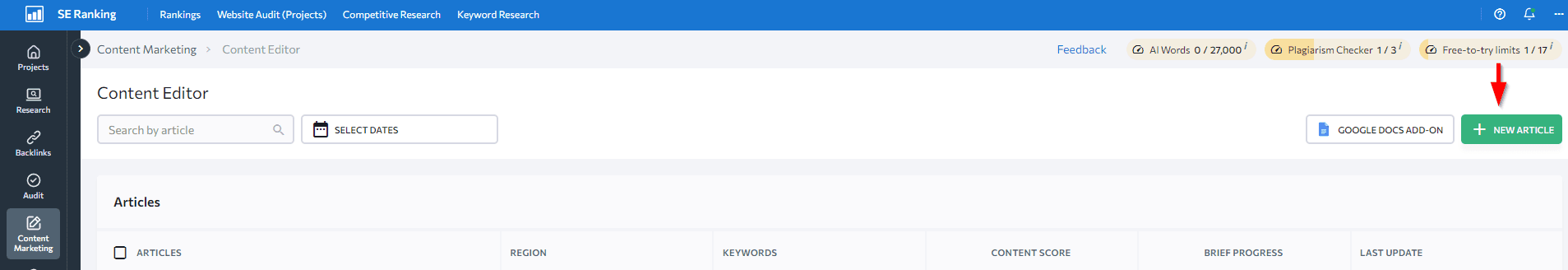

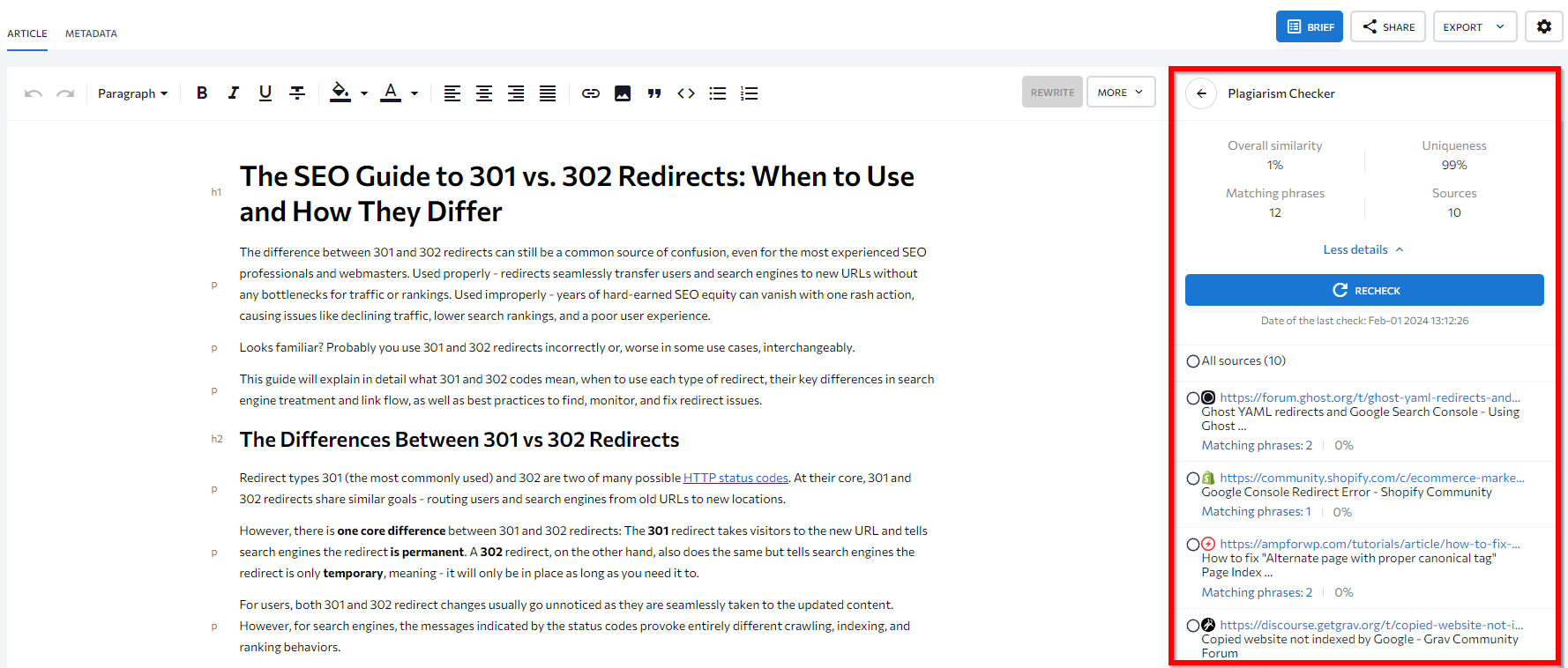

- Use SE Ranking’s AI-powered Content Editor tool to save time. It features a built-in Plagiarism Checker that scans your content and runs it through a big database to confirm that it’s the original (not copied) version. It shows the percentage of matching words, how unique the text is, the number of pages with matches, and the unique matching phrases across all competitors.

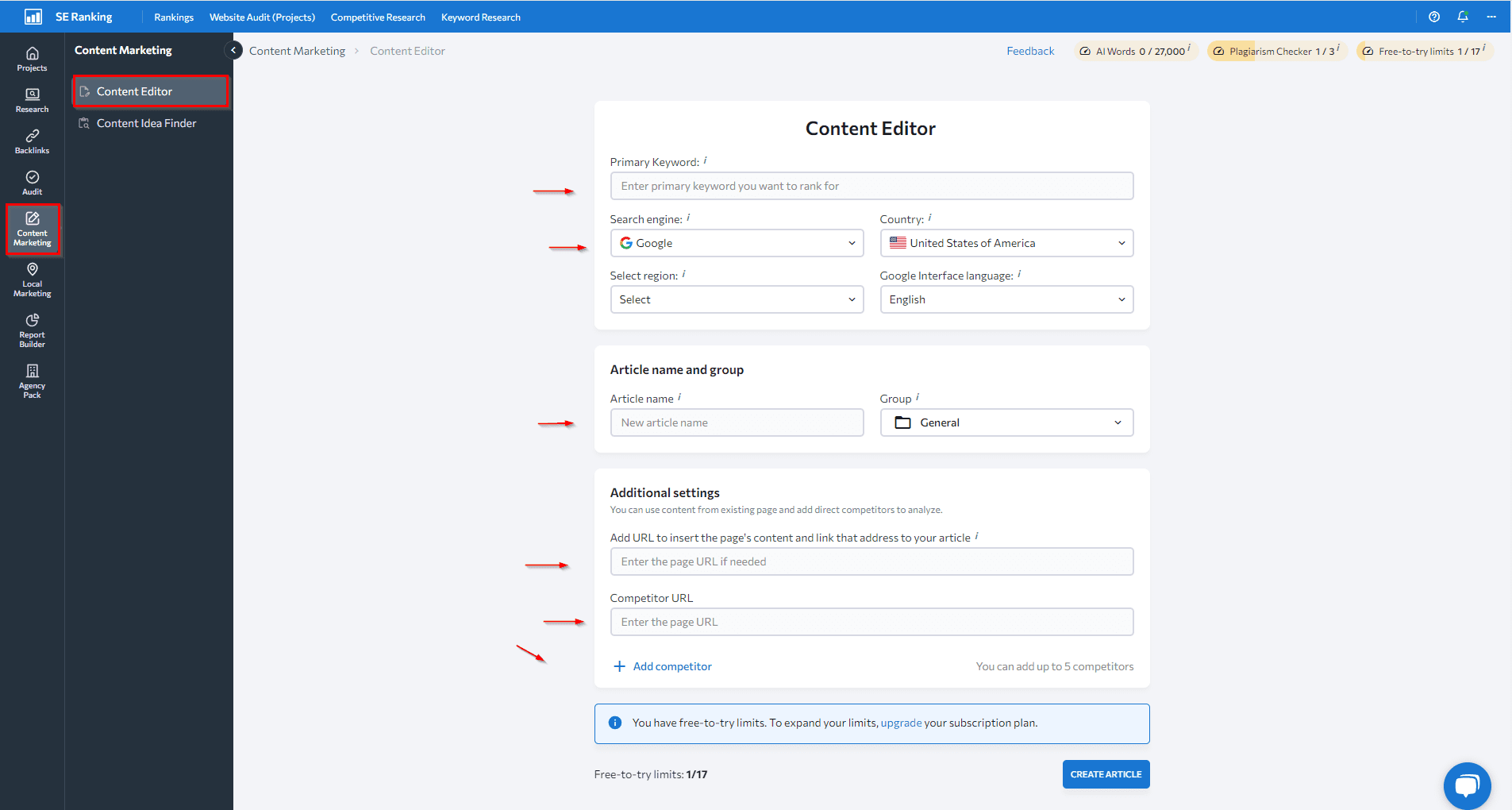

To start, find the Content Editor within the SE Ranking platform, click on the “New article” button, and specify the details of your article.

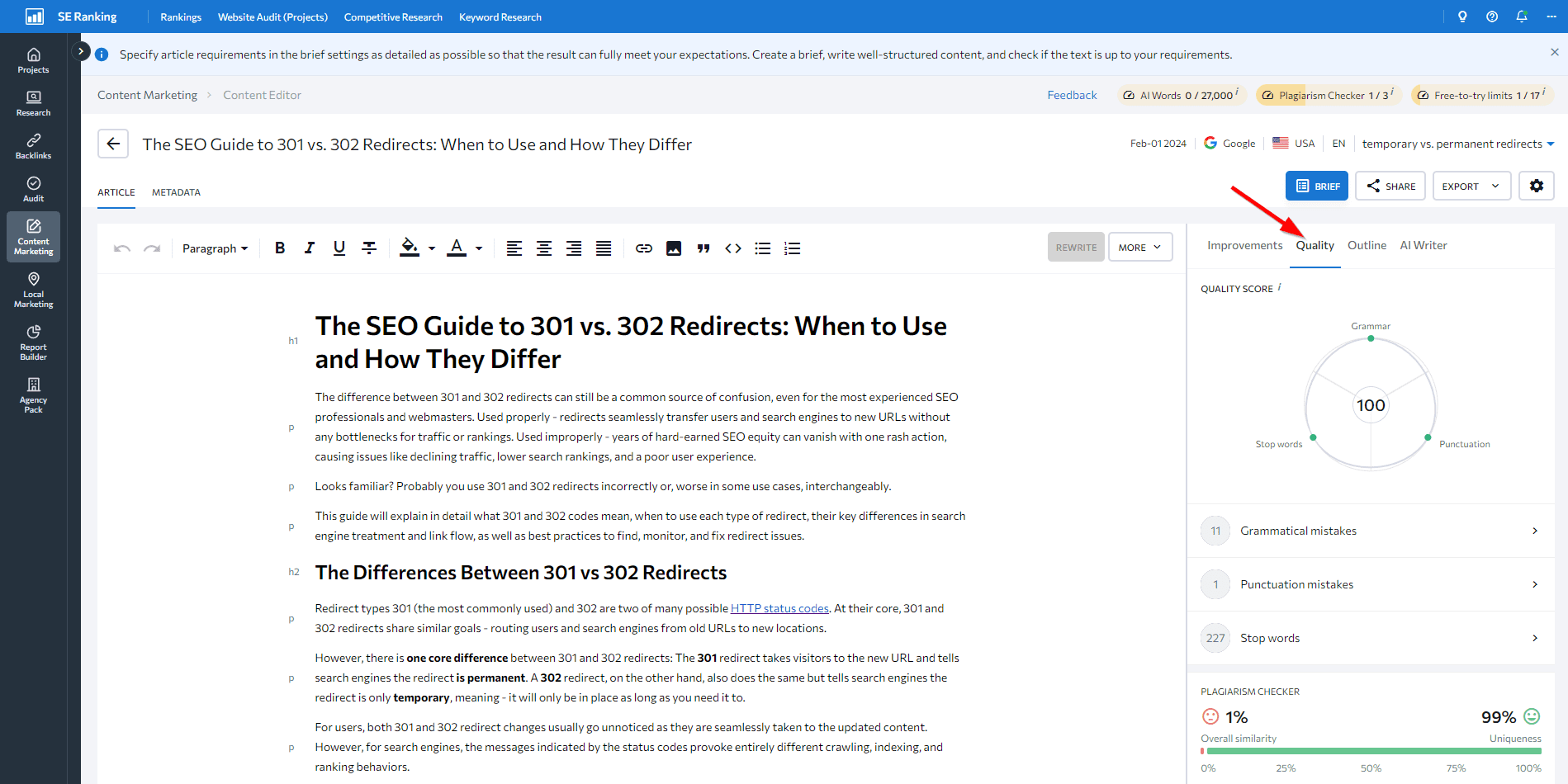

Add your article and open the Quality tab in the right sidebar.

Scroll down to Plagiarism Checker and see the details.

The most common technical reasons for duplicate content

As previously noted, unintentional duplicate content in SEO is common, mainly due to the oversight of certain technical factors. Below is a list of these issues and how to fix them.

URL parameters

Duplicate content typically happens on websites when the same or very similar content is accessible across multiple URLs. Here are two common technical ways for this to happen:

1. Filtering and sorting parameters: Many sites use URL parameters to help users filter or sort content. This can result in several pages with the same or similar content but with slight parameter variations. For example, www.store.com/shirts?color=blue and www.store.com/shirts?color=red will show blue shirts and red shirts, respectively. While users may perceive the pages differently based on their preferences, search engines might interpret them as identical.

Filter options can create a ton of combinations, especially when you have multiple choices. This is because the parameters can be rearranged. As a result, the following two URLs would end up showing the exact same content:

- www.store.com/shirts?color=blue&sort=price-asc

- www.store.com/shirts?sort=price-asc&color=blue

To prevent duplicate content issues in SEO and consolidate the authority of filtered pages, add a canonical tag to each filtered URL that points to the primary, unfiltered page. Note, however, that this won’t fix crawl budget issues. Alternatively, you can block these parameters in your robots.txt file to prevent search engines from crawling filtered versions of your pages.

2. Tracking parameters: Sites often add parameters like “utm_source” to track the source of the traffic, or “utm_campaign” to identify a specific campaign or promotion, among many other parameters. While these URLs may look unique, the content on the page will remain identical to that found in URLs without these parameters. For example, www.example.com/services and www.example.com/services?utm_source=twitter.

All URLs with tracking parameters should be canonicalized to the main version (without these parameters).
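When auditing a list of URLs, it helps to reduce each one to a normalized form so that reordered or tracked variants collapse into a single entry. The sketch below drops tracking parameters and sorts the remaining ones. The `TRACKING_PREFIXES` tuple is an assumption (only `utm_` is covered here), and whether a given filter parameter should survive normalization depends on your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: only utm_* keys are treated as tracking; extend for your own setup.
TRACKING_PREFIXES = ("utm_",)


def normalize_url(url: str) -> str:
    """Drop tracking parameters and sort the rest so equivalent URLs compare equal."""
    parts = urlsplit(url)
    params = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
    ]
    query = urlencode(sorted(params))
    # The fragment is dropped because it never reaches the server.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))


a = normalize_url("https://www.store.com/shirts?color=blue&sort=price-asc")
b = normalize_url("https://www.store.com/shirts?sort=price-asc&color=blue")
c = normalize_url("https://www.example.com/services?utm_source=twitter")
print(a == b, c)
```

URLs that normalize to the same string are candidates for sharing one canonical URL.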

Search results

Many websites have search boxes that let users filter and sort content across the site. But sometimes, when performing a site search, you may come across content on the search results page that is very similar or nearly identical to another page’s content.

For example, if you search for “content” on our blog, the content that appears is almost identical to the content on our Content category page.

This is because the search functionality tries to provide relevant results based on the user’s query. If the search term matches a category exactly, the search results may include pages from that category. This can cause duplicate or near-duplicate content issues.

To fix duplicate content, use noindex tags or block all URLs that contain search parameters in your robots.txt file. These actions tell search engines “Hey, skip over my search result pages, they are just duplicates.” Websites should also avoid linking to these pages. And since search engines try to crawl links, removing unwanted links prevents them from crawling the duplicate pages.
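Before relying on a robots.txt rule, it is worth checking that it blocks what you expect and nothing more. Here is a rough sketch using Python's standard `urllib.robotparser`. The `/search` path and the example.com URLs are assumptions for illustration. Python's parser does plain prefix matching, so the sketch avoids wildcard patterns, and real crawlers may interpret rules somewhat differently.

```python
from urllib.robotparser import RobotFileParser

# Assumption: internal search results live under /search on this hypothetical site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, user_agent: str = "*") -> bool:
    """Return True if the parsed rules allow user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://www.example.com/search?q=content"))
print(is_crawlable("https://www.example.com/blog/"))
```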

Localized site versions

Some websites have country-specific domains with the same or similar content. For example, you might have localized content for countries like the US and UK. Since the content for each locale is similar with only slight variations, the versions can be seen as duplicates.

This is a clear signal that you should set up hreflang tags. These tags help Google understand that the pages are localized variations of the same content.

Also, the chance of encountering duplicates is still high even if you use subdomains or folders (instead of domains) for your multi-regional versions. This makes it equally crucial to use hreflang for both options.
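As an illustration, the hreflang annotations for a pair of localized pages can be generated from a simple mapping of language-region codes to URLs. The codes and URLs below are placeholders. Each localized page would normally carry the full set of tags, including one that points to itself.

```python
from html import escape


def hreflang_tags(alternates: dict) -> list:
    """Build one <link rel="alternate"> tag per localized version."""
    return [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}" />'
        for lang, url in alternates.items()
    ]


# Hypothetical US and UK versions of the same page.
alternates = {
    "en-us": "https://example.com/us/",
    "en-gb": "https://example.com/uk/",
}

tags = hreflang_tags(alternates)
print("\n".join(tags))
```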

Non-www vs. www

Websites are sometimes available in two versions: example.com and www.example.com. Although they lead to the same site, search engines see these as distinct URLs. As a result, pages from both the www and non-www versions get indexed as duplicates. This duplication splits link and traffic value between the two instead of concentrating it on a preferred version. It also leads to repetitive content in search indexes.

To address this, sites should use a 301 redirect from one hostname to the other. This means either redirecting the non-www to the www version or vice versa, depending on which version is preferred.
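In practice this redirect is usually configured in the web server or CDN, but the logic is simple enough to sketch in a few lines. The hostnames below are assumptions, and the function only computes where a request should be sent. It does not issue the redirect itself.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit, urlunsplit

# Assumption: the www version is preferred on this hypothetical site.
PREFERRED_HOST = "www.example.com"
ALIAS_HOSTS = {"example.com"}


def host_redirect(url: str) -> Optional[Tuple[int, str]]:
    """Return (301, target) if url uses a non-preferred hostname, else None."""
    parts = urlsplit(url)
    if parts.hostname in ALIAS_HOSTS:
        target = urlunsplit(
            (parts.scheme, PREFERRED_HOST, parts.path, parts.query, parts.fragment)
        )
        return 301, target
    return None


print(host_redirect("https://example.com/page?x=1"))
print(host_redirect("https://www.example.com/page"))
```

Keeping the path and query string intact means each old URL lands on its exact counterpart rather than on the homepage.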

URLs with trailing slashes

Web URLs can sometimes include a trailing slash at the end:

- example.com/page/

And sometimes the slash is omitted:

- example.com/page

These are treated as separate URLs by search engines, even if they lead to the same page. So, if both versions are crawled and indexed, the content ends up being duplicated across two distinct URLs.

The best practice is to pick one URL format (with or without trailing slashes), and use it consistently across all site URLs. Configure your web server and hyperlinks to use the chosen format. Then, use 301 redirects to consolidate all relevance signals onto the selected URL style.
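A small helper can enforce whichever format you choose, for example when generating internal links or deciding whether a request needs a redirect. This sketch assumes the trailing-slash style and leaves file-like paths such as /logo.png alone. Both choices are illustrative rather than a recommendation.

```python
from urllib.parse import urlsplit, urlunsplit


def with_trailing_slash(url: str) -> str:
    """Return url with a trailing slash on the path, except for file-like paths."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    # Paths whose last segment has a dot are treated as files and left unchanged.
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def slash_redirect(url: str):
    """Return (301, target) if url is not in the chosen format, else None."""
    target = with_trailing_slash(url)
    return (301, target) if target != url else None


print(slash_redirect("https://example.com/page"))
print(slash_redirect("https://example.com/page/"))
```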

Pagination

Many websites split long lists of content (e.g., articles or products) across numbered pagination pages, such as:

- /articles/?page=2

- /articles/page/2

It’s important to ensure that pagination is not accessible through different types of URLs, such as /?page=2 and /page/2, but only through one of them (otherwise, they will be considered duplicates). It’s also a common mistake to identify paginated pages as duplicates, as Google does not view them as such.

Tag and category pages

Websites may often display products on both tag and category pages to organize content by topic.

For example:

- example.com/category/shirts/

- example.com/tag/blue-shirts/

If the category page and the tag page display a similar list of t-shirts, then the same content is duplicated across both the tag and category pages.

Tags typically offer minimal to no value for your website, so it’s best to avoid using them. Instead, you can add filters or sorting options, but be careful as they can also cause duplicates, as mentioned above. Another solution is to use noindex tags on your pages, but keep in mind that Google will still crawl them.

Indexable staging/testing environments

Many websites use separate staging or testing environments to test new code changes before deploying them for production. Staging sites often contain content that is identical or very similar to the content featured on the live site version.

But if these test URLs are publicly accessible and get crawled, search engines will index the content from both environments. This can cause the live site to compete against itself via the staging copy.

For example:

- www.site.com

- test.site.com

So, if the staging site has already been indexed, remove it from the index first. The fastest option is to make a site-removal request in Google Search Console. Alternatively, you can use HTTP authentication. This will cause Googlebot to receive a 401 code, stopping it from indexing those pages (Google does not index 4XX URLs).
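HTTP authentication is normally switched on in the web server or hosting panel, but the behavior can be sketched as a small WSGI wrapper: any request without valid credentials receives a 401 response. The `staging_app` function and the credentials are placeholders, and a real deployment should load secrets from configuration rather than hard-coding them.

```python
import base64
import hmac


def require_basic_auth(app, username: str, password: str):
    """Wrap a WSGI app so requests without valid Basic credentials get a 401."""
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    expected = f"Basic {token}"

    def protected(environ, start_response):
        supplied = environ.get("HTTP_AUTHORIZATION", "")
        # compare_digest avoids leaking information through timing differences.
        if not hmac.compare_digest(supplied.encode(), expected.encode()):
            start_response(
                "401 Unauthorized",
                [
                    ("WWW-Authenticate", 'Basic realm="staging"'),
                    ("Content-Type", "text/plain"),
                ],
            )
            return [b"Authentication required"]
        return app(environ, start_response)

    return protected


def staging_app(environ, start_response):
    """Hypothetical staging site that returns a fixed page."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"staging build"]


# Placeholder credentials for illustration only.
app = require_basic_auth(staging_app, "team", "change-me")
```

A crawler that sends no credentials only ever sees the 401 response, never the staging content.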

Other non-technical reasons for duplicate content

Duplicate content in SEO is not just caused by technical issues. There are also several non-technical factors that can lead to duplicate content and other SEO issues.

For example, other site owners may deliberately copy unique content from sites that rank high in search engines in an attempt to benefit from existing ranking signals. As mentioned earlier, scraping or republishing content without permission creates unauthorized duplicate versions that compete with the original content.

Sites may also publish guest posts or content written by freelancers that hasn’t yet been properly screened for its uniqueness score. If the writer reuses or repurposes existing content, the site may unintentionally publish duplicate versions of articles or information already available online elsewhere.

Orphan pages (those with no internal links pointing to them) can also quietly contribute to duplicate content issues, especially when forgotten or left over from staging or old site updates.

The results are typically harmful and unexpected in all of these cases.

Thankfully, the fixes are simple. Here’s how to approach them:

- Before posting guest articles or outsourced content, use plagiarism checkers to make sure they’re entirely original and not copied.

- Monitor your content for any unauthorized copying or scraping by other sites.

- Set protective measures with partners and affiliates to ensure your content is not over-republished.

- Put a DMCA badge on your website. If someone copies your content while you have the badge, the DMCA will require them to take it down for free. The DMCA also provides tools to help you find your plagiarized content on other websites. They will quickly remove any copied text, images, or videos.

How to avoid duplicate content

When creating a website, make sure you have appropriate procedures in place to prevent duplicate content from appearing on (or in relation to) your site.

For example, you can prevent unnecessary URLs from being crawled with the help of the robots.txt file. Note, however, that you should always check it (e.g., with our free Robots.txt Tester). This will keep robots.txt from closing off important pages to search crawlers.

Also, you should close off unnecessary pages from being indexed with the help of <meta name="robots" content="noindex"> or the X-Robots-Tag: noindex in the server response. These are the easiest and most common ways to avoid problems with duplicate page indexing.
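To confirm that a page really carries one of these signals, you can inspect its HTML and response headers. The following sketch uses Python's built-in HTML parser and handles only the simple forms shown above. It ignores crawler-specific variants (such as a header value prefixed with a bot name), and the sample inputs are made up.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def is_noindexed(html: str, headers: dict) -> bool:
    """Return True if the meta robots tag or X-Robots-Tag header says noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value
    header_directives = {part.strip().lower() for part in header_value.split(",")}
    return "noindex" in parser.directives or "noindex" in header_directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed(page, {}))
print(is_noindexed("<p>Hello</p>", {"X-Robots-Tag": "noindex"}))
```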

Important! If search engines have already seen the duplicate pages, and you’re using the canonical tag (or the noindex directive) to fix duplicate content problems, wait until search robots recrawl those pages. Only then should you block them in the robots.txt file. Otherwise, the crawler won’t see the canonical tag or the noindex directive.
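One way to catch this conflict early is to test the URLs that carry a canonical tag or noindex directive against your robots.txt rules. The sketch below flags any such URL that crawlers are not allowed to fetch. The rules and URLs are hypothetical, and Python's `urllib.robotparser` uses simple prefix matching, so treat the result as a first check rather than a guarantee of how a given search engine behaves.

```python
from urllib.robotparser import RobotFileParser


def hidden_directives(robots_txt: str, urls: list, user_agent: str = "*") -> list:
    """Return the URLs whose on-page directives are hidden by a robots.txt block."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules, plus pages that rely on a canonical tag or noindex.
robots_txt = "User-agent: *\nDisallow: /tag/\n"
pages_with_directives = [
    "https://example.com/tag/blue-shirts/",
    "https://example.com/shirts/",
]

blocked = hidden_directives(robots_txt, pages_with_directives)
print(blocked)
```

Any URL in the result should stay crawlable until search robots have revisited it and picked up the directive.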

Eliminating duplicates is non-negotiable

The consequences of duplicate content in SEO are many. They can cause serious harm to websites, so you shouldn’t underestimate their impact. By understanding where the problem comes from, you can keep your web pages under control and avoid duplicates. If you do have duplicate pages, it’s crucial to take timely action. Performing website audits, setting target URLs for keywords, and doing regular ranking checks help you spot the issue as soon as it happens.

Have you ever struggled with duplicate pages? How have you managed the situation? Share your experience with us in the comments section below!