ChatGPT vs Perplexity vs Google vs Bing: Which AI search engine generates the best answers?

Although AI search engines are becoming more popular, with many new models launched, the quality and reliability of their responses vary.

In this research, we compare responses generated by ChatGPT with the search feature enabled (formerly SearchGPT), Perplexity, Google AI Overviews (AIOs), and Bing Search AI powered by Copilot. We looked at the similarities and differences in their answers and examined how each AI search engine selects sources to cite.

The data used for research:

- Keywords: 2,000 keywords from 20 niches (100 per industry)

- Analysis Location: United States

- Analysis Period: Feb 26, 2025 – March 3, 2025

The selected queries triggered the following response counts:

- Perplexity: 1,999 answers (99.95%)

- ChatGPT: 1,998 answers (99.90%)

- Bing Copilot: 1,452 answers (72.60%)

- Google AI Overviews: 1,163 answers (58.15%)

See more details on our brief methodology at the end of this article.

These findings aim to help SEO professionals and content creators better align their optimization strategies with each AI search tool’s unique characteristics.

Let’s move on to the research results below.


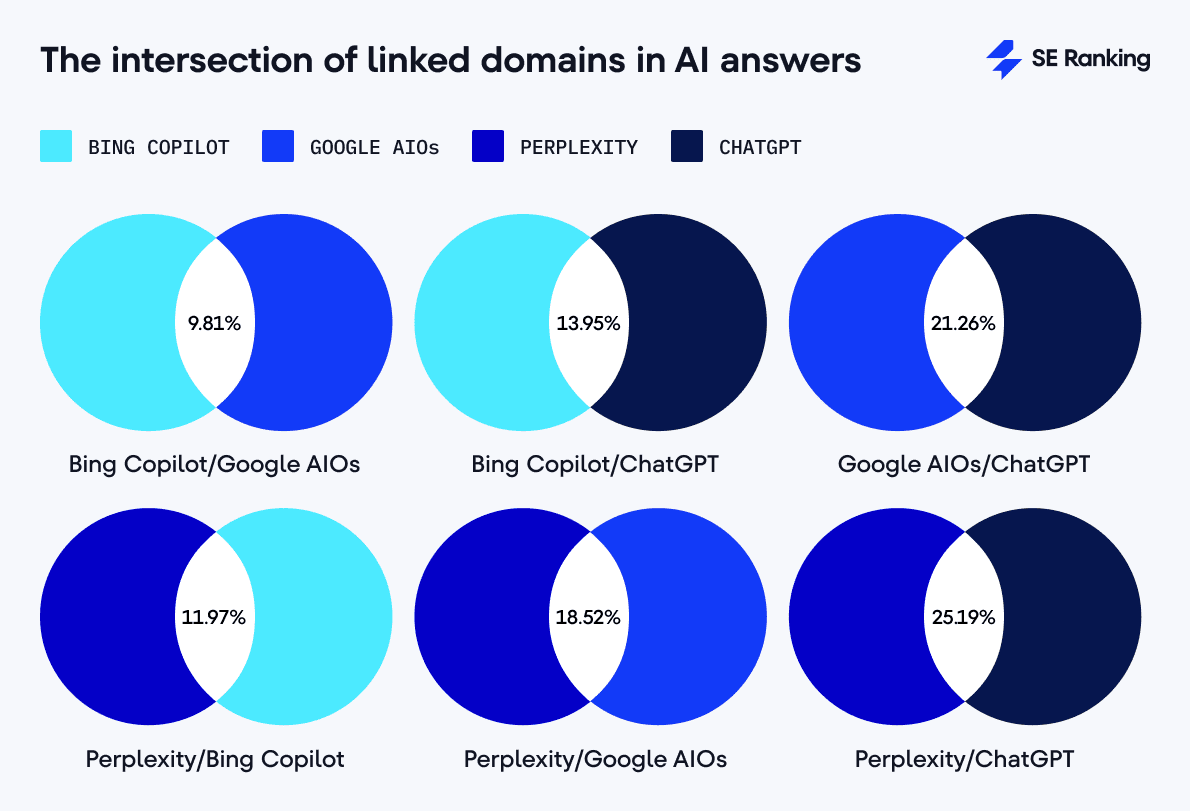

Perplexity and ChatGPT have the highest overlap of referenced domains, having 25.19% of cited domains in common. Google AIO and ChatGPT also demonstrate high overlap (21.26%). Bing Copilot has the lowest overlap of cited domains (9.81% of intersection with Google AIOs, 11.97% with Perplexity, and 13.95% with ChatGPT).


Bing Copilot often sources domains less than 5 years old (18.85%). Google’s AIO feature cites older domains the most (49.21% are over 15 years old). ChatGPT favors older domains (45.8% are over 15 years old) but also frequently includes newer sources (11.99% are less than 5 years old). This suggests that younger websites are more likely to get traffic from Bing and ChatGPT than from AIOs. Perplexity most often cites domains that are 10-15 years old (26.16%).


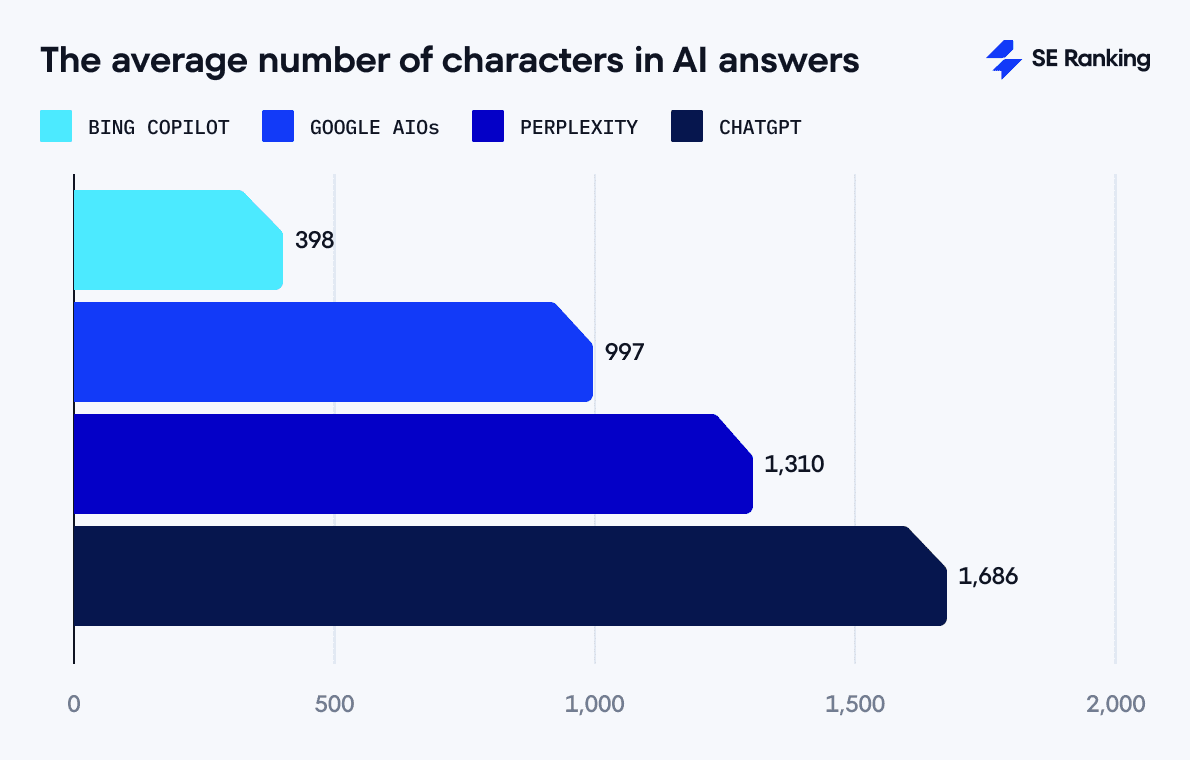

Bing Copilot generates the shortest average responses (398 characters) but uses the most diverse vocabulary. Its answers are straightforward, rarely include complex structures, and are moderately subjective (0.45). It has the fewest supporting links on average compared to other AI search engines (3.13 links). Content shown by Bing Copilot is moderately similar to that of Perplexity (0.57) and ChatGPT (0.56).


Google AIO responses are medium in length (997 characters on average) and, like Bing, have low grammatical complexity. AIOs repeat words often, suggesting limited vocabulary. AIOs use moderately opinionated language (0.48) and tend to cite many sources to support their answers (9.26 links on average). AIO answers are the least like other AI search engine answers (0.48).


Perplexity generates moderately long responses (1,310 characters on average) but frequently repeats information. It produces the most subjective content (0.50) and demonstrates the highest grammatical complexity. It is also the most consistent in average referencing behavior (5.01 links), with five links in nearly all responses.


ChatGPT produces the longest answers (1,686 characters on average), with a relatively diverse vocabulary and the most fact-based tone (0.44). It often uses grammatically complex sentence structures and has the highest average number of links per response (10.42 links).


Perplexity and ChatGPT give similar responses. Apart from answer length and tone of voice, they also demonstrate the highest semantic similarity (0.82). Both also frequently link to pages with minimal traffic (44.88% of cases in Perplexity and 47.31% in ChatGPT).


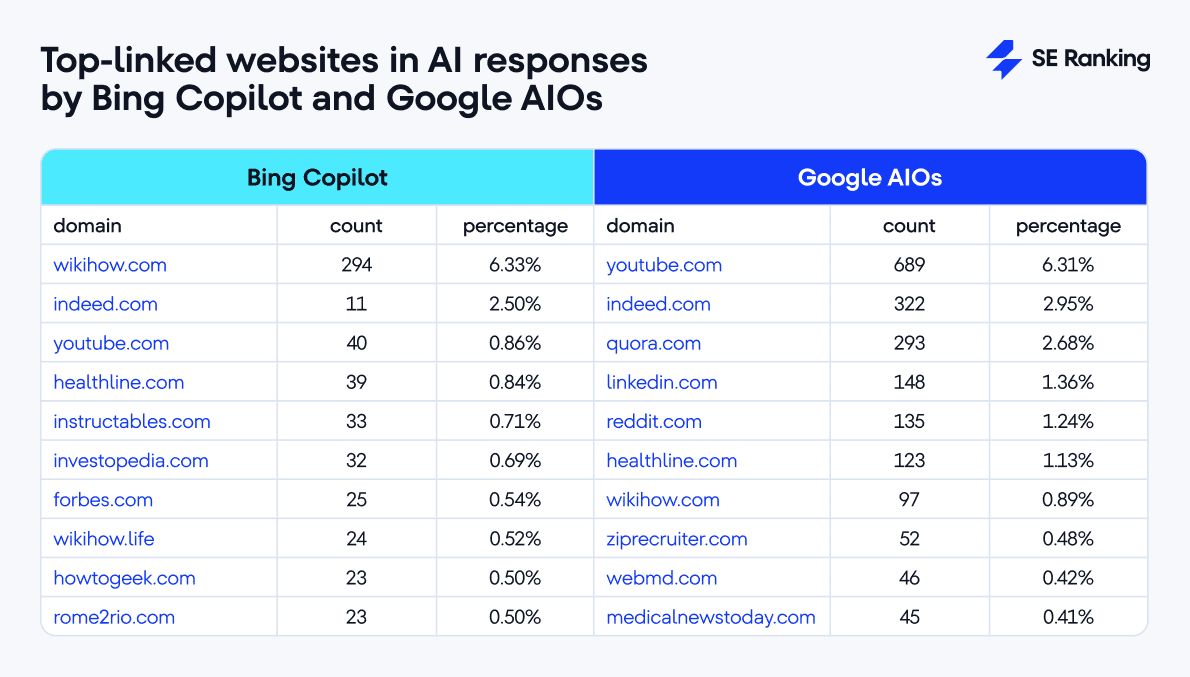

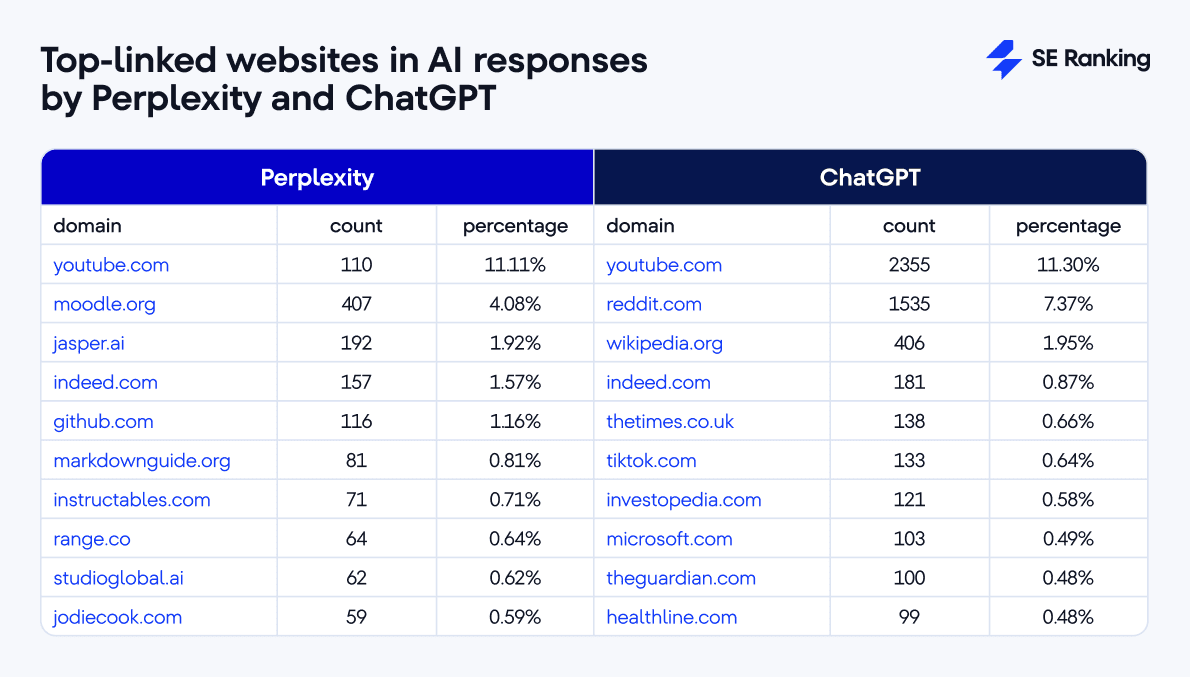

YouTube is the most linked-to website in responses produced by ChatGPT (11.30%), Perplexity (11.11%), and AIOs (6.31%). Bing Copilot only refers to YouTube 0.86% of the time. Its number one source is WikiHow (6.33%).

Disclaimer:

This research explores how AI search engines, like Google AI Overviews, Bing Copilot, ChatGPT, and Perplexity (both with search feature enabled), respond to user queries and select sources to back up their answers.

Note that factors like keywords and analysis timeframes can influence results. The check was run before the Google Core Update ended. After this update, SEO specialists noticed that AIOs began to appear for about 80% of queries.

This is our interpretation, but other valid conclusions may be drawn.

Source analysis: Link count, cited websites, domain overlap, and more

AI response link count

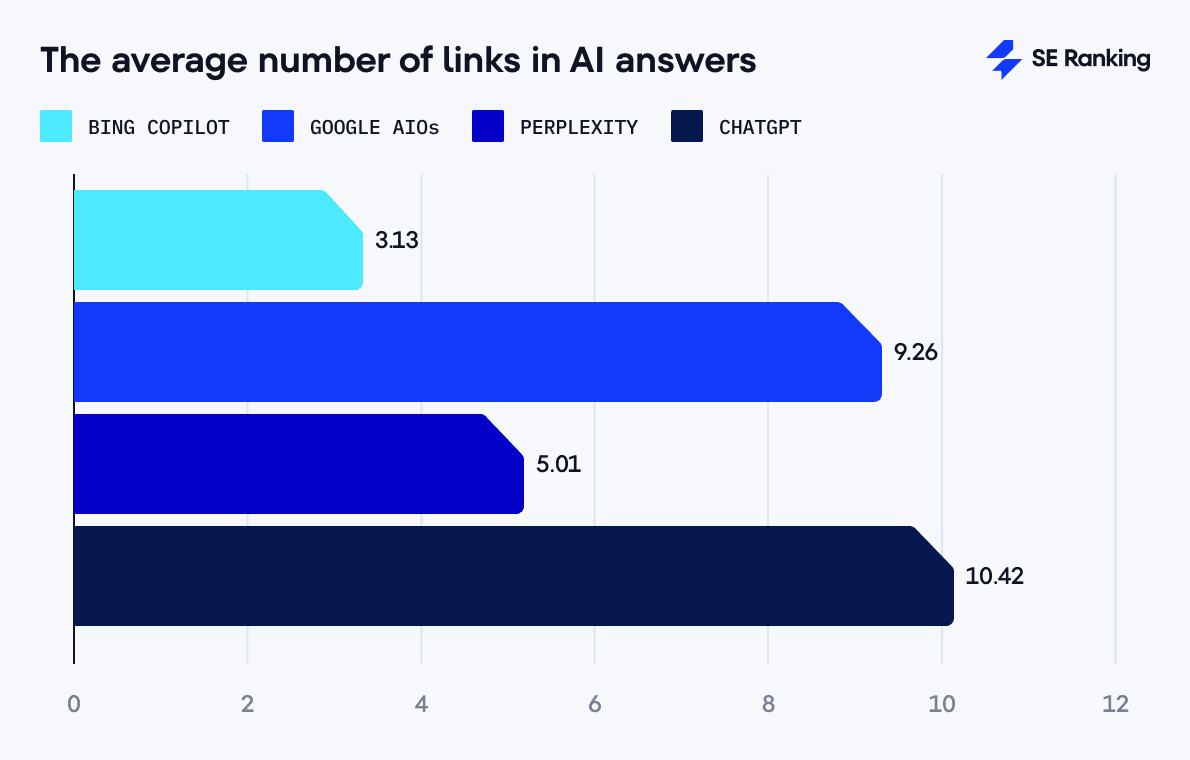

The four AI tools include very different average numbers of links in their responses:

- ChatGPT: 10.42 links per response. This is the highest number of references, indicating thoroughly supported content.

- Google AIOs: 9.26 links per response. Google also heavily references sources to provide verifiable answers.

- Perplexity: 5.01 links per response. Perplexity uses references to back its responses to a moderate extent.

- Bing Copilot: 3.13 links per response. Bing uses the fewest references and provides the shortest responses (398 characters).

Not surprisingly, the tools producing the longest (ChatGPT, 1,686 characters) and shortest answers (Bing, 398 characters) also include the most and least references. But the same can’t be said about Google’s AIOs and Perplexity. Although AIOs generate shorter responses than Perplexity (997 compared to 1,310 characters), they use almost twice as many links to validate responses (9.26 compared to 5.01).

We cover AI answer length in more detail later on in this study, in the Content Analysis section.

Correlation between AI response length and source count

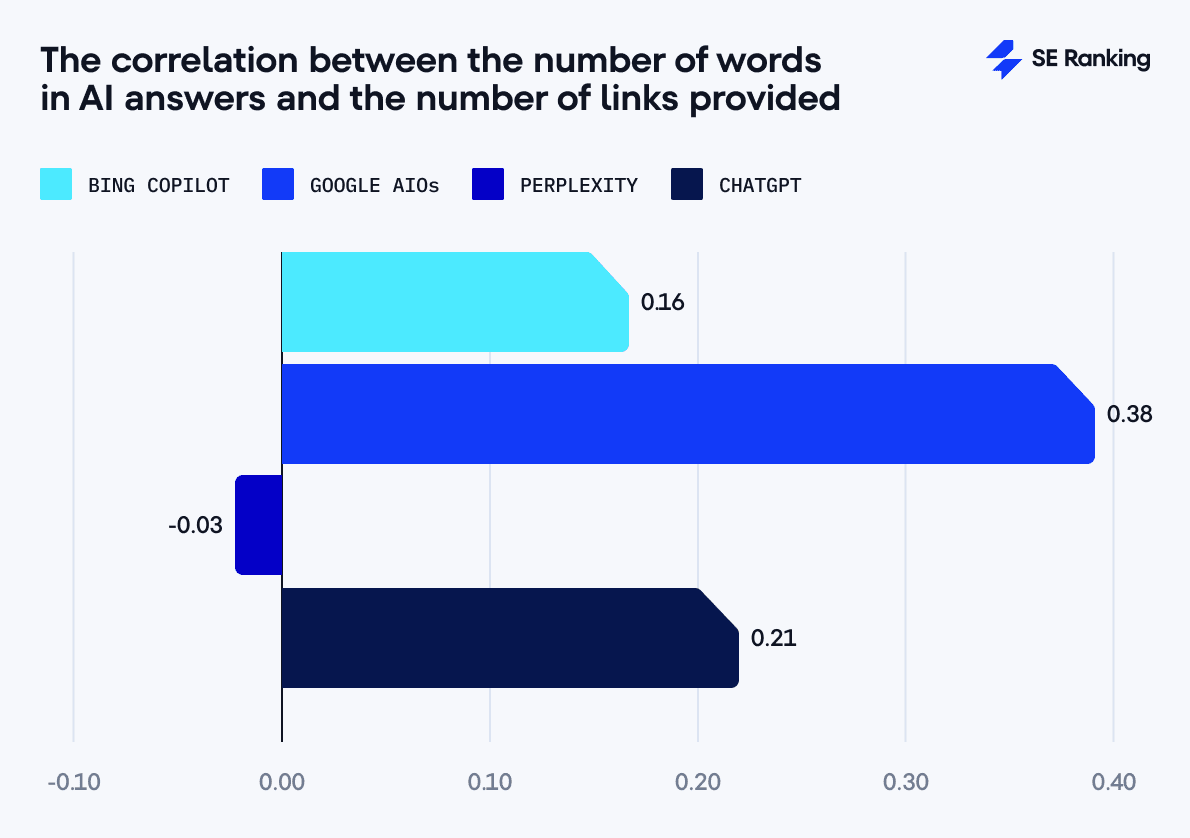

We used the Pearson correlation coefficient to measure the relationship between response length (in words) and the number of links in each answer. This coefficient measures the strength and direction of the linear relationship between two variables. A positive value suggests that longer answers tend to have more links.

The Pearson correlation coefficient is always between -1 and +1, where:

- +1 means a perfect positive relationship, where the variables increase together.

- 0 means no linear relationship.

- -1 means a perfect negative relationship, where one variable goes up while the other goes down.

Here are the average correlation coefficients of our dataset:

- Bing Copilot: 0.16, weak positive correlation

- Google AIO: 0.38, moderate positive correlation

- Perplexity: -0.03, no significant correlation

- ChatGPT: 0.21, weak positive correlation

Although AIOs show the highest correlation value (0.38), this is still only a moderate positive correlation between the answer length and number of links provided.

ChatGPT is second with a weaker positive correlation (0.21). This suggests some connection between answer length and reference count, but not as strong a connection as AIOs.

Bing Copilot also demonstrates a weak correlation (0.16), while Perplexity shows no significant connection (-0.03). Perplexity maintains a highly consistent number of links in its answers, typically citing five sources. AI Overviews display a much wider range, with their link count varying from as few as two to as many as 53.
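For readers who want to run a similar check on their own data, here is a minimal Python sketch of the standard Pearson formula. It is an illustration, not the code used in this study: the function name `pearson` and the sample numbers are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 items)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        # The coefficient is undefined when one variable never changes.
        return float("nan")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical sample: response lengths (in words) and links per response.
lengths = [80, 150, 220, 300, 410]
links = [2, 3, 3, 5, 6]
print(round(pearson(lengths, links), 2))
```

Note the edge case: if a tool cites exactly the same number of links in every response, the link count has zero variance and the coefficient is undefined. A nearly constant link count, like Perplexity's, leaves very little variation for response length to correlate with.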

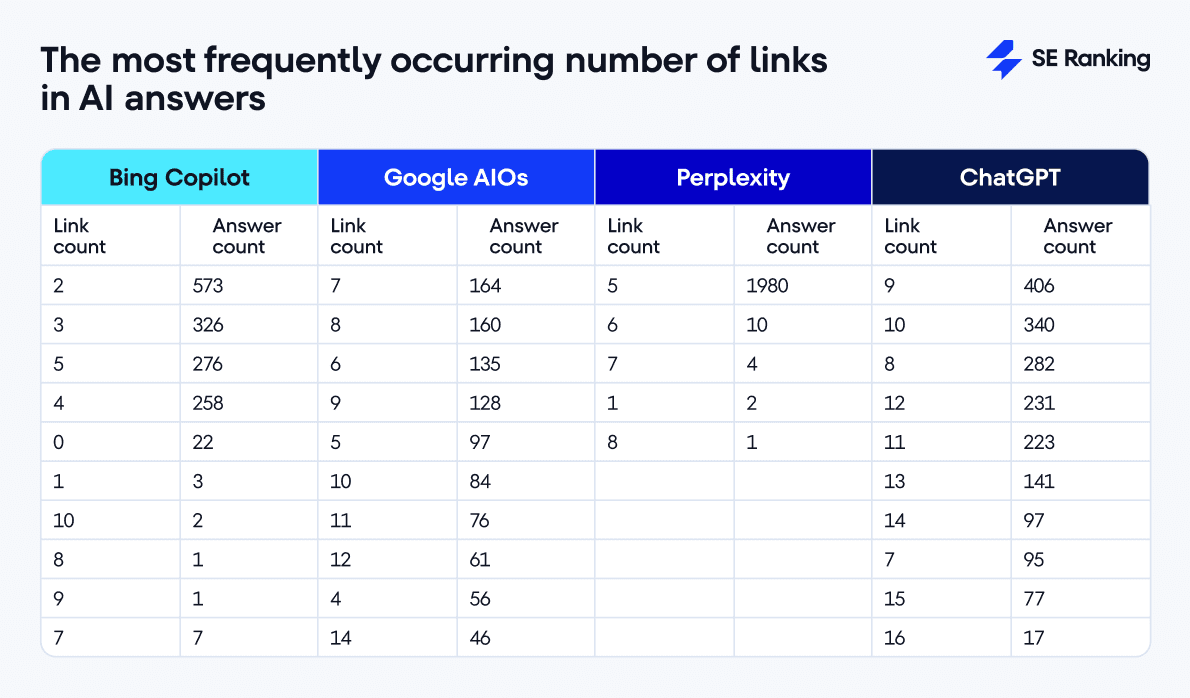

The most frequently occurring link count in AI answers

Bing Copilot takes a minimalist approach to citing sources. It typically uses 2 links (573 cases), followed by 3 links (326 cases), and then 5 links (276 cases). Bing Copilot favors concise answers backed by just enough sources to get the job done. In 22 instances, it skips links entirely, likely drawing solely from its internal knowledge base.

Google AIO has a wider distribution in link count, peaking at 7 references (164 cases), followed by 8 references (160 cases) and 6 references (135 cases). Google uses more sources than Bing Copilot, reflecting its more detailed approach to content support.

ChatGPT tends to feature 9 sources (406 cases), 10 sources (340 cases), and 8 sources (282 cases). In some cases, the link count reached 24. This means ChatGPT prefers comprehensive answers.

Perplexity demonstrates the most homogeneous distribution, with most responses (1,980 cases) including exactly 5 sources. This suggests a clearly defined information-referencing strategy, in contrast to other tools’ more diverse referencing approaches.
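A frequency count like the one above is straightforward to reproduce. The sketch below is a hypothetical illustration (the function name and sample data are assumptions, not part of our tooling) that tallies how often each link count occurs across a tool's responses.

```python
from collections import Counter

def most_common_link_counts(link_counts, top_n=3):
    """Return the top_n most frequent link counts as (link_count, responses) pairs."""
    return Counter(link_counts).most_common(top_n)

# Hypothetical per-response link counts for one tool.
sample = [2, 2, 3, 5, 2, 3, 0]
print(most_common_link_counts(sample))
```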

Traffic, keywords, backlinks, and referring domains of URLs cited in AI responses

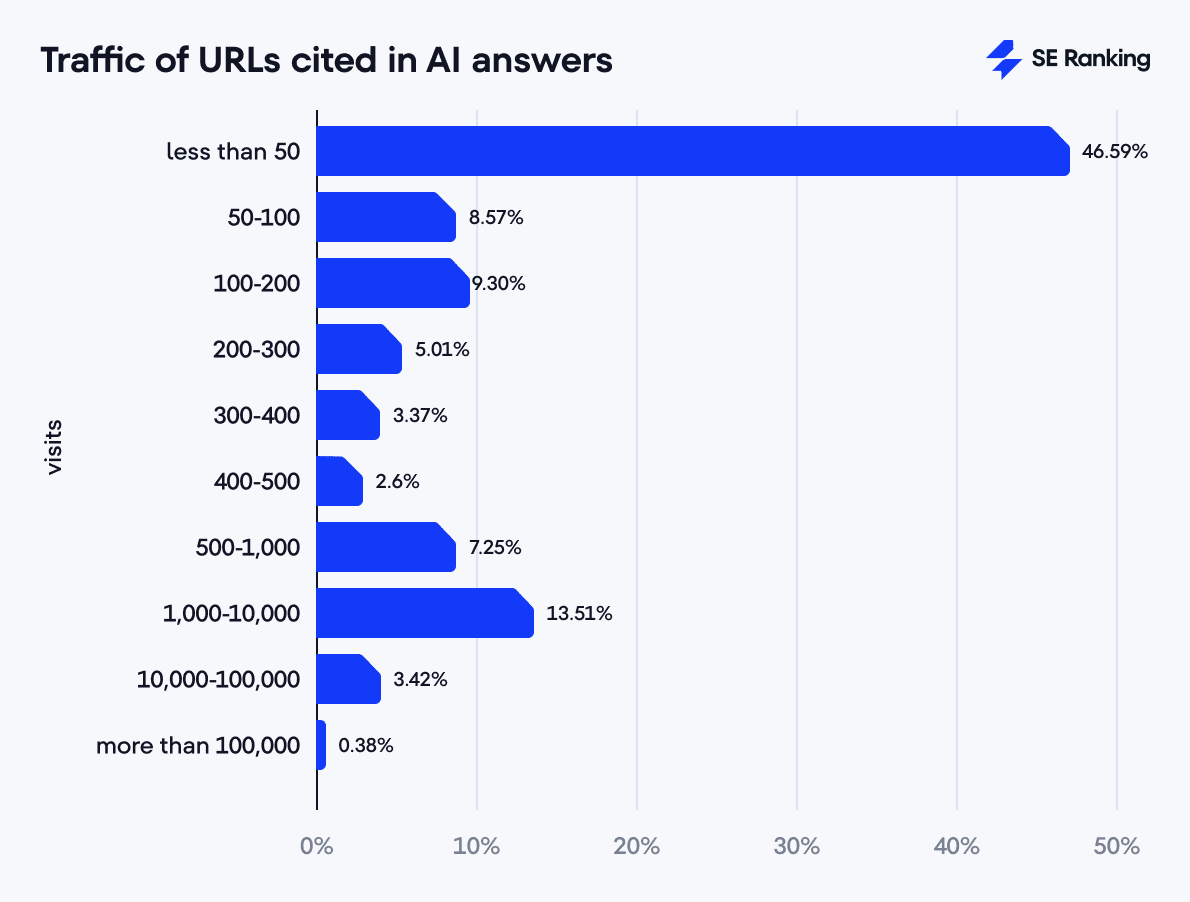

We used SE Ranking’s Competitor Analysis Tools to analyze links featured as sources in AI answers. Out of the 46,299 total links appearing in AI answers across all tools, we have organic traffic data for 33,508 URLs (72.37%). 29,271 of these 33,508 URLs (87.36%) have non-zero organic traffic from regular Google search, and 4,237 URLs (12.64%) receive no traffic from Google.

The traffic distribution shows that almost half of the links (15,613 URLs or 46.59%) get little traffic, from 0 to 50 visits. 4,237 of these URLs have zero traffic. 13.51% of URLs receive between 1,000 and 10,000 visits. Only 0.38% of URLs have extremely high traffic, with more than 100,000 visits.

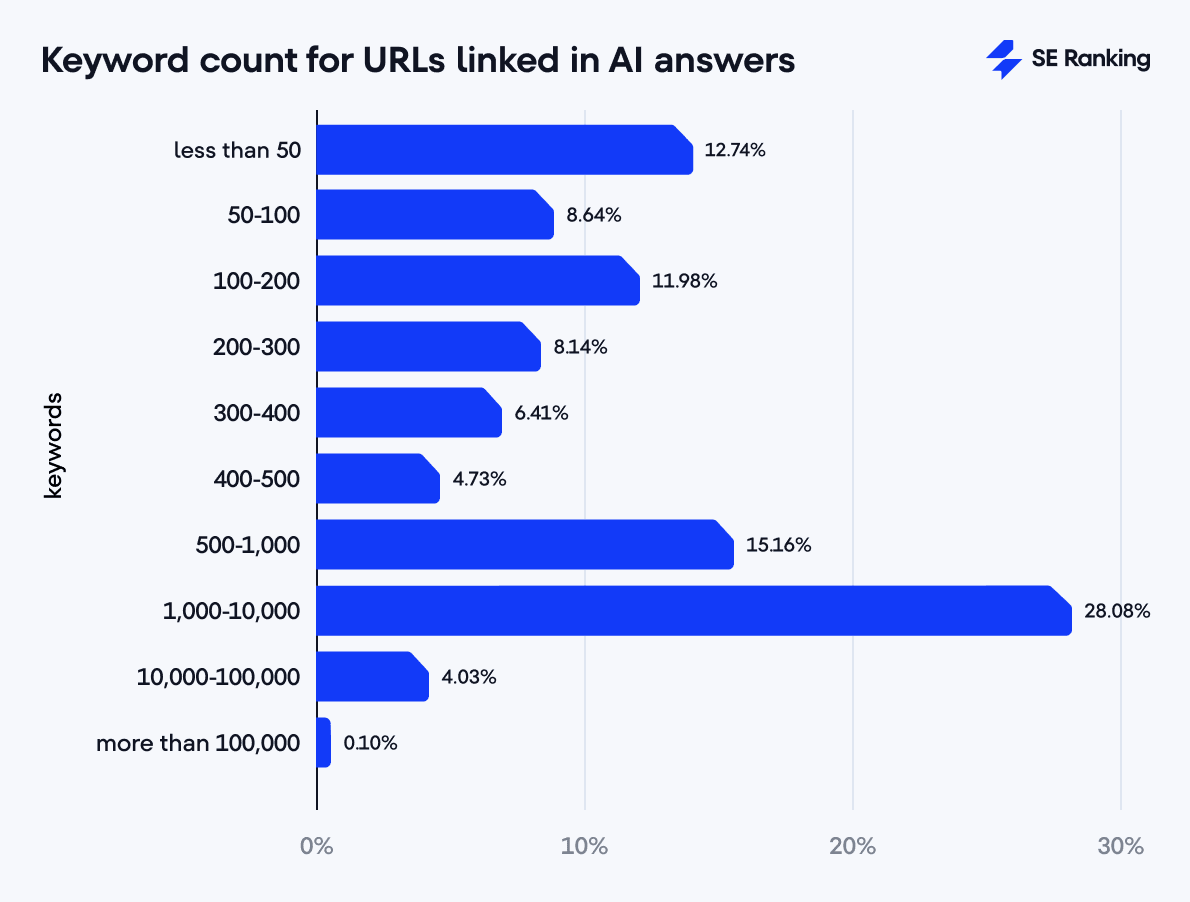

As for keywords, 28.08% of URLs rank in regular Google search for 1,000-10,000 keywords. 12.74% of URLs rank for only a few keywords (0-50 queries). This is typically highly specialized content.

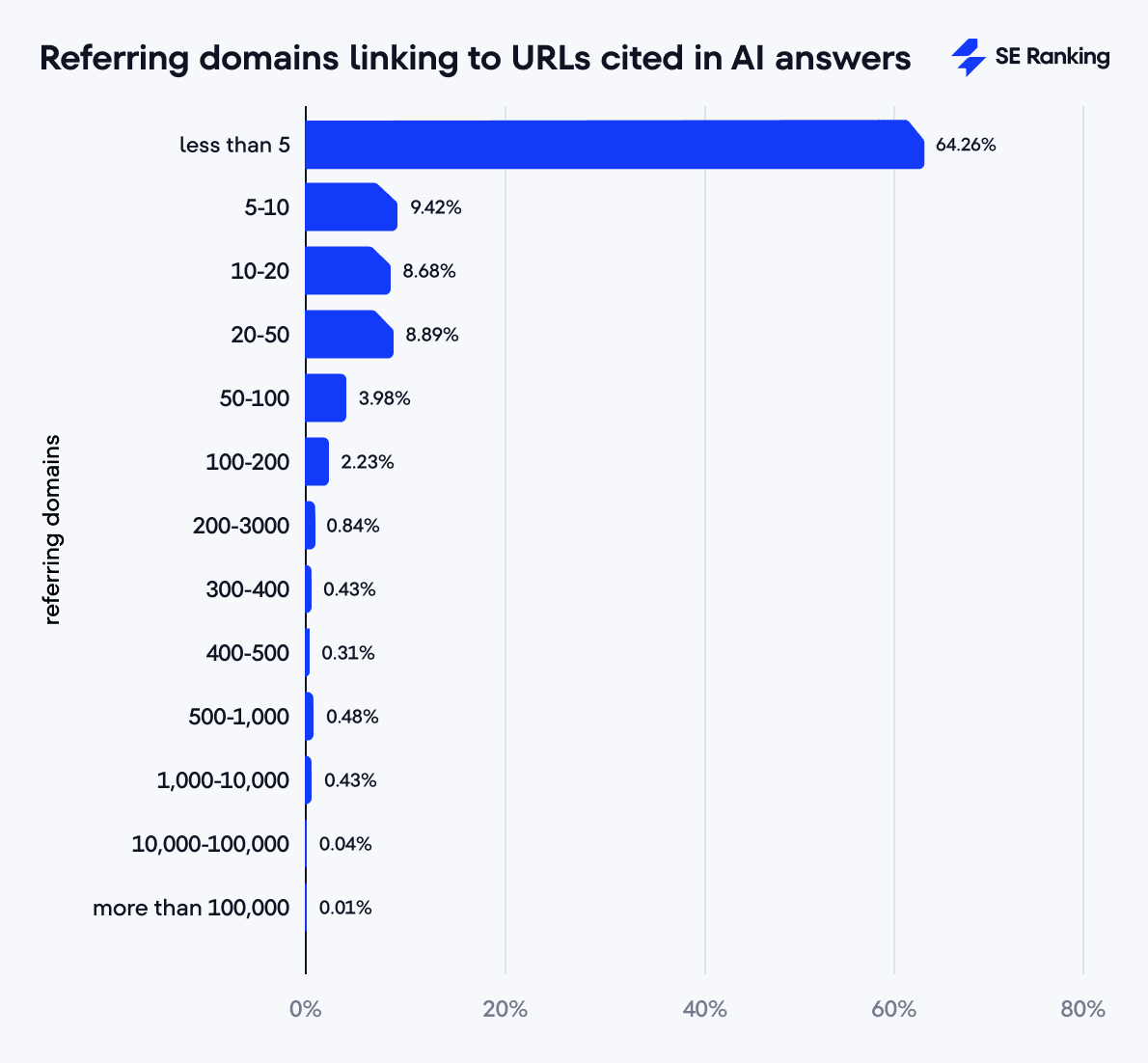

The referring domain data provided by our Backlink Checker shows that most URLs (64.26%) have up to 5 referring domains. This makes sense given that the selected keywords tend to trigger informational content like blog posts. Most of the linked sources have limited traffic as well. A decent share of URLs also have 5 to 50 referring domains (27%).

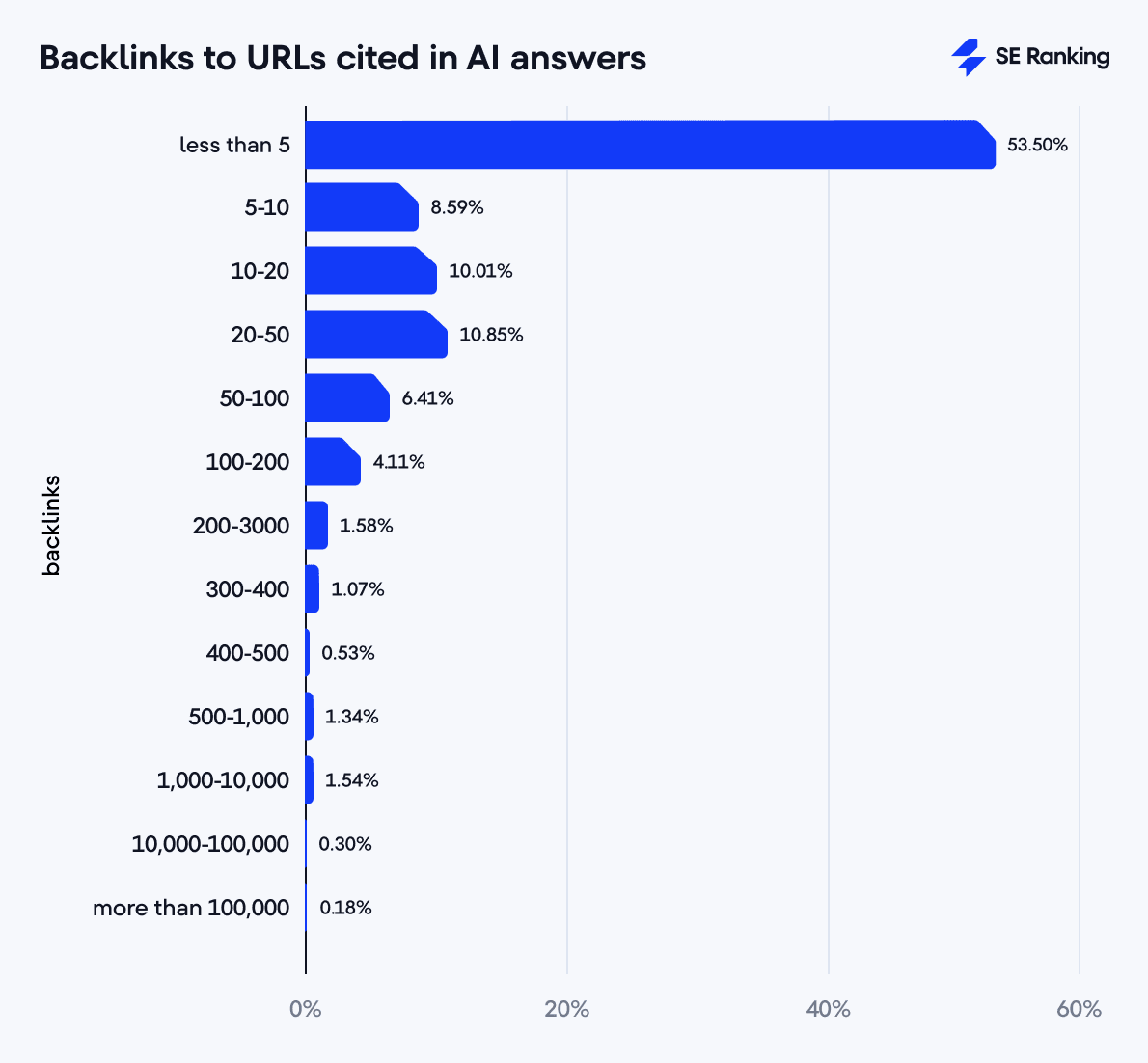

The backlink distribution follows a similar pattern. 53.50% of URLs have between 0 and 5 backlinks, and 29.45% have between 5 and 50 backlinks. But keep in mind that only 75.67% of all URLs (35,043 out of 46,299) have backlinks and referring domain data available.

Most URLs cited by the AI search engines we studied receive traffic from regular organic Google results, but many have limited traffic. This suggests that AI search engines cite both popular and lesser-known sources, meaning they might prioritize content relevance over traditional SEO metrics. But it’s also possible that our keyword sample is influencing the results.

We also observed a clear gap between high-performing URLs (with substantial traffic, keywords, and backlinks) and those with minimal performance. This indicates a bimodal distribution: a small group of “elite” URLs with high metrics and a much larger group with more modest performance.

Traffic, keywords, backlinks, and referring domains of URLs cited by ChatGPT and Perplexity

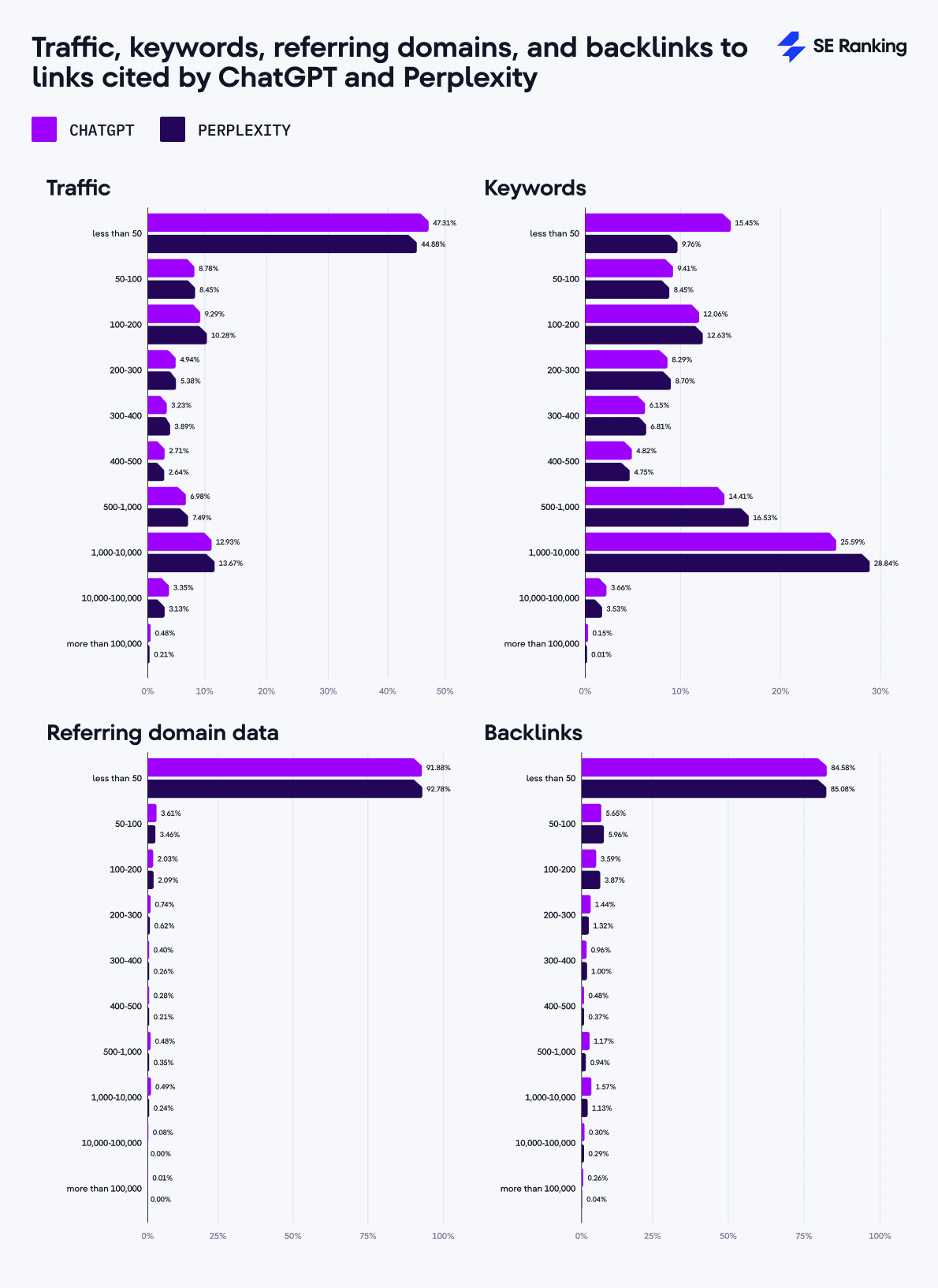

According to our data sample, ChatGPT referenced 20,811 links in total. We have data on 15,520 of them (74.57%), where 13,564 (87.40%) have non-zero traffic from Google.

The largest share of linked pages (47.31%) has minimal traffic (up to 50 visits), and pages with high traffic (100,000+ visits) account for only 0.48%.

As for the number of keywords these sources rank for in Google:

- 15.45% of pages rank for up to 50 keywords

- 25.59% of pages rank for 1,000-10,000 keywords

- 0.15% of pages rank for more than 100,000 keywords

Perplexity shows a slightly different picture, with 9,999 links in total. We have data for 6,714 (67.15%) of them, where 6,022 (89.69%) have non-zero traffic from Google.

Like ChatGPT, the largest share of links in Perplexity’s answers (44.88%) has minimal traffic.

In terms of keywords linked pages rank for:

- 9.76% of linked pages rank for a minimum number of keywords, up to 50

- 28.84% of linked pages rank for 1,000-10,000 keywords

- 0.01% of linked pages rank for more than 100,000 keywords

1,956 URLs (12.60%) cited by ChatGPT and 692 links (10.30%) cited by Perplexity receive no traffic from Google, despite both AI search engines deeming them relevant. This suggests that AI search engines can identify and prioritize useful sources that traditional search engines overlook.

Both AI systems source URLs with few referring domains: 92.78% of pages cited by Perplexity and 91.88% of pages cited by ChatGPT have fewer than 50 referring domains.

Top-linked websites in AI responses

YouTube dominates across ChatGPT (11.3%), Perplexity (11.11%), and Google AIOs (6.31%). These platforms prefer video content as supporting material, while Bing Copilot only cites YouTube 0.86% of the time.

Bing Copilot links most to WikiHow (6.33%). This correlates with its strategy of seeking practical, actionable, and step-by-step answers. Bing Copilot and Google AIOs also frequently link to Indeed (2.5%) for jobs and career-related content.

Google’s AIO feature is the only platform that includes LinkedIn (2.77%) in its list of top 10 linked websites. This suggests that Google prefers professional content. Google also cites community sources like Quora or Reddit (2.68% and 1.24%), adding user-driven experiences and collective knowledge to its responses.

Perplexity links to Moodle (4.08%) more than any website except YouTube (11.11%). It’s the only AI platform that references Moodle, which suggests that Perplexity prioritizes educational content and learning materials. Perplexity also references specialized websites like GitHub, Instructables, and Markdown Guide to support specific queries. In addition, 20% of the domains in Perplexity’s top 10 most linked-to websites are directly related to AI (Jasper.ai, Studioglobal.ai).

ChatGPT focuses on user-generated content more than any other AI in this list. Its 10 most linked-to websites include YouTube (11.3%), Reddit (7.37%), Wikipedia.org (1.95%), and TikTok (just under 1%). It also cites reputable news sources like The Times (0.66%) or The Guardian (0.48%) and professional platforms like Investopedia (0.58%) and Microsoft (0.49%).

When comparing domain distributions across tools, ChatGPT and Perplexity show a greater concentration on a limited set of domains, while Google and Bing demonstrate a more even distribution.

The top 3 most linked-to domains in Perplexity and ChatGPT make up 17.07% and 20.63% of all cited sources, respectively. In Google AIOs, this share is 12.03%, while in Bing Copilot, it’s only 9.69%. This suggests different approaches to ranking and evaluating source relevance.
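The concentration figure above is the share of all citations that go to a tool's most-cited domains. Here is a minimal sketch of that calculation; the function name `top_n_share` and the sample list are hypothetical.

```python
from collections import Counter

def top_n_share(cited_domains, n=3):
    """Percentage of all citations that go to the n most-cited domains."""
    if not cited_domains:
        return 0.0
    counts = Counter(cited_domains)
    top_total = sum(count for _, count in counts.most_common(n))
    return 100 * top_total / len(cited_domains)

# Hypothetical citation list: one entry per cited link.
citations = (
    ["youtube.com"] * 4
    + ["reddit.com"] * 2
    + ["wikipedia.org"]
    + ["x.com", "y.com", "z.com"]
)
print(top_n_share(citations))
```

A higher value means the tool leans on a small set of domains; a lower value means its citations are spread more evenly.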

References of Reddit, Quora, LinkedIn, Wikipedia, and YouTube in AI answers

YouTube is by far the most cited domain across all analyzed tools. It is cited especially often in responses produced by ChatGPT (11.30%) and Perplexity (11.11%).

AI Overviews frequently suggest YouTube videos, even pointing you to exact time stamps, but you can’t watch them directly from the SERP. You need to visit YouTube.com instead. ChatGPT also includes media content ‘For a visual demonstration’ in its responses. Unlike Google’s AI Overviews, it lets users watch videos directly from the chat interface.

YouTube also receives the most citations in niches related to entertainment and education:

- Games: 475 citations in total

- Hobbies and Leisure: 434 citations in total

- Vehicles: 391 citations in total

- Sports: 348 citations in total

Reddit is cited most by ChatGPT (1,535 times). It is also in the top five linked-to websites in Google AIOs, but the overall count isn’t as impressive (135 times). In each analyzed niche, ChatGPT cites Reddit between 28 and 101 times, with the highest rates in Home and Garden (101 citations), Hobbies and Leisure (97 citations), and Sports (95 citations).

Wikipedia shows a fairly even distribution of citations across tools, although ChatGPT still references this domain most frequently. Wikipedia receives the most citations in niches related to objective knowledge:

- Finance: 38 citations from ChatGPT

- Heavy Industry and Engineering: 39 citations from ChatGPT

- Science and Education: 28 citations from ChatGPT

Quora is almost exclusively cited by Google AIO, with the highest rates in Community and Society (55 citations), Lifestyle (35 citations), and Home and Garden (24 citations). Other tools rarely cite this domain.

LinkedIn has unique citation patterns. Google AIO is the only AI search engine that actively cites it, specifically for professionally oriented queries. The highest number of LinkedIn references is for Jobs and Career (51 citations), Business and Consumer Services (33 citations), and Finance (16 citations).

Intersecting linked-to domains in AI responses

We checked how frequently domains cited by one AI search engine appear in another. The higher the percentage, the more domains the tools have in common.

Here’s how the shared domains in AI responses break down:

- Bing Copilot/Google AIO: 9.81%

- Perplexity/Bing Copilot: 11.97%

- Bing Copilot/ChatGPT: 13.95%

- Perplexity/Google AIO: 18.52%

- Google AIO/ChatGPT: 21.26%

- Perplexity/ChatGPT: 25.19%

Perplexity and ChatGPT overlap the most, having 25.19% of their referenced domains in common. This is interesting given that only 20% of their top-linked-to websites coincide.

Google AI Overviews and ChatGPT also have a high overlap percentage (21.26%), meaning they are partially aligned in sourcing strategies. But Google’s overlap with Perplexity is slightly lower (18.52%).

ChatGPT has the highest overlap with other AI search engines, which may suggest it uses the most versatile set of sources or uses the most balanced algorithm to parse out results. It also has the most cited sources in its answers, which can influence the results.

Bing Copilot has the lowest overlap with all other tools, suggesting a distinct approach to sourcing. Bing Copilot and Google AI Overviews have the lowest overlap of any pair, at only 9.81%. This indicates that Microsoft and Google take very different approaches to citing information. The overlap between Copilot and Perplexity is also relatively low, at only 11.97%.
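One common way to express this kind of pairwise overlap is the share of shared domains among all distinct domains cited by either tool. The sketch below assumes that definition; this article does not spell out the exact formula, so treat both the formula and the function name `domain_overlap` as assumptions.

```python
def domain_overlap(domains_a, domains_b):
    """Shared domains as a percentage of all distinct domains cited by either tool."""
    a, b = set(domains_a), set(domains_b)
    union = a | b
    if not union:
        return 0.0
    return 100 * len(a & b) / len(union)

# Hypothetical domain lists for two tools.
tool_a = ["youtube.com", "reddit.com", "wikipedia.org"]
tool_b = ["youtube.com", "quora.com"]
print(domain_overlap(tool_a, tool_b))
```

Other definitions are possible, for example dividing by the size of the smaller set, and they would produce different percentages from the same data.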

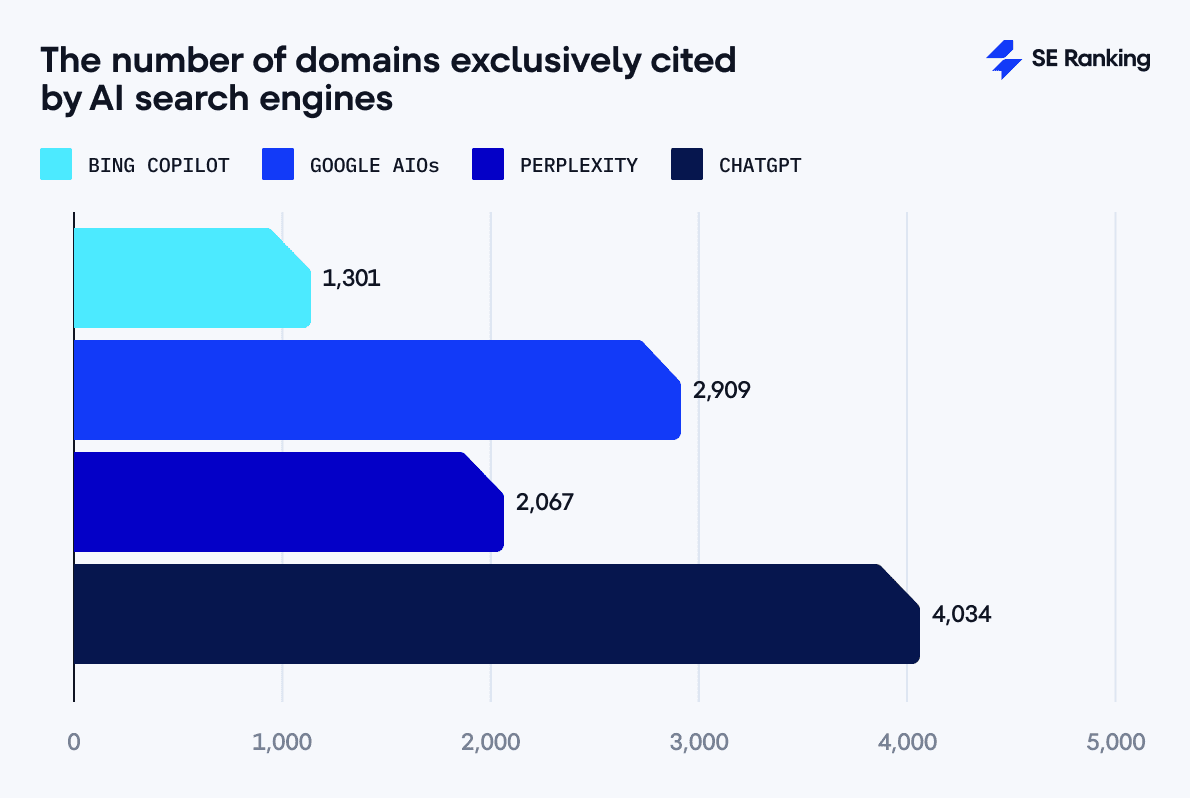

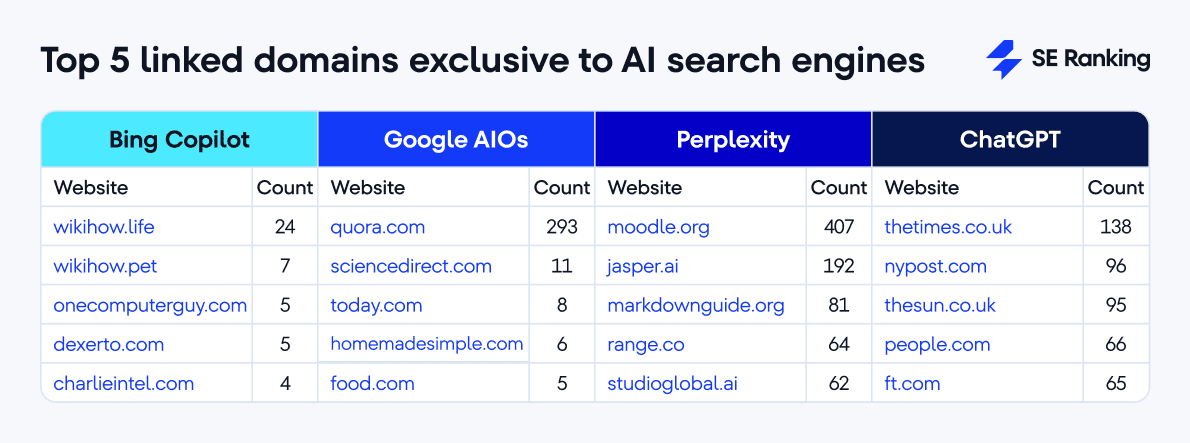

Unique domains linked to by AI answers

We considered the domain unique or exclusive if it was cited by only one AI tool from the set we analyzed.

Our data shows that ChatGPT has the highest number of unique domains (4,034). This can be explained by the fact that ChatGPT uses the largest number of cited sources compared to other AI search engines. It references many sources when generating answers that other AI search engines don’t use:

- Bing Copilot: 1,301 unique domains

- Google AIO: 2,909 unique domains

- Perplexity: 2,067 unique domains

- ChatGPT: 4,034 unique domains

Google AIOs come in second, followed by Perplexity and Bing Copilot. It’s worth noting the difference between the number of unique domains and how much they matter in SERPs. For example, although AIOs link to a ton of domains that other AI search engines don’t link to (2,909), only Quora.com gets cited frequently (293 times); other sources get featured much less often.
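The exclusivity rule described above (a domain is unique if only one tool cites it) can be sketched as a set difference. The function name and the sample data below are hypothetical.

```python
def exclusive_domains(domains_by_tool):
    """Map each tool to the set of domains that no other tool cites."""
    exclusive = {}
    for tool, domains in domains_by_tool.items():
        # Collect every domain cited by any of the other tools.
        others = set()
        for other_tool, other_domains in domains_by_tool.items():
            if other_tool != tool:
                others |= set(other_domains)
        exclusive[tool] = set(domains) - others
    return exclusive

# Hypothetical sample data.
sample = {
    "chatgpt": ["youtube.com", "thetimes.co.uk"],
    "perplexity": ["youtube.com", "moodle.org"],
    "google_aio": ["youtube.com", "quora.com"],
}
print(exclusive_domains(sample))
```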

Check out the top 5 unique domains that AI search engines link to:

When we compare the top five domains cited exclusively by each AI tool with the overall top 10 most-linked websites for each, it’s clear that many of these popular domains pop up across multiple AI search engines. This points to a shared set of trusted sources they all rely on, though there are some exceptions:

- Moodle.org: Exclusive to Perplexity and one of its top 10 frequently cited sources.

- Thetimes.co.uk: Unique to ChatGPT.

- Quora.com: Exclusive to Google AIOs and also their third most-linked-to website.

- Jasper.ai: Unique to Perplexity and its third top-referenced website.

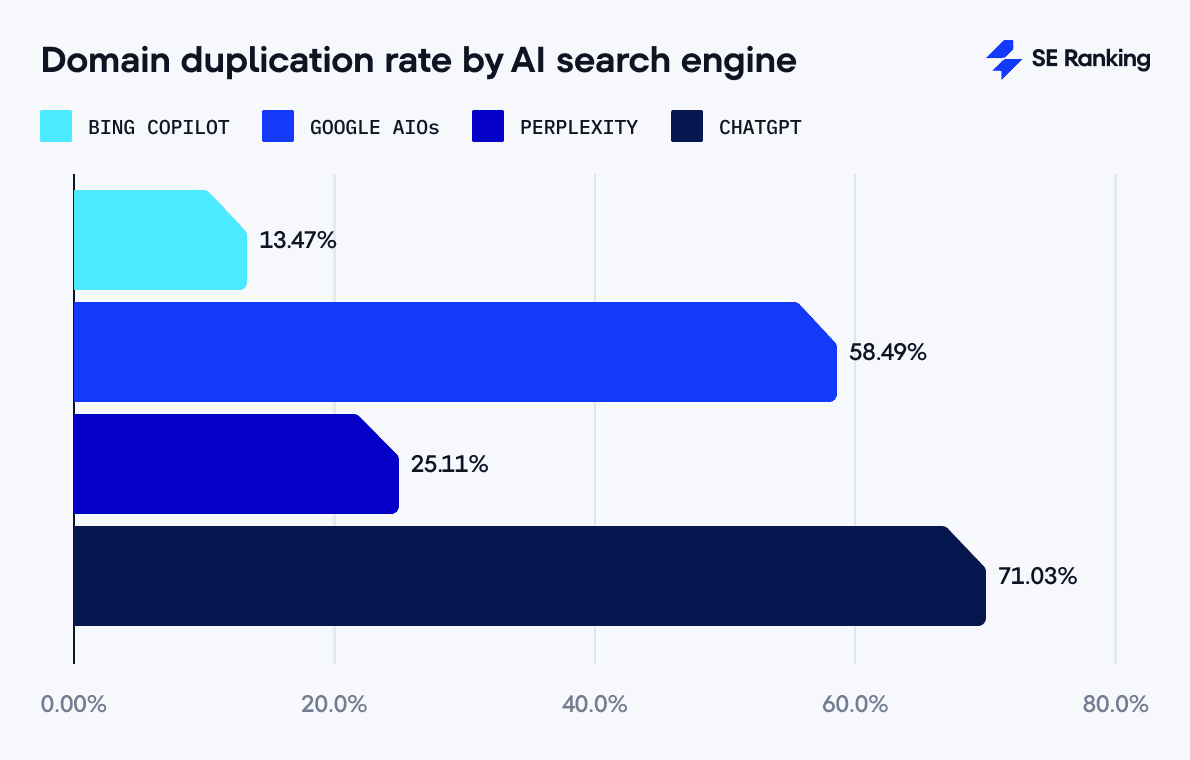

Duplicate domains in AI responses

Out of the 2,000 queries analyzed:

- ChatGPT produced 1,412 answers where at least two URLs from the same domain appeared. It cited duplicate domains at the highest rate (71.03%).

- Google AIOs generated 689 responses with duplicate domains, at a 58.49% rate.

- Perplexity used URLs from the same domain in 501 responses (a 25.11% rate).

- Bing Copilot repeated domains the least (just 203 queries), at a 13.47% rate.

The results show ChatGPT’s strong tendency to repeatedly cite certain websites. Google AI Overviews also prioritize some sources over others but have greater diversification than ChatGPT. Perplexity is more balanced, while Bing provides the most diverse sources from a broader range of websites.
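A duplicate-domain rate of this kind can be computed by checking, for each response, whether any domain appears at least twice among its cited URLs. The sketch below is a hypothetical illustration: it compares hostnames with a leading "www." removed, which is a simplification and an assumption about how domains are normalized.

```python
from collections import Counter
from urllib.parse import urlparse

def host_of(url):
    """Lowercased hostname with a leading 'www.' removed (a simplification)."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host

def has_duplicate_domain(urls):
    """True if at least two URLs in the list share the same hostname."""
    counts = Counter(host_of(url) for url in urls)
    return any(count >= 2 for host, count in counts.items() if host)

def duplicate_domain_rate(responses):
    """Percentage of responses with at least two URLs from the same domain."""
    if not responses:
        return 0.0
    flagged = sum(1 for urls in responses if has_duplicate_domain(urls))
    return 100 * flagged / len(responses)

# Hypothetical sample: each inner list holds the URLs cited in one response.
sample = [
    ["https://www.youtube.com/a", "https://youtube.com/b"],
    ["https://reddit.com/r/x", "https://wikipedia.org/y"],
    [],
    ["https://a.com"],
]
print(duplicate_domain_rate(sample))
```

Because it compares full hostnames, this sketch treats subdomains such as `en.wikipedia.org` and `de.wikipedia.org` as different domains. Grouping them would require a public suffix list.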

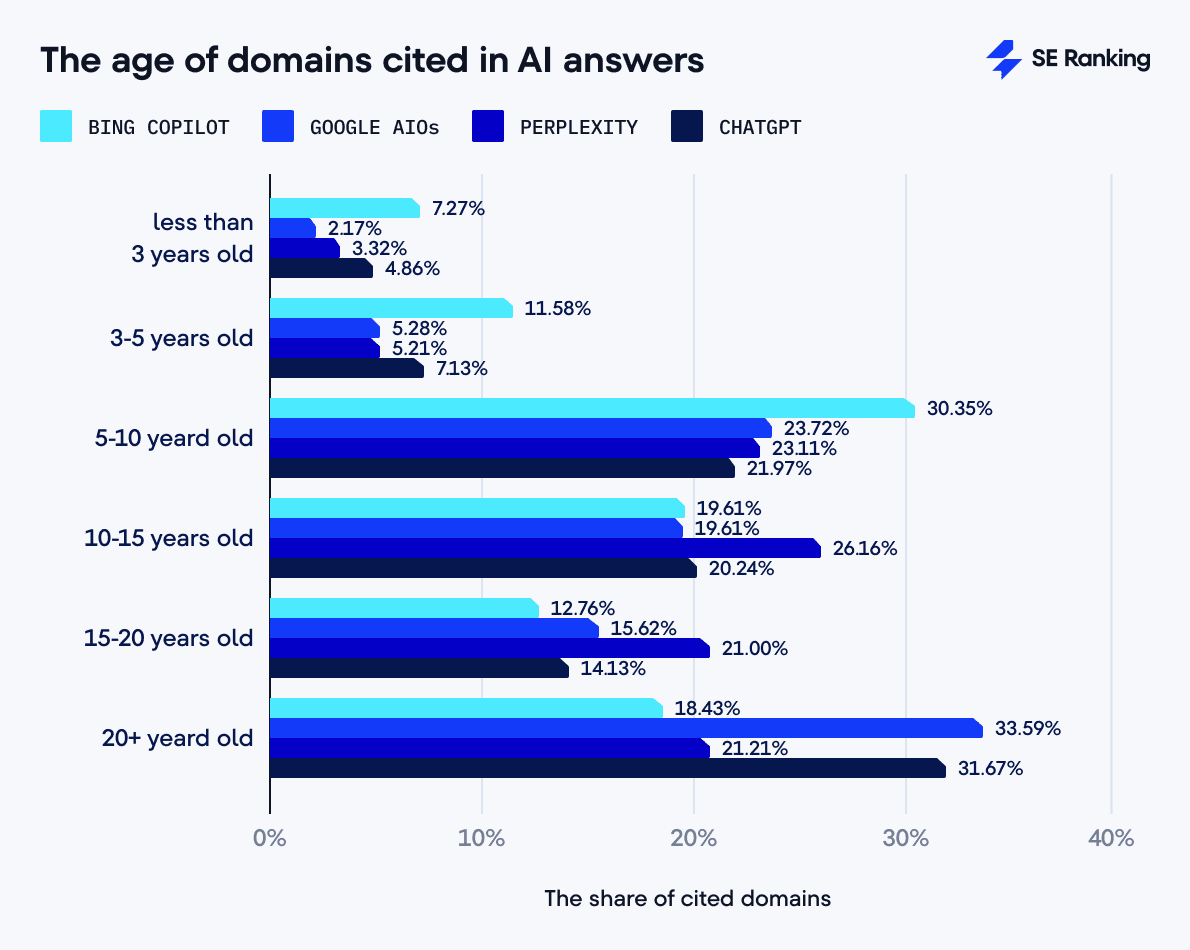

Age of domains cited in AI responses

The average age of domains cited by AI search engines follows this pattern:

- ChatGPT: 17 years

- Google AIO: 17 years

- Perplexity: 14 years

- Bing Copilot: 12 years

We see clearer preferences when grouping domains into younger (less than 5 years old) and older (more than 15 years old) categories.

The share of young domains cited by AI search engines:

- Bing Copilot: 18.85%

- ChatGPT: 11.99%

- Perplexity: 8.53%

- Google AIOs: 7.45%

The share of old domains cited by AI search engines:

- Google AIOs: 49.21%

- ChatGPT: 45.80%

- Perplexity: 42.31%

- Bing Copilot: 31.19%

Bing Copilot frequently links to domains less than 5 years old (18.85%), while Google AIOs have the lowest rate for this age group (7.45%). Looking at older domains (15+ years old), Google AIO references them at a 49.21% rate, compared to Bing Copilot’s 31.19%.
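The young and old shares above come from grouping cited domains by age. A minimal sketch of that grouping follows, using the same thresholds (under 5 years and over 15 years); the function name and sample ages are hypothetical.

```python
def age_group_shares(domain_ages):
    """Return (young_share, old_share) in percent for a list of domain ages in years.

    Young means less than 5 years old; old means more than 15 years old.
    """
    if not domain_ages:
        return (0.0, 0.0)
    total = len(domain_ages)
    young = sum(1 for age in domain_ages if age < 5)
    old = sum(1 for age in domain_ages if age > 15)
    return (100 * young / total, 100 * old / total)

# Hypothetical ages (in years) of domains cited by one tool.
ages = [1, 3, 7, 12, 16, 20, 25, 30]
print(age_group_shares(ages))
```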

ChatGPT takes the most contrasting approach to source selection. It has one of the highest percentages of citations from the oldest domains (31.67% for domains over 20 years old), but also has the second-highest citation rate for young domains (4.86% for domains 0-3 years old). It also strongly favors domains aged 5-10 years (21.97%) and 10-15 years (20.24%). For well-established historical info or core knowledge, ChatGPT likely sticks to the most reliable, time-tested sources.

Perplexity shows the most balanced distribution across middle and older age categories. Its highest percentage of citations (26.16%) goes to domains aged 10-15 years, the highest among all systems for this category. It also has substantial rates for domains aged 5-10 years (23.11%), 15-20 years (21.00%), and over 20 years (21.21%). Perplexity has a relatively low citation rate for the youngest domains (3.32% for domains 0-3 years old).

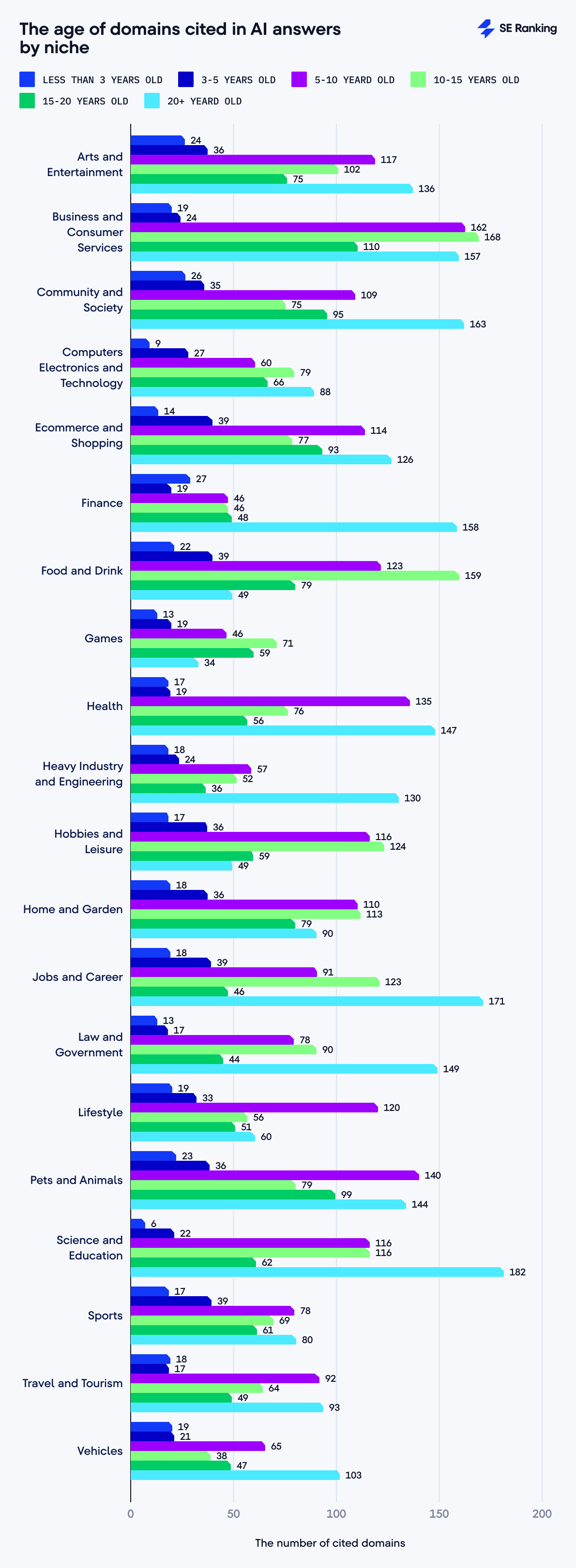

Age of domains cited in AI responses by niche

Below are the niches with the smallest share of young domains (up to 5 years old):

- Science and Education: 5.60%

- Law and Government: 7.70%

- Health: 8%

- Computers Electronics and Technology: 11%

Below are the niches with the smallest share of old domains (over 20 years):

- Food and Drink: 10.40%

- Games: 14%

A few niches stand out:

Finance has one of the highest shares of older domains. Around 46% of its sources are over 20 years old. Meanwhile, younger domains (less than 5 years old) make up only 13.50%. This reflects the financial sector’s strong preference for trusted, longstanding sources, where history and reputation carry the most weight.

Law and Government has a similar pattern. Domains over 20 years old account for approximately 38% of the total, and young domains (up to 5 years old) account for only about 7.70%.

Jobs and Career shows around 35% for older domains (over 20 years) and 11.70% for younger domains (up to 5 years).

Hobbies and Leisure has many more middle-aged domains. Domains at 5-10 years and 10-15 years of age dominate this niche, accounting for about 60% of the total.

Food and Drink (59%) and Home and Garden (59%) follow the same pattern, with middle-aged domains dominating.

Top-level domains linked in AI responses

We detected 46,299 URLs and 15,181 unique websites cited by AI search engines, and found 174 different TLDs.

Here are the top 10 most frequently cited TLDs across all the AI search engines we analyzed:

- .com: 37,477 links

- .org: 3,270 links

- .uk: 960 links

- .edu: 737 links

- .gov: 607 links

- .net: 554 links

- .au: 428 links

- .ai: 348 links

- .co: 303 links

- .io: 283 links
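A TLD tally like this one can be reproduced by taking the last label of each URL's hostname, which is why .uk and .au show up instead of .co.uk or .com.au. The sketch below is an illustration under that assumption; the function names and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def tld_of(url):
    """Return the last hostname label with a leading dot, e.g. '.uk'."""
    host = urlparse(url).hostname or ""
    return "." + host.rsplit(".", 1)[-1] if "." in host else ""

def tld_counts(urls):
    """Count how many links fall under each TLD, skipping URLs without one."""
    return Counter(tld for tld in map(tld_of, urls) if tld)

# Hypothetical sample of cited URLs.
sample = [
    "https://www.youtube.com/watch?v=abc",
    "https://www.bbc.co.uk/news",
    "https://jasper.ai/blog",
    "https://example.com/page",
]
print(tld_counts(sample).most_common())
```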

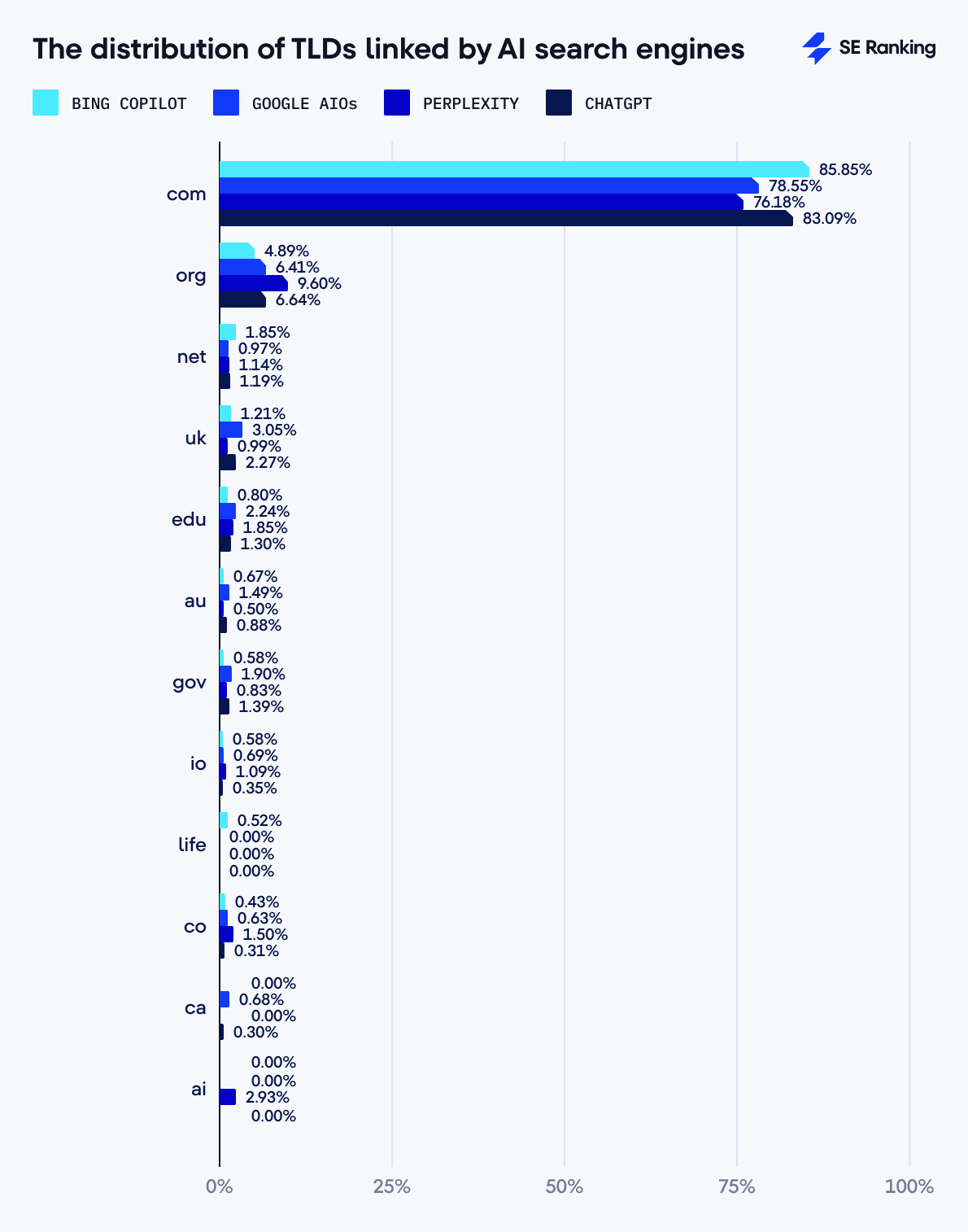

Here is our breakdown of TLDs by AI search engine:

The .com TLD is the leader across all AI search engines. Bing Copilot cites .com TLDs 85.85% of the time, followed by ChatGPT (83.09%), Google AIOs (78.55%), and Perplexity (76.18%).

The .org TLD ranks second across all AI search engines but with varying rates: 9.60% for Perplexity, 6.64% for ChatGPT, 6.41% for Google AIOs, and 4.89% for Bing.

The .uk TLD has high representation in Google AIOs (3.05%) and ChatGPT (2.27%) but much lower representation in Perplexity (0.99%) and Bing (1.21%). While the .au TLD is cited most by Google AIOs (1.49%), the rate doesn’t exceed 0.8% for other AI search engines. The .ca TLD is cited most by Google AIOs (0.68%) and ChatGPT (0.30%).

Perplexity stands out with a 2.93% rate for the .ai TLD, suggesting a greater tendency to cite AI-related sources.

Top-level domains linked in AI answers by niche

The greatest variety of TLDs appears in Health, Law and Government, and Science and Education, while the highest concentration of .com TLDs occurs in Food and Drink, Home and Garden, and Hobbies and Leisure.

We also see a correlation between topics and domain type. For example, the high percentage of .gov in the Law and Government niche (11.50%) and .edu in Science and Education (7.91%) reflects these industries’ thematic specificity.

The .ai TLD had its highest share in Business and Consumer Services (2.02%) and Science and Education (1.30%). This could reflect AI’s growing presence in these areas.

In the Games niche, the .gg TLD has a significant share (2.97%). This is much higher than its overall representation in search results, indicating its specificity to the gaming industry.

The .broadway TLD (0.23%) appears only in Arts and Entertainment, which makes sense given its specificity. Similarly, .pet (0.46%) and .fish (0.12%) appear in the Pets and Animals niche, and .bank (0.34%) in Finance.

The Heavy Industry and Engineering niche has a high share of .aero (0.19%), specific to the aviation industry.

The Computers Electronics and Technology niche shows increased representation of technical TLDs like .io (1.58%), .app (0.42%), and .codes (0.33%), reflecting the industry’s technological focus.

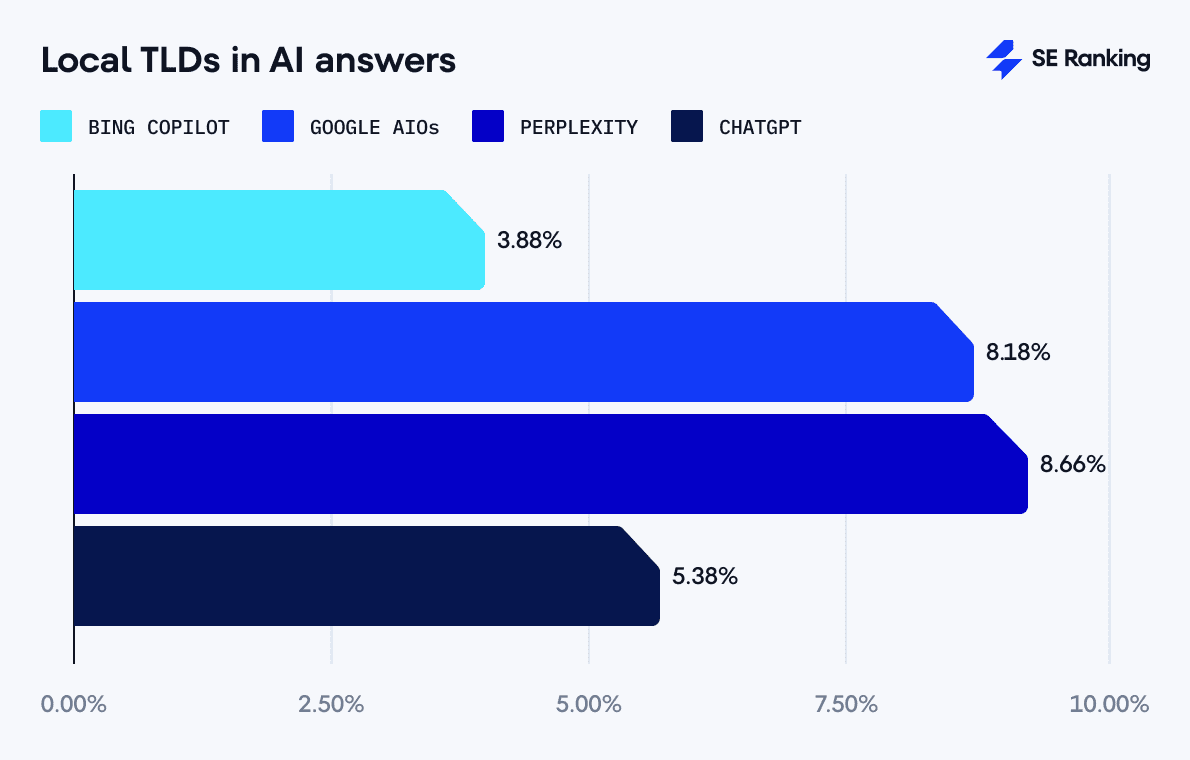

Local and thematic top-level domains in AI answers

Perplexity and Google tend to reference more local domains (country-specific domains like .uk, .de, .fr, etc.) in their answers compared to other tools:

- Perplexity: 8.66%

- Google AIO: 8.18%

- ChatGPT: 5.38%

- Bing Copilot: 3.88%

This suggests that Perplexity and Google prioritize local or region-specific sources more than the other tools do. Bing has a much stronger preference for global over local domains when providing information.

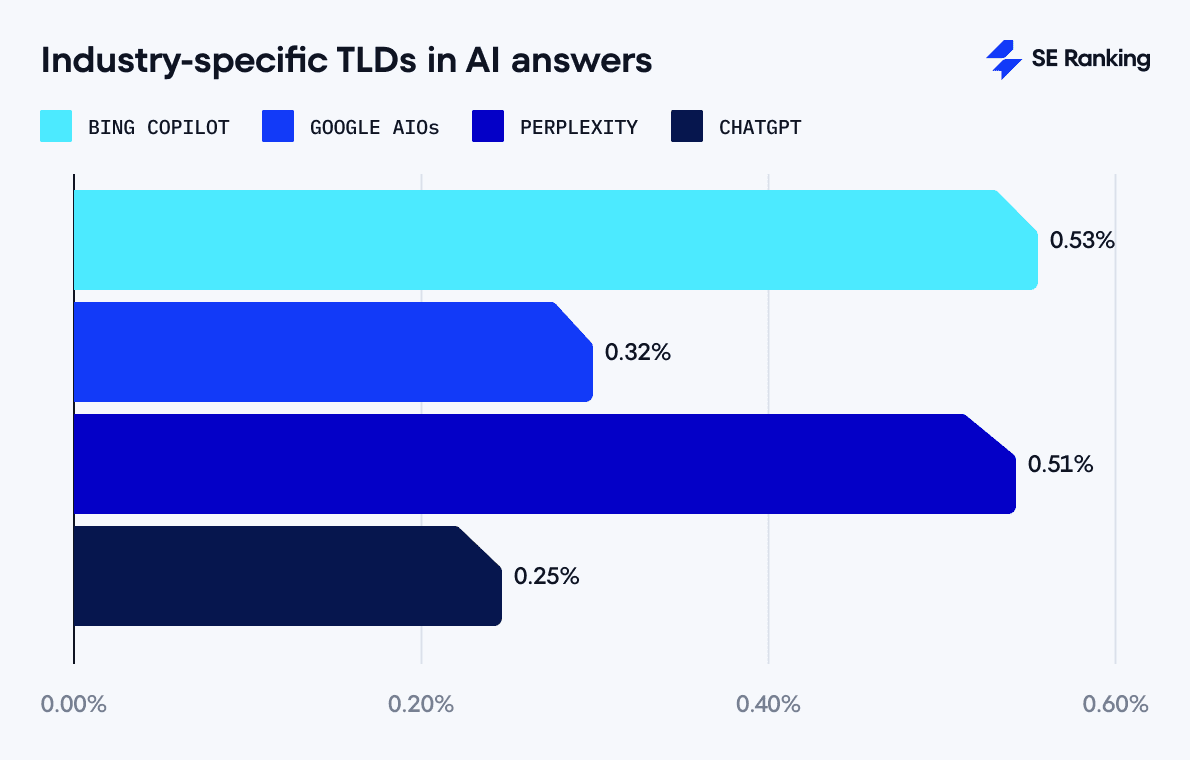

As for industry-specific domains (like .health, .pet, .bank, .art, .training, .media, etc.), Bing Copilot takes the lead:

- Bing Copilot: 0.53%

- Perplexity: 0.51%

- Google AIOs: 0.32%

- ChatGPT: 0.25%

These numbers indicate that industry-specific domain usage is low across all tools, representing less than 1% of citations. Bing and Perplexity are slightly more likely to reference specialized industry domains compared to Google and ChatGPT, but with only slight differences.

YouTube stands out as a go-to source across nearly all AI search engines. ChatGPT references it 11.30% of the time, Perplexity is close behind at 11.11%, and Google AIOs links to it in 6.31% of cases. Bing Copilot is the only AI search engine that refers to YouTube less than 1% of the time (0.86%).

ChatGPT produces reference-heavy responses, with 10.42 links per response. But it has the highest domain duplication rate (71.03%), repeatedly referencing the same websites. ChatGPT’s sources include a mix of user-generated content (Reddit, Wikipedia, TikTok), major media outlets (The Times, The Guardian), and professional sources (Investopedia, Microsoft). While it favors older domains (45.80% are over 15 years old), it also includes newer sources at a relatively high rate (11.99% are less than 5 years old).

Google AI Overviews take a balanced but reference-heavy approach, with an average of 9.26 links per response. Their domain repetition rate (58.49%) is lower than ChatGPT’s but still high. They stand out by frequently citing professional networks (LinkedIn, Indeed) and community-driven platforms (Quora, Reddit). Google also has the largest share of citations from older domains (49.21% over 15 years old).

Perplexity follows a structured and consistent referencing strategy, most frequently citing exactly 5 sources per response (in 1,980 cases). It has a lower domain repetition rate (25.11%) than ChatGPT and Google, and sources educational and AI-related content, referencing Moodle, GitHub, Jasper.ai, and Studioglobal.ai. Perplexity most often references 10-15-year-old websites, at a 26.16% rate. It shares the highest percentage of domains with ChatGPT (25.19%), suggesting a similar approach to selecting sources.

Bing Copilot takes a minimalist approach, using the fewest links per response (3.13 on average) and the lowest domain repetition rate (13.47%). It frequently relies on a small set of practical websites, such as WikiHow, Indeed, and Healthline. Of all the AI search engines, Bing references the highest percentage of newer domains (18.85% under 5 years old), making it more open to emerging sources. It also has the least domain overlap with other AI search engines, indicating its distinct and independent approach.

Content analysis: Answer length, similarity, complexity, objectivity, and more

Length of AI responses

Our analysis shows clear differences in the average length of answers in each AI search engine:

- Bing generates the shortest responses, averaging only 398 characters (83 words) per response.

- AI Overviews come second, with an average of 997 characters (191 words).

- Perplexity ranks third, with an average of 1,310 characters (257 words) per response.

- ChatGPT produces the longest answers, averaging 1,686 characters (318 words), roughly four times longer than Bing.

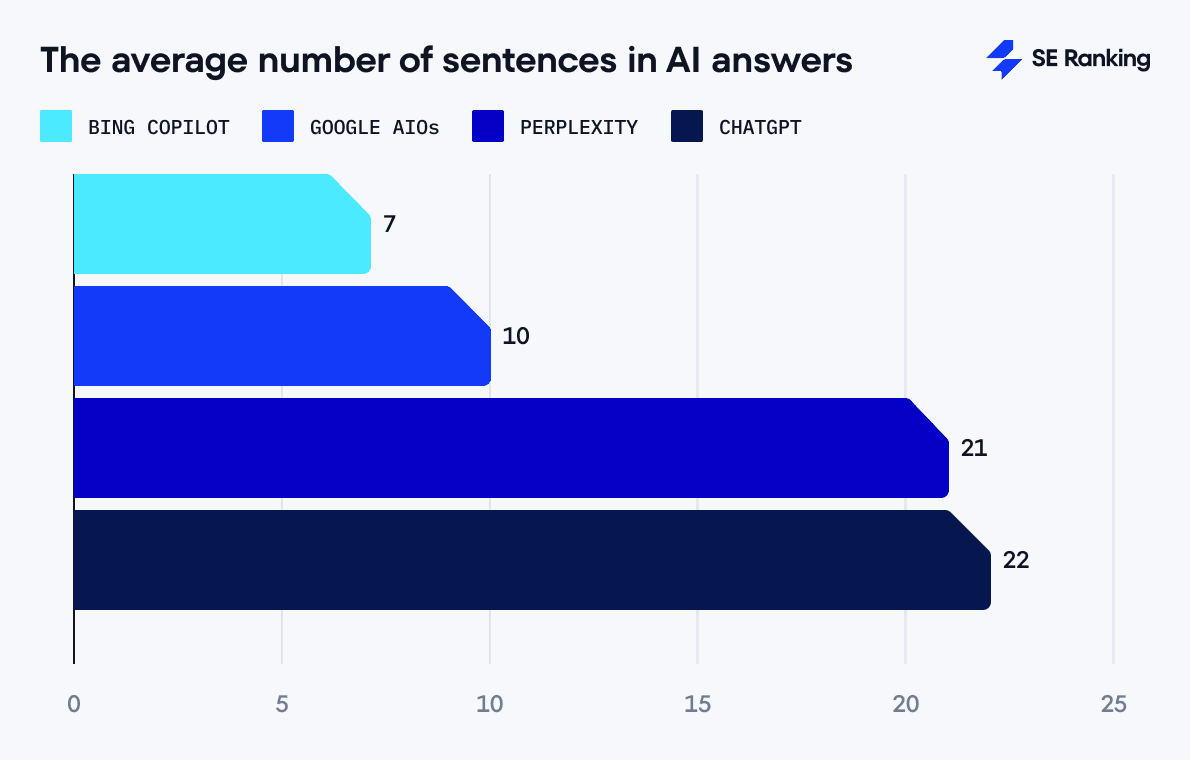

As for the average number of sentences per response, ChatGPT and Perplexity show similar results, averaging 22 and 21 sentences, respectively. Google AI Overviews contain 10 sentences per answer on average, and Bing Copilot averages only 7 sentences.

We also examined the average number of characters per sentence, calculated by dividing the total number of characters by the total number of sentences. The results show the following:

- Bing Copilot: 60 characters per sentence

- Perplexity: 63 characters per sentence

- ChatGPT: 78 characters per sentence

- Google AIO: 101 characters per sentence

These findings highlight an interesting pattern: AI search engines that produce longer answers typically use shorter sentences.

ChatGPT and Perplexity gave much longer, more detailed responses, with averages of about 1,686 and 1,310 characters, respectively. Both tools generate significantly more sentences per response (22 and 21 on average), but their sentences are shorter and easier to digest. This reflects their structured approach of dividing information into multiple sub-points or steps.

Google AIO responses have pretty high character counts (997) but fewer sentences (10). This means each sentence is longer on average (101 characters per sentence). This suggests Google’s tendency to deliver information in denser, more complex sentence structures or longer explanations per sentence.

Bing Copilot gives short answers (398 characters) with fewer sentences (7). Each sentence is relatively short (approximately 60 characters per sentence). This indicates a style of brief answers suitable for quick scanning but without detailed context.
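The characters-per-sentence figure described above divides the total number of characters by the total number of sentences. Here is a minimal sketch of that calculation. The sentence splitter is a deliberately naive assumption (it breaks after ".", "!" or "?" followed by whitespace), and the function names are hypothetical.

```python
import re

def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]

def chars_per_sentence(responses):
    """Total characters divided by total sentences across all responses."""
    total_chars = sum(len(response) for response in responses)
    total_sentences = sum(len(split_sentences(response)) for response in responses)
    if total_sentences == 0:
        return 0.0
    return total_chars / total_sentences

# Hypothetical responses from one tool.
sample = ["Short one. Another here!", "Third."]
print(chars_per_sentence(sample))
```

A naive splitter miscounts abbreviations, decimals, and bulleted answers without end punctuation, so results depend heavily on how sentences are detected.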

AI response length by niche and tool

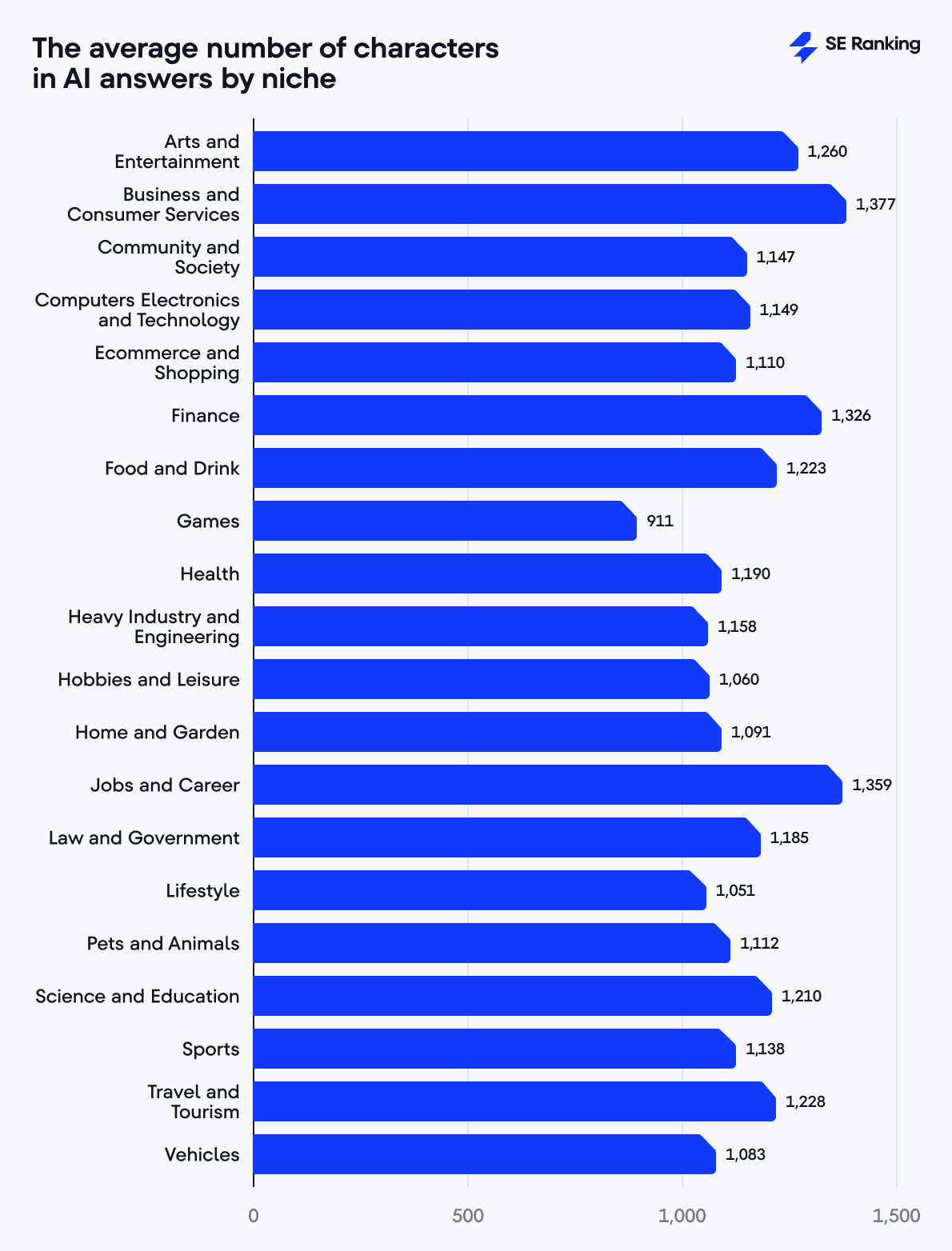

We analyzed response length by niche to find out which three industries had the longest AI answers:

- Business and Consumer Services: 1,377 characters

- Jobs and Career: 1,359 characters

- Finance: 1,326 characters

These niches tend to be more complex, leading AI to produce fuller, more detailed responses. For example, topics in Business and Consumer Services and Jobs and Career often include strategy descriptions, career guidance, or decision-making help. Answers on the Finance topic frequently require detailed and accurate explanations or multiple perspectives. This complexity naturally results in longer responses.

Niches with the shortest AI responses include:

- Games: 911 characters

- Lifestyle: 1,051 characters

- Hobbies and Leisure: 1,060 characters

These niches tend to be more direct, concise, and straightforward. Answers related to Games include quick tips, cheat codes, or game mechanics, and don’t require detailed explanations. Lifestyle and Hobbies and Leisure often result in more laid-back content with brief recommendations or simple explanations.

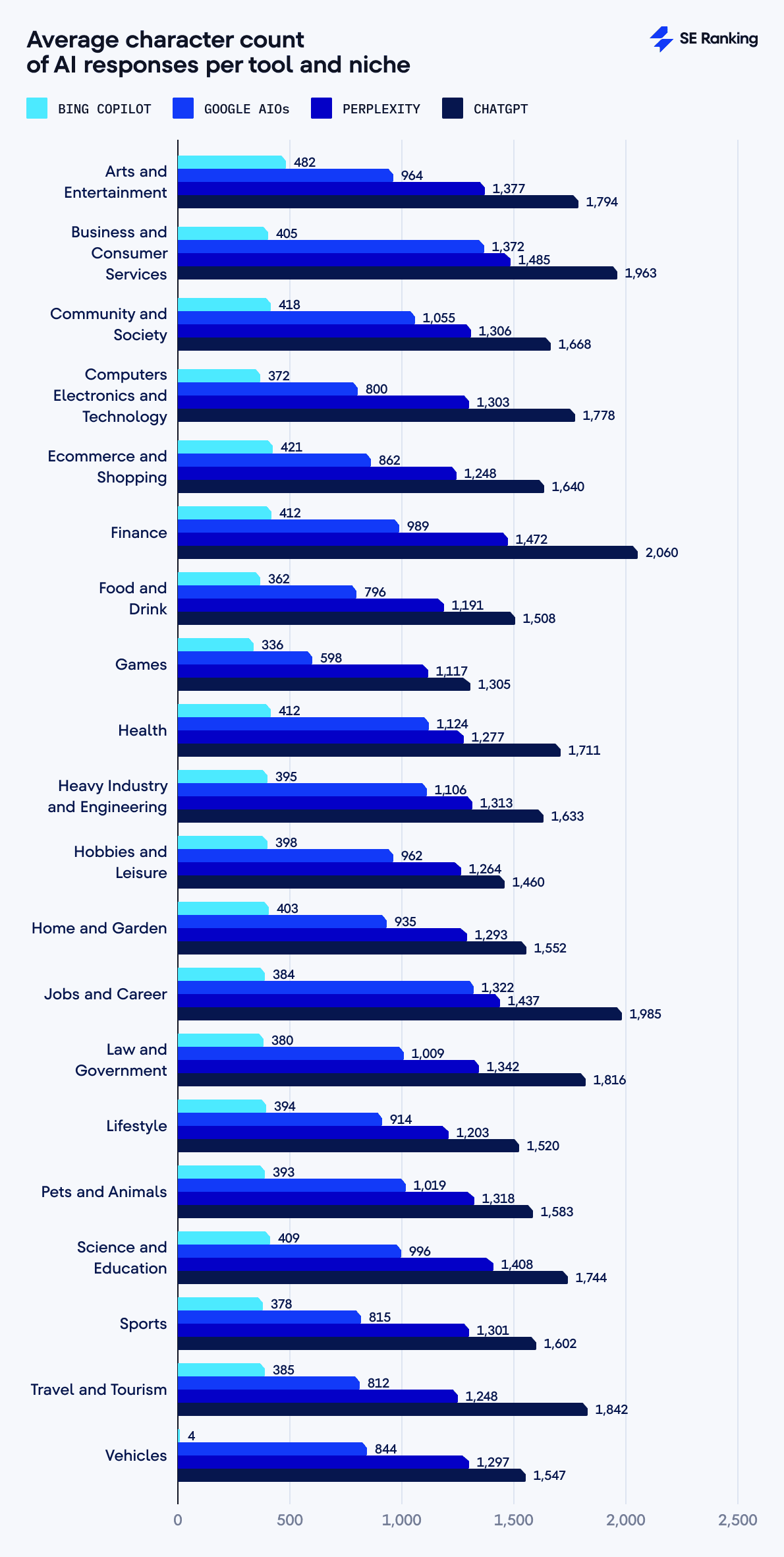

Here’s what we found when analyzing the length of AI responses in each niche:

- ChatGPT consistently provides the longest responses across nearly all niches.

- Bing Copilot consistently offers the shortest answers.

- Google AIOs and Perplexity typically occupy the middle ground, with Perplexity leaning toward slightly longer responses than Google.

All AI search engines gave the shortest answers in the Games niche:

- Bing Copilot: 336 characters

- Google AIOs: 598 characters

- Perplexity: 1,117 characters

- ChatGPT: 1,305 characters

ChatGPT gives longer answers than any other tool when responding to Finance-related prompts, with 2,060 characters on average. This is significantly higher than the tool's overall average (1,686 characters). Google AI Overviews show a similar pattern in the Business and Consumer Services niche (1,372 characters), with responses over 35% longer than their overall average (997 characters).

Perplexity gave the longest responses in the Business and Consumer Services niche, with 1,485 characters, while Bing Copilot led in the Arts and Entertainment niche, with 482 characters per response.

Semantic similarity of AI responses

We used a two-step approach to assess how semantically similar AI-generated responses from different search tools are. First, we used the sentence-transformers library to turn response text into vector embeddings. Then, we applied the cosine similarity function from the sklearn library to calculate similarity based on the cosine of the angle between pairs of embeddings.

A higher cosine similarity score (on a scale from 0 to 1) indicates greater semantic closeness between response pairs.
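The second step can be sketched in plain Python. This is a minimal illustration of the cosine formula only: the study used embeddings from sentence-transformers and the sklearn function, whereas the short vectors below are hypothetical stand-ins for real embeddings.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen here for empty or all-zero vectors
    return dot / (norm_a * norm_b)

# Hypothetical 4-dimensional "embeddings" of three responses to one query
emb_tool_a = [0.2, 0.7, 0.1, 0.4]
emb_tool_b = [0.25, 0.6, 0.0, 0.5]
emb_tool_c = [0.9, 0.0, 0.3, 0.1]

# Responses A and B point in nearly the same direction, so they score higher
print(cosine_similarity(emb_tool_a, emb_tool_b) > cosine_similarity(emb_tool_a, emb_tool_c))
```

A pairwise score like this would be computed for each query and then averaged per tool pair to get figures comparable to the ones reported here.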

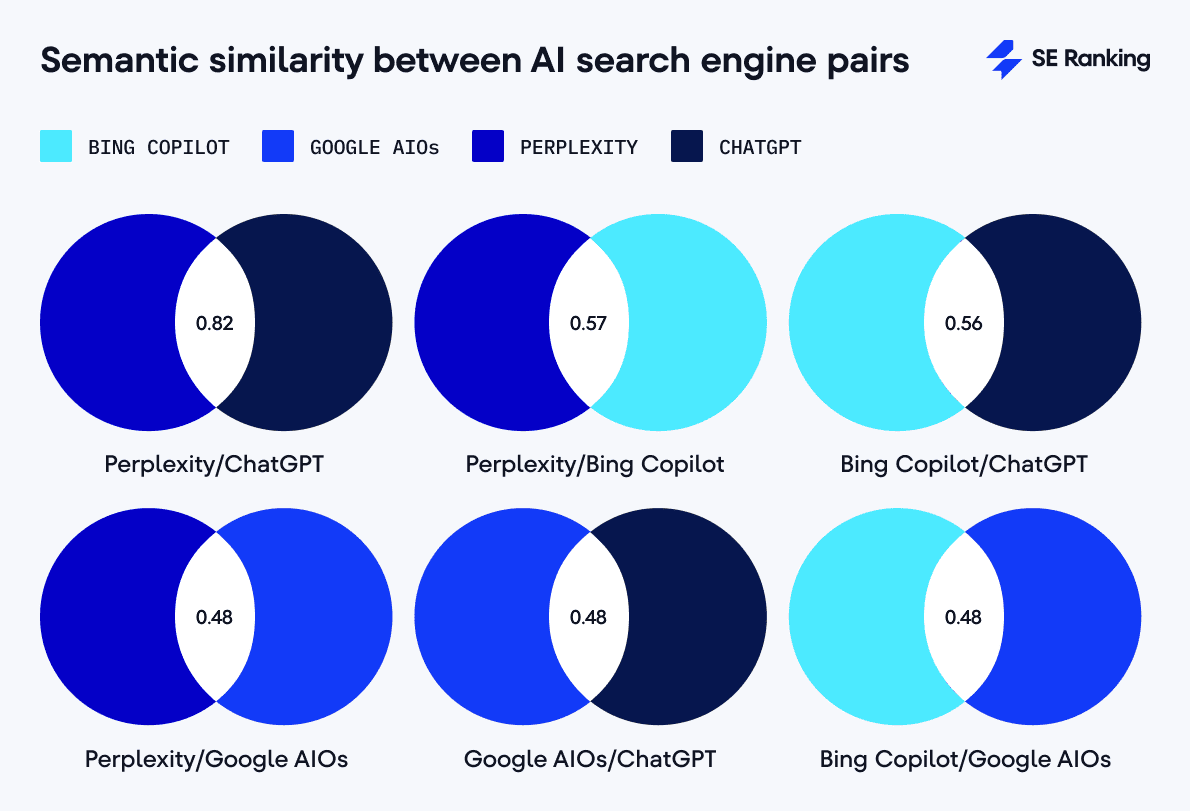

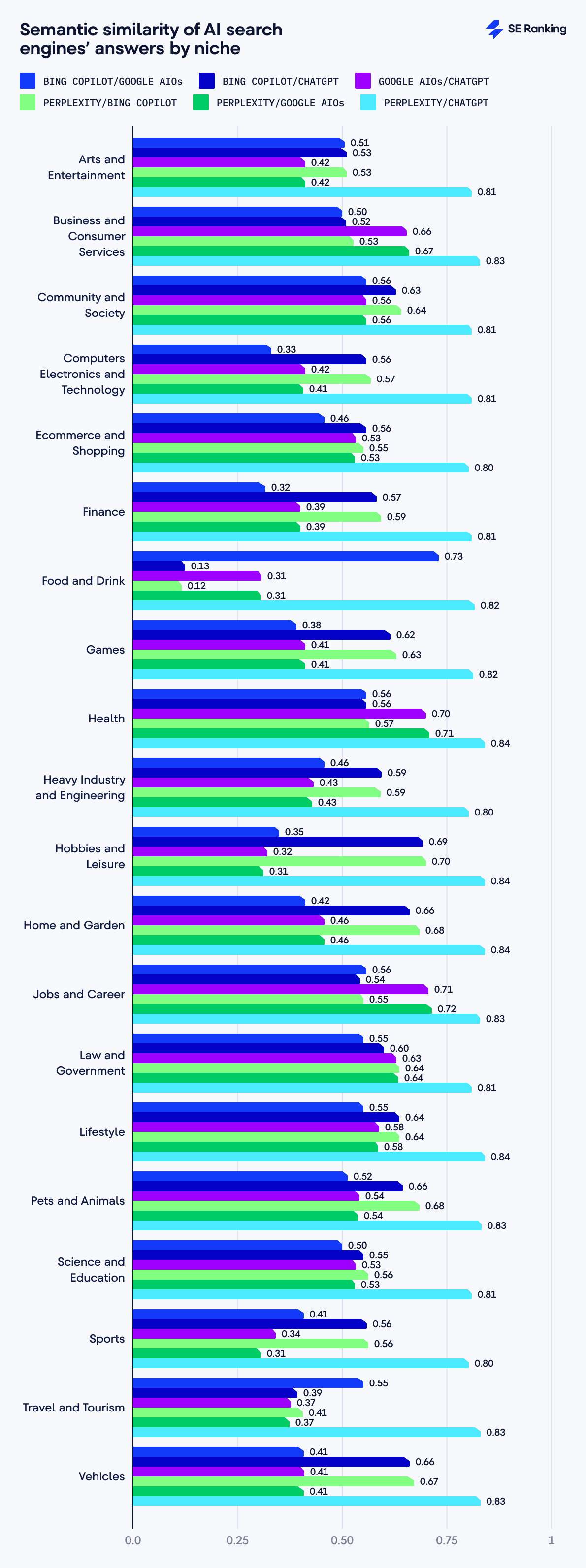

Here are our results after comparing responses across tool pairs:

- Perplexity/ChatGPT: 0.82

- Perplexity/Bing Copilot: 0.57

- Bing Copilot/ChatGPT: 0.56

- Perplexity/Google AIO: 0.48

- Google AIO/ChatGPT: 0.48

- Bing Copilot/Google AIO: 0.48

Perplexity and ChatGPT have the strongest semantic similarity (0.82). This suggests that the responses from these tools are closely aligned in content. Both platforms may rely on similar techniques for interpreting queries or on similarly trained models.

Google AI Overviews show consistently lower semantic similarity with the other tools (0.48). This suggests a distinct approach to generating answers. Google produces moderate-length responses that differ significantly from the detailed, extensive explanations typical of Perplexity and ChatGPT.

Bing is moderately similar to Perplexity and ChatGPT in semantics. It shares some semantic traits with both despite its tendency to generate shorter answers. Bing may have some overlap in key areas, but its responses have less depth.

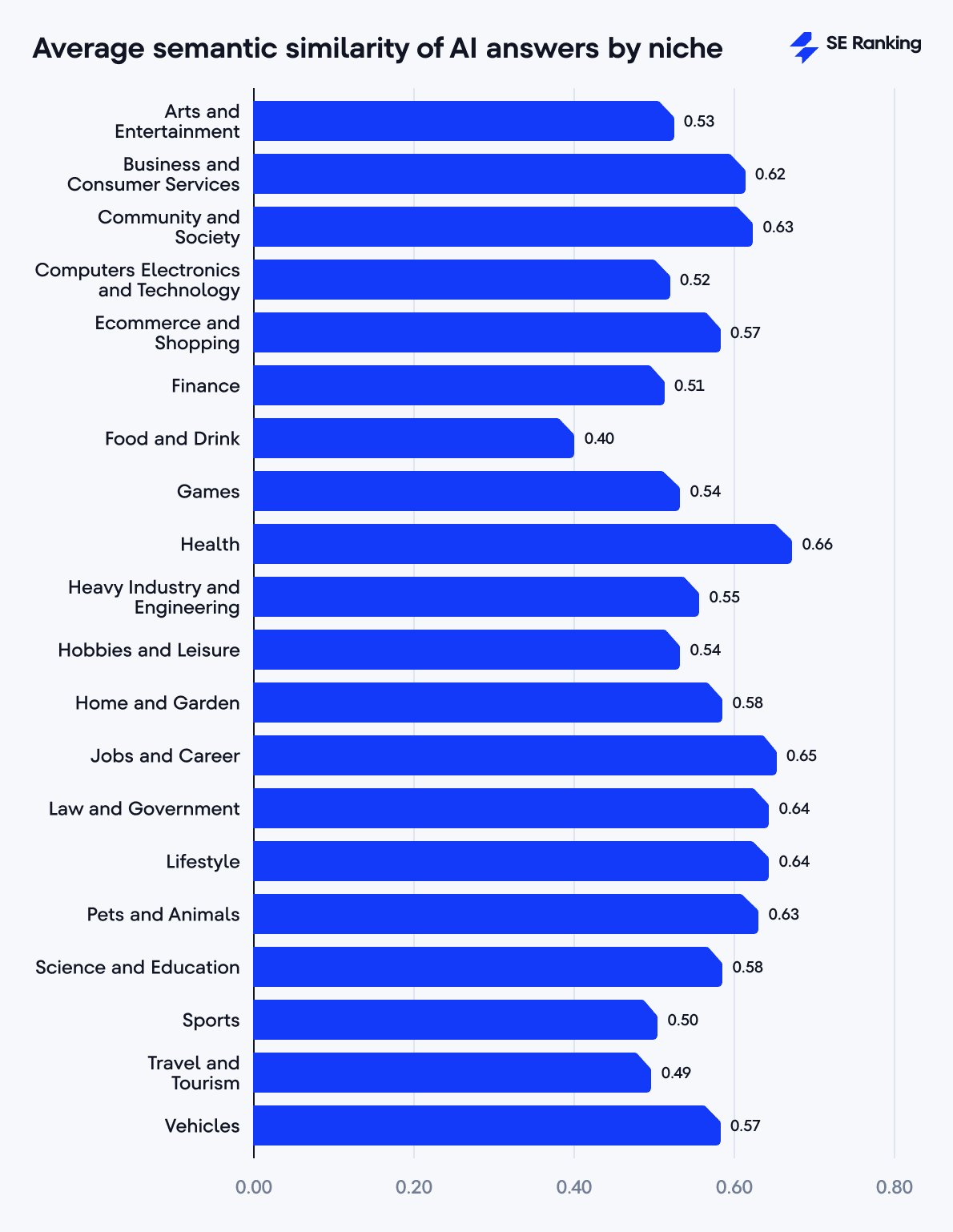

The semantic similarity of AI responses by niche and tool

Here are the niches where the tools give the most semantically similar answers:

- Health: 0.66

- Jobs and Career: 0.65

- Law and Government & Lifestyle: 0.64

- Community and Society & Pets and Animals: 0.63

- Business and Consumer Services: 0.62

Niches with the lowest similarity scores include:

- Computers Electronics and Technology: 0.52

- Finance: 0.51

- Sports: 0.50

- Travel and Tourism: 0.49

- Food and Drink: 0.4

The semantic similarity between ChatGPT and Perplexity remains consistently high across all niches (from 0.80 to 0.84), even for industries where other AI tool pairs show notable differences.

The semantic similarity score between these two tools peaks at 0.84 for the Health, Hobbies and Leisure, Home and Garden, and Lifestyle niches. This could be explained by the more subjective nature of these topics. Perplexity and ChatGPT are so alike in these areas that they could easily substitute for each other.

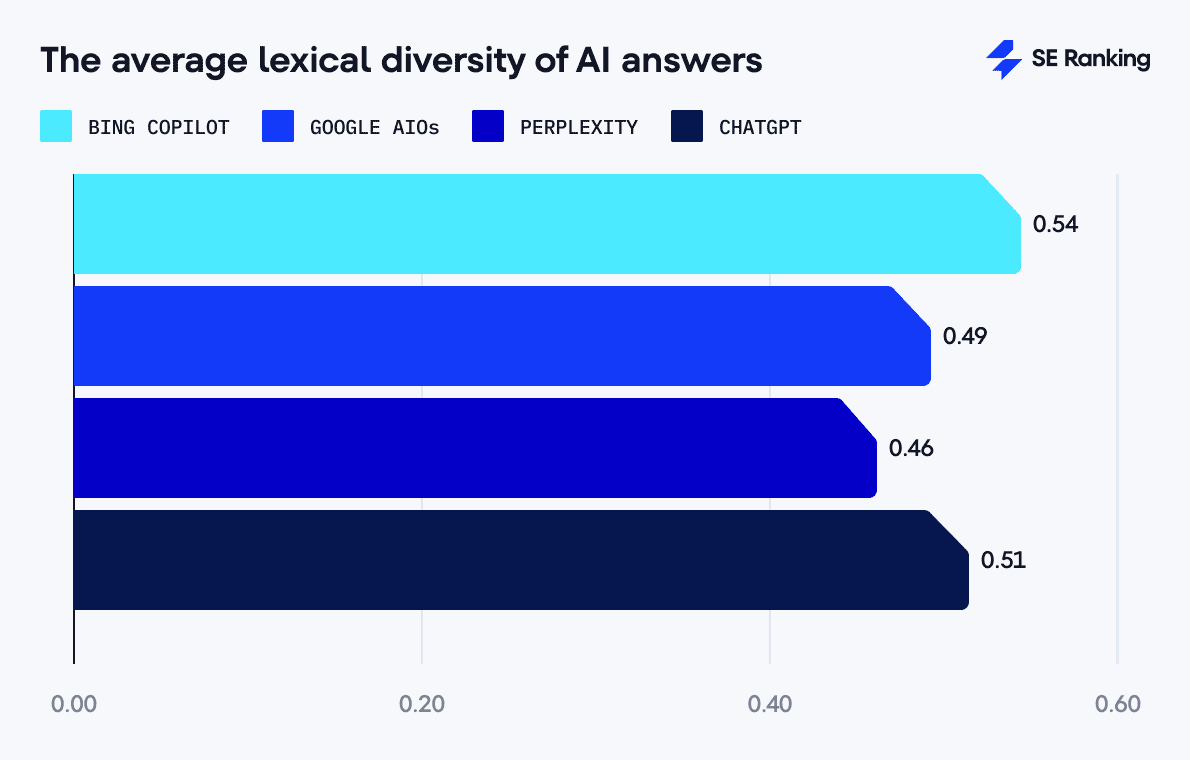

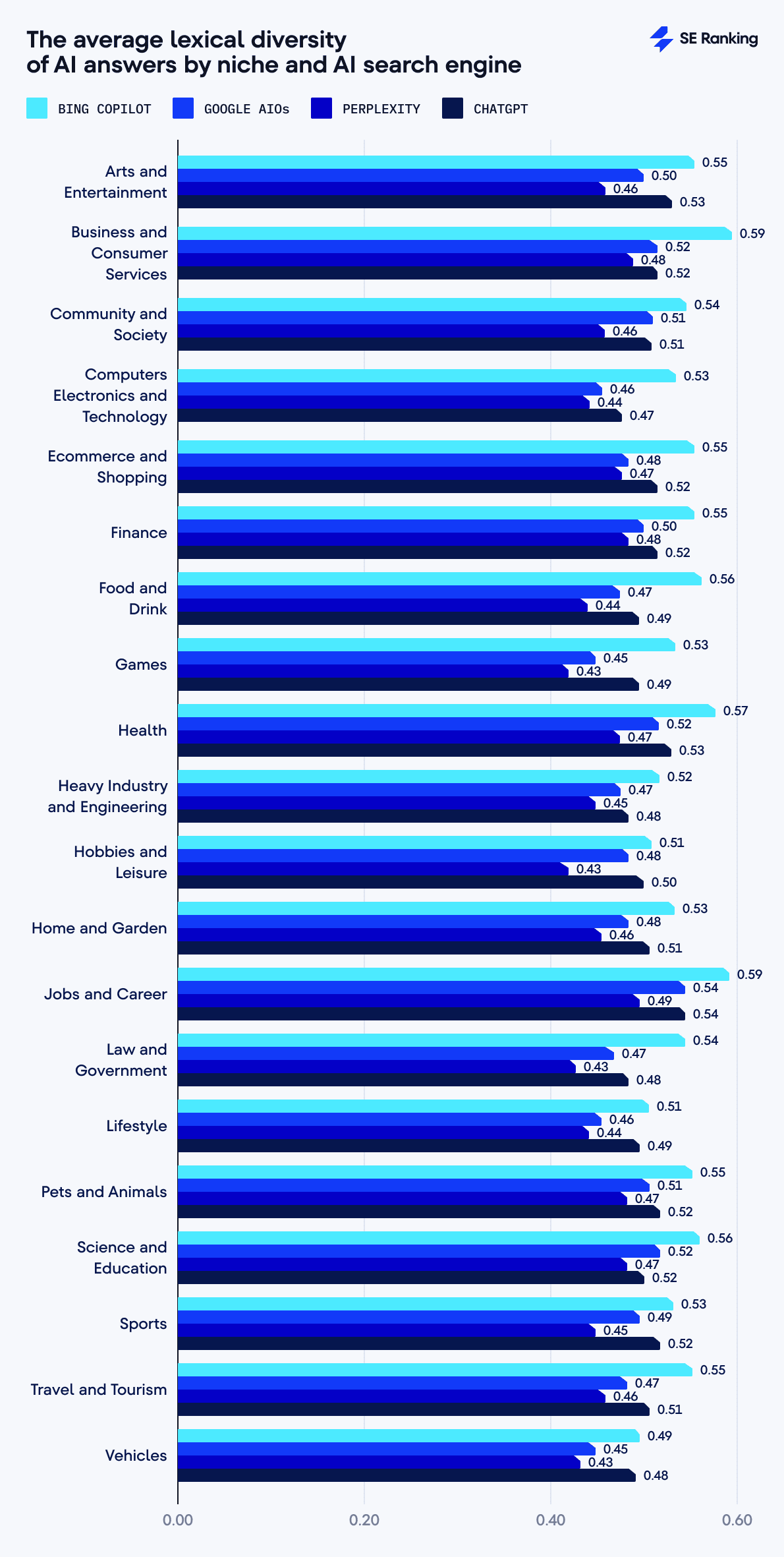

Lexical diversity of AI responses

The lexical diversity score shows how varied a text's vocabulary is. Higher scores suggest a broader vocabulary range, while lower scores indicate more word repetition.

For our analysis, we calculated the ratio of unique words to all words in the responses and aggregated the data by tool to get the average value for each. We excluded stop words like to, and, a, it, is, etc., provided by the NLTK Stopword List.
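The ratio described above can be sketched as follows. This is a minimal illustration under stated assumptions: the small `STOP_WORDS` set is a stand-in for the NLTK list, the regex tokenizer is a simplification, and computing the ratio per response before averaging is one reading of the aggregation step.

```python
import re

# Tiny illustrative stop-word set; the study used the NLTK stop-word list.
STOP_WORDS = {"to", "and", "a", "it", "is", "the", "of", "in"}

def lexical_diversity(text, stop_words=STOP_WORDS):
    """Unique words divided by all words, after dropping stop words."""
    words = [w for w in re.findall(r"[a-z']+", text.lower()) if w not in stop_words]
    return len(set(words)) / len(words) if words else 0.0

def average_diversity(responses):
    """Mean per-response score for one tool (assumed aggregation)."""
    scores = [lexical_diversity(r) for r in responses]
    return sum(scores) / len(scores) if scores else 0.0

# "filter" repeats, so 3 unique words out of 4 remain after stop-word removal
print(lexical_diversity("Clean the filter and rinse the filter"))
```

Short texts have fewer chances to repeat a word, which is worth keeping in mind when comparing tools with very different answer lengths.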

Below are the results:

- Bing Copilot: 0.54

- ChatGPT: 0.51

- Google AIOs: 0.49

- Perplexity: 0.46

We were surprised to see that Bing got the highest lexical diversity score. It generates the shortest answers but uses the most diverse vocabulary, though this could be due to the fact that shorter answers tend to have less repetition. If each sentence communicates unique information, it could lead to more diverse vocabulary usage.

ChatGPT provides lengthy and detailed answers with relatively high lexical diversity. Google AIOs have slightly lower lexical diversity, which suggests repeated keywords or standardized phrasing. Perplexity had the lowest lexical diversity.

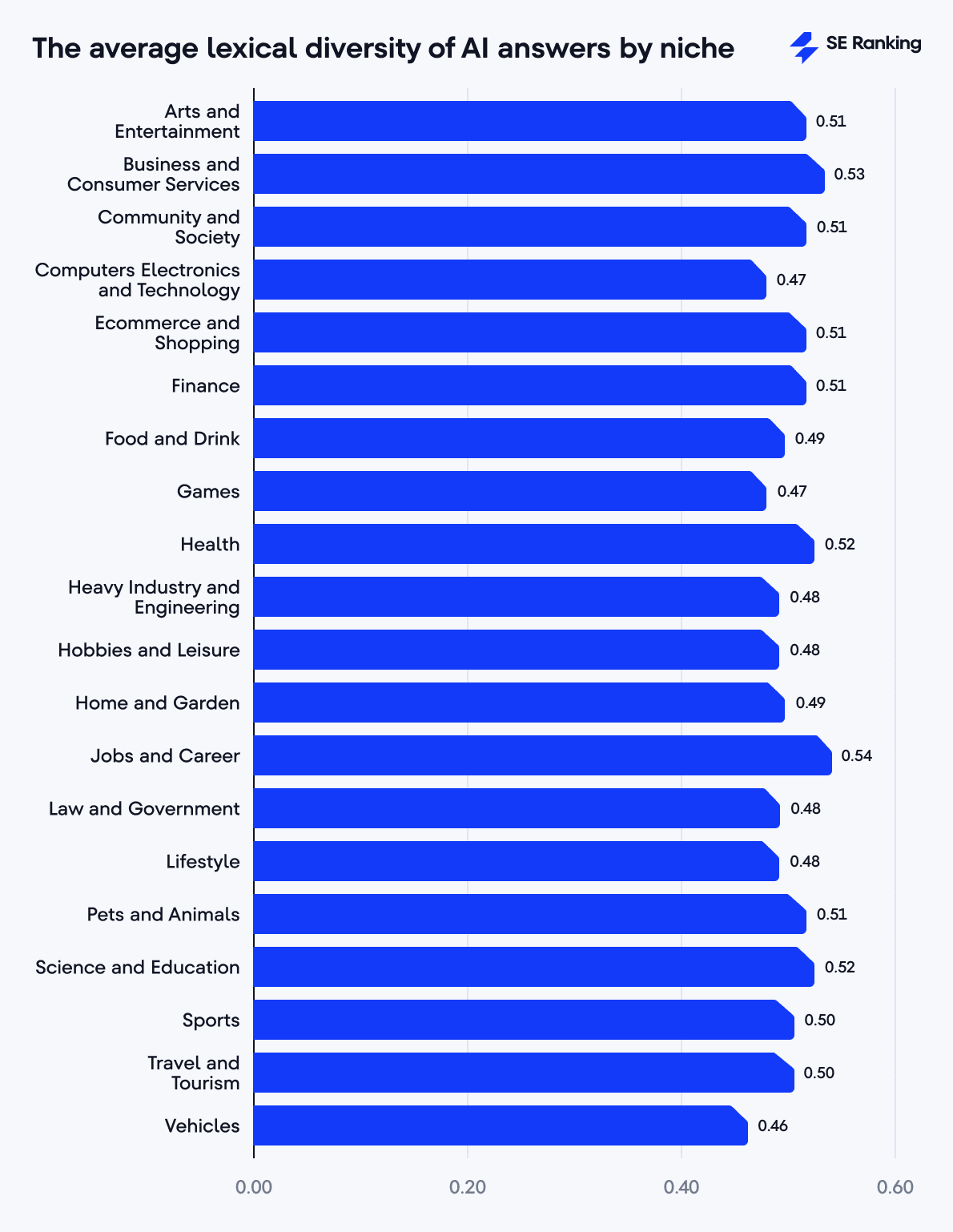

Lexical diversity of AI responses by niche and tool

As for the average lexical diversity by niche, the top-scoring ones include:

- Jobs and Career: 0.54

- Business and Consumer Services: 0.53

- Health & Science and Education: 0.52

These niches typically cover complex, detailed topics that call for nuanced explanations, broad vocabularies, and specific terminology. According to our data, these industries also tend to have longer answers, averaging 1,377 characters for Business and Consumer Services and 1,359 characters for Jobs and Career.

Niches with the lowest lexical diversity are:

- Heavy Industry and Engineering, Hobbies and Leisure, Law and Government, & Lifestyle: 0.48

- Computers Electronics and Technology & Games: 0.47

- Vehicles: 0.46

We again see some connection with the average length of AI answers, particularly for niches such as Games (911 characters), Lifestyle (1,051 characters), and Hobbies and Leisure (1,060 characters).

Lower lexical diversity in these niches could indicate more repetitive language usage, possibly due to simpler or highly specialized content. For example, Vehicles or Computers Electronics and Technology might repeatedly reference technical specifications, models, or features. Niches like Games or Food and Drink might reuse common descriptive phrases or instructions.

When looking at the lexical diversity of AI responses generated by the tools we analyzed for each niche, we see Bing Copilot and Perplexity on opposite sides. Bing Copilot consistently provides responses with the highest lexical diversity across all niches, while Perplexity consistently exhibits the lowest lexical diversity scores.

Niches with the longest answers tend to have the highest lexical diversity across all tools. For example, in the Jobs and Career niche, the lexical diversity is 0.59 for Bing, 0.54 for Google AIOs, 0.49 for Perplexity, and 0.54 for ChatGPT. Each tool scores higher in this niche than its overall average (0.54 for Bing, 0.49 for Google AIOs, 0.46 for Perplexity, and 0.51 for ChatGPT).

This indicates that the topic’s complexity affects each answer’s length and linguistic richness.

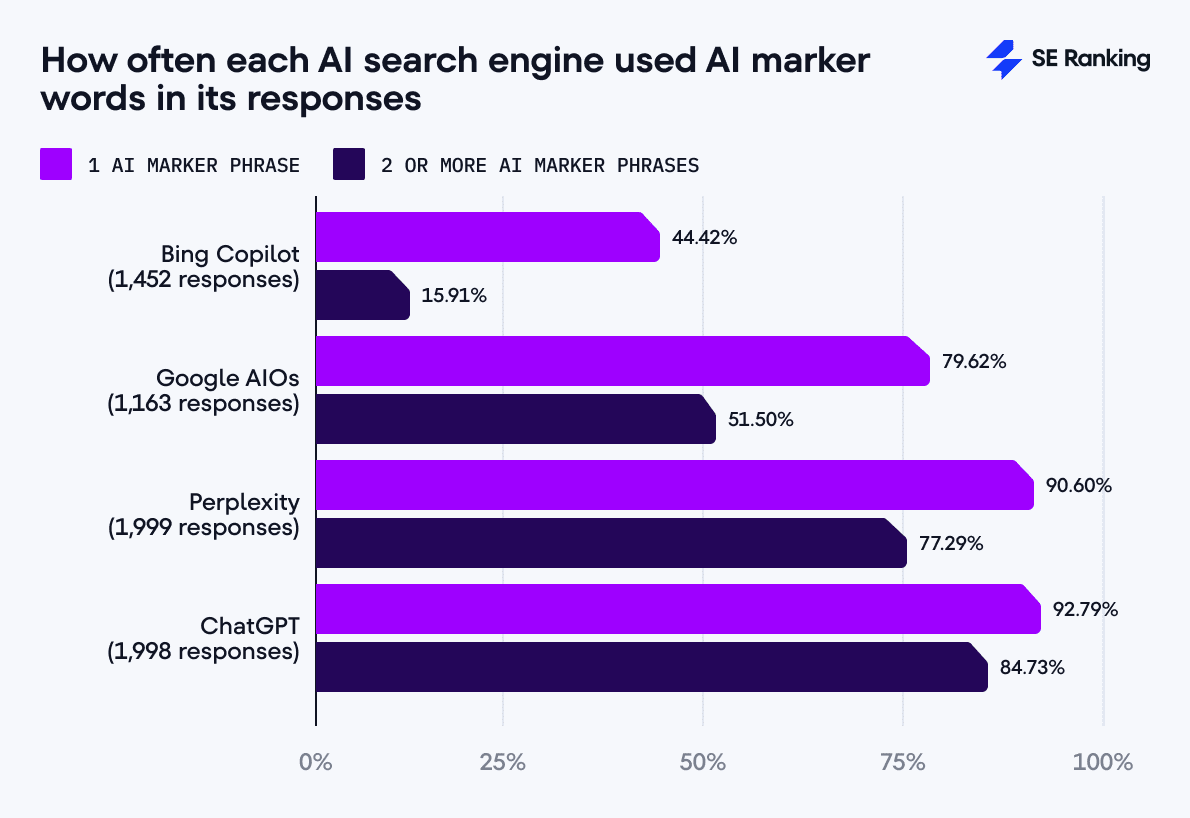

Marker phrases in AI responses

We also checked responses for words and phrases associated with AI-generated texts. We used our own list of 137 words and phrases commonly found in AI-generated text (we’ll call them “AI marker phrases”). Some examples are: in today’s digital landscape, harnessing the power of, a game-changer in the field, navigate the complexities, etc.
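A check like this can be sketched with simple substring matching. The four phrases below are the examples quoted above, not the full 137-item list, and counting distinct phrases per response (rather than total occurrences) is an assumption about how "two or more" was measured.

```python
# Example phrases from the article; the full 137-item list is not reproduced here.
MARKER_PHRASES = [
    "in today's digital landscape",
    "harnessing the power of",
    "a game-changer in the field",
    "navigate the complexities",
]

def count_marker_phrases(text, phrases=MARKER_PHRASES):
    """Number of distinct marker phrases found in a response (case-insensitive)."""
    normalized = text.lower().replace("\u2019", "'")  # treat curly apostrophes as straight
    return sum(1 for p in phrases if p in normalized)

def marker_shares(responses):
    """Share of responses with at least one, and with two or more, marker phrases."""
    counts = [count_marker_phrases(r) for r in responses]
    n = len(counts)
    if n == 0:
        return 0.0, 0.0
    return sum(c >= 1 for c in counts) / n, sum(c >= 2 for c in counts) / n

# Hypothetical responses
sample = [
    "In today's digital landscape, harnessing the power of SEO matters.",
    "Navigate the complexities of taxes.",
    "Restart the router.",
]
print(marker_shares(sample))
```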

Out of the 6,612 answers we analyzed:

- 79.19% (5,236 responses) contained at least one AI marker phrase

- 61.52% (4,068 responses) featured two or more AI marker phrases.

Our tool-by-tool analysis shows the following results:

- ChatGPT displayed the highest occurrence rate. Out of 1,998 answers, 1,854 (92.79%) contained at least one AI marker phrase, and 1,693 (84.73%) answers contained two or more AI marker phrases.

- Perplexity also shows high usage of AI marker phrases. Out of 1,999 responses, 1,811 (90.6%) featured at least one AI marker phrase, and 1,545 (77.29%) answers included two or more AI marker phrases.

- Google AIOs had a moderate occurrence rate. Out of 1,163 answers, 926 (79.62%) contained at least one AI marker phrase, and 599 (51.5%) answers featured two or more AI marker phrases.

- Bing Copilot exhibited the lowest frequency. Out of 1,452 responses, only 645 (44.42%) included at least one AI marker phrase, and 231 (15.91%) answers contained two or more AI marker phrases.

We also noticed that the tools with the highest percentage of AI marker phrases (ChatGPT and Perplexity) also have the highest semantic similarity score (0.82). This indicates a possible “linguistic convergence,” meaning that advanced AI models can have similar voices.

The data on lexical diversity, semantic similarity, and AI marker phrase usage also reveal the various approaches used by each AI search engine.

For example:

- Bing has the highest lexical diversity (0.54), the lowest use of AI marker phrases (44.42%), and the shortest answers (398 characters). This looks like a maximum information density strategy.

- ChatGPT shows moderate lexical diversity (0.51), excessive use of AI marker phrases (92.79%), and the longest answers (1,686 characters). This suggests persuasion through sheer volume and confidence.

We see two fundamentally different approaches: Bing’s minimalist approach focusing on conciseness and clarity, and ChatGPT’s maximalist approach with detailed explanations and confident, authoritative language.

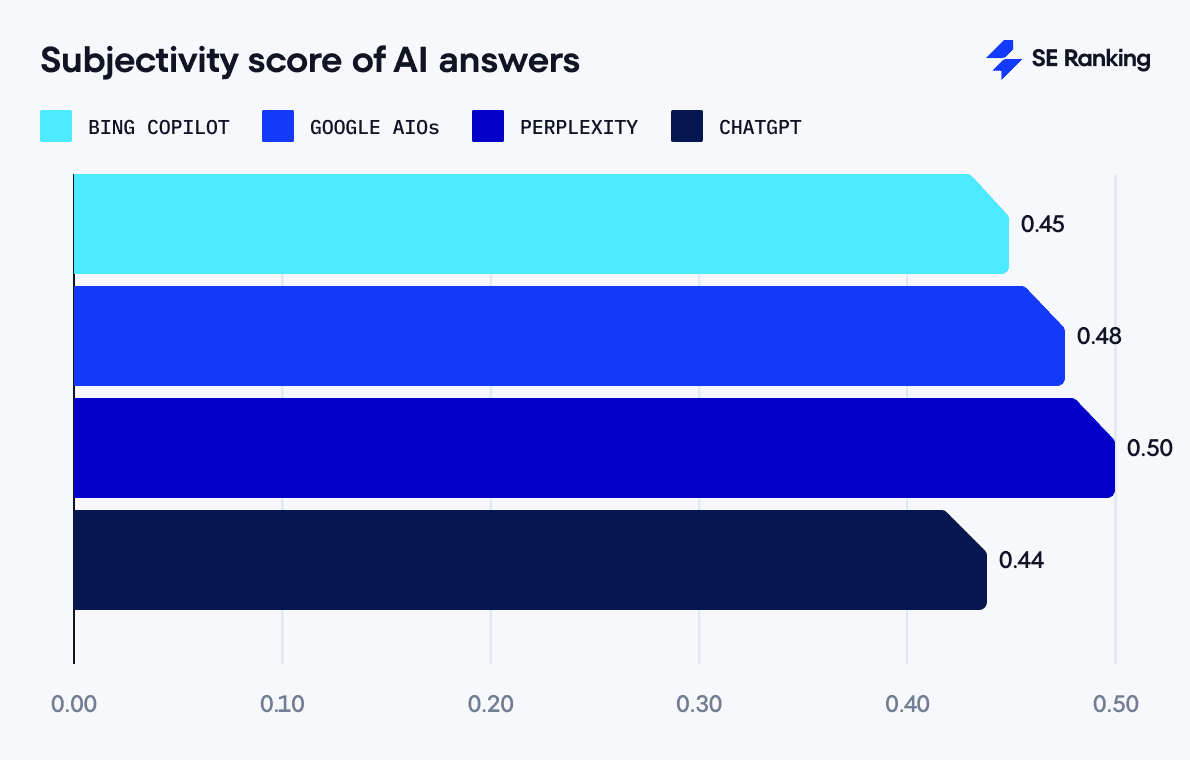

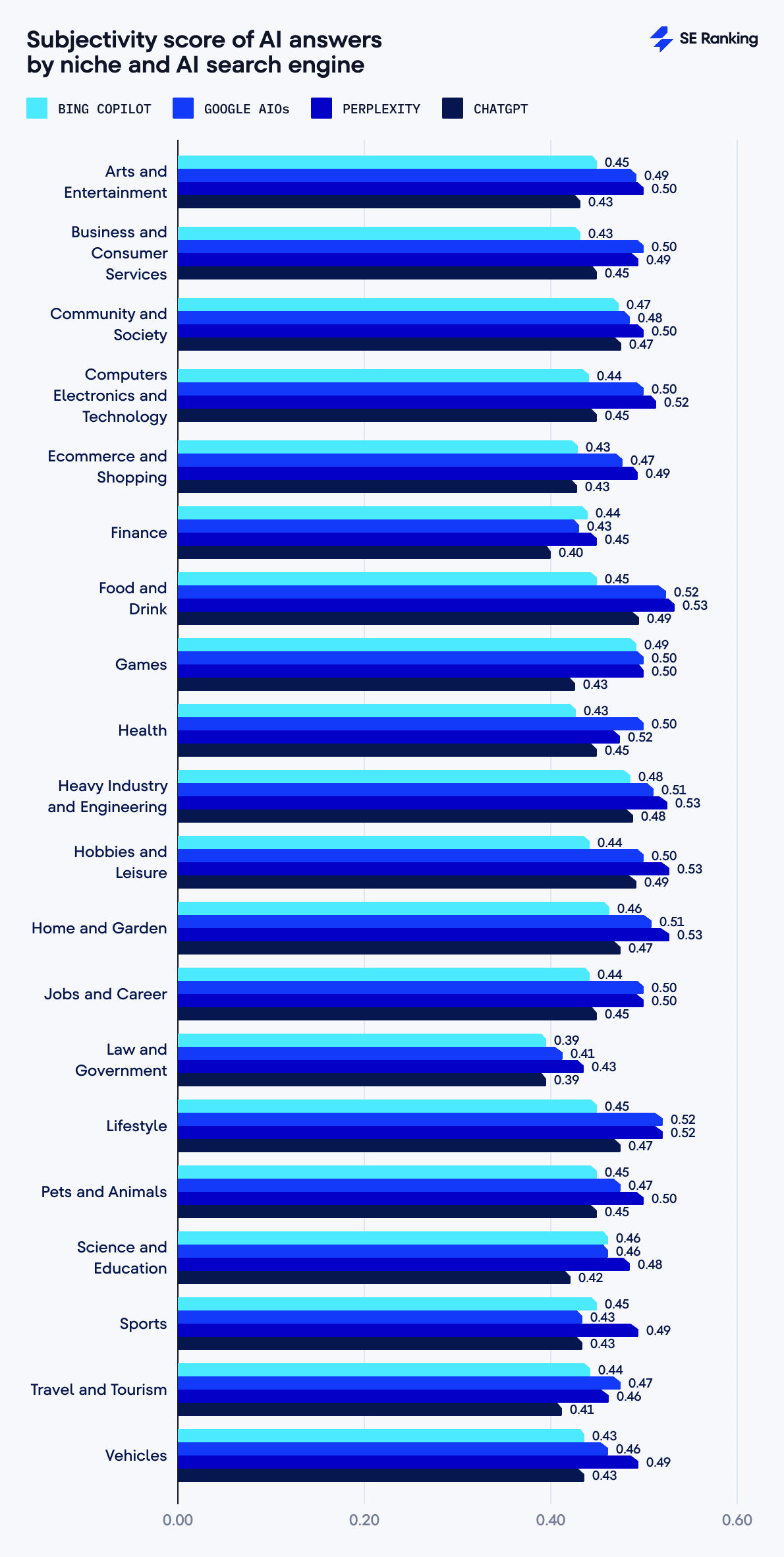

Objectivity of AI responses

We calculated the subjectivity score of AI answers using TextBlob, a Python library for text analysis. The score measures the presence of opinionated language: 1 indicates the highest subjectivity, and 0 means the tool provides completely objective answers.

The average subjectivity scores of the tools range from 0.44 to 0.50, with the following distribution:

- Perplexity (highest subjectivity score of 0.50): Incorporates opinions or interpretations.

- Google AIOs (moderate subjectivity score of 0.48): Uses a more balanced approach, mixing factual information with persuasive or evaluative language.

- Bing Copilot (moderate subjectivity score of 0.45): Occasionally uses opinion-influenced phrasing.

- ChatGPT (lowest subjectivity score of 0.44): Uses a more factual style.

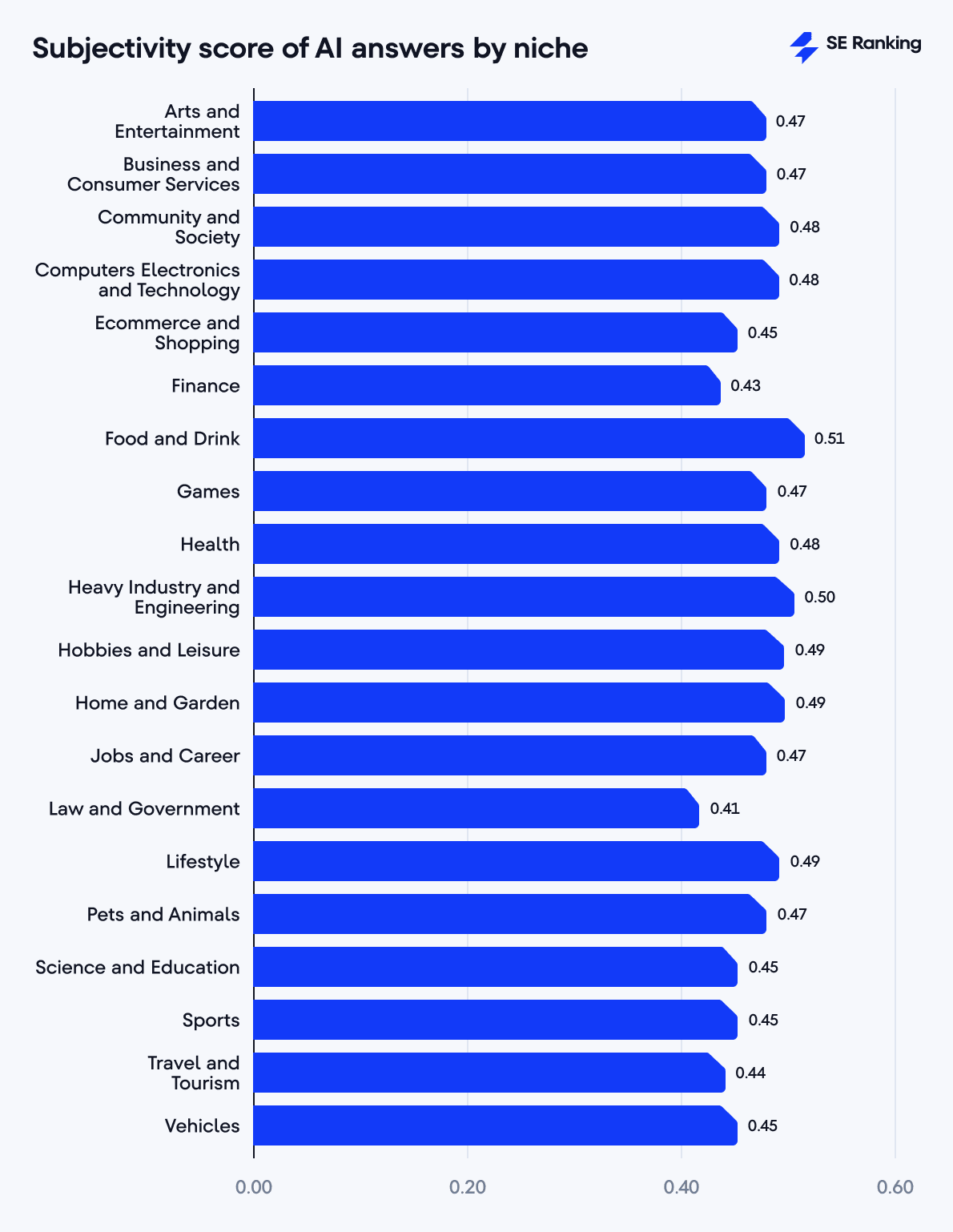

AI response objectivity by niche and tool

The three niches below have the most objective responses (lowest subjectivity scores):

- Law and Government: 0.41

- Finance: 0.43

- Travel and Tourism: 0.44

The highest level of objectivity is observed in YMYL categories like Law and Government and Finance, where accuracy and bias avoidance are critical. These niches are information-focused, factual, or procedural, leaving minimal room for subjective interpretation.

Niches with the most subjective AI responses include:

- Hobbies and Leisure: 0.49

- Heavy Industry and Engineering: 0.50

- Food and Drink: 0.51

Some of these niches may include recommendations or evaluative content, resulting in higher subjectivity. For example, Food and Drink often include taste descriptions, while Hobbies and Leisure may include recommendations, which can be subjective.

Here’s how subjectivity scores vary by niche and AI tool:

- Bing Copilot generates the most objective answers for Law and Government (0.39) and the most subjective responses for Games (0.49).

- Google AIOs produce the most objective outputs for Law and Government (0.41), and the most subjective ones for Food and Drink (0.52) and Lifestyle (0.52).

- Perplexity has the lowest objectivity in many niches. Even its most objective answers, in the Law and Government niche (0.43), are the most subjective among all tools for that niche. It is the most subjective for Food and Drink, Heavy Industry and Engineering, Home and Garden, and Hobbies and Leisure (0.53 for each niche).

- ChatGPT is the most objective for Law and Government (0.39), and the most subjective for Food and Drink (0.49) and Hobbies and Leisure (0.49).

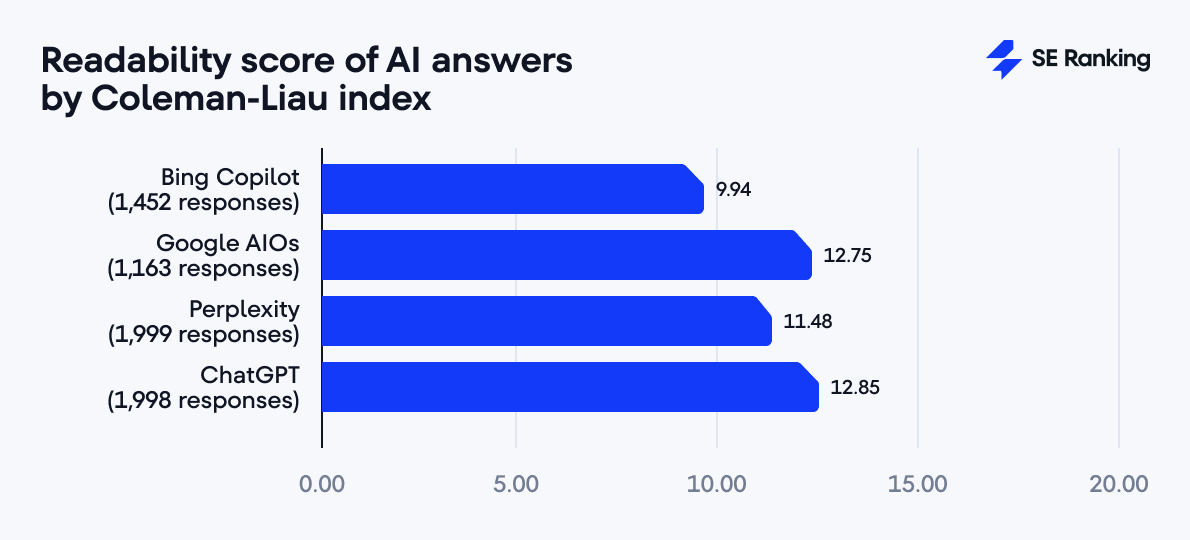

Readability of AI responses

To analyze the readability of AI responses, we used the Coleman-Liau Index, which relies on letter, word, and sentence counts rather than syllable counts. This makes it less sensitive to formatting irregularities. The index approximates the U.S. grade level required to understand the text.

Coleman-Liau Readability Index scale:

- 0-1 values correspond to 3-7 year-old readers (Basic level)

- 1-5 values correspond to 7-11 year-old readers (Easy to read)

- 5-8 values correspond to 11-14 year-old readers (Ideal for average readers)

- 8-11 values correspond to 14-17 year-old readers (Fairly difficult to read)

- 11+ values correspond to 17+ year-old readers (Too hard to read)
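For reference, the standard Coleman-Liau formula is 0.0588 × L − 0.296 × S − 15.8, where L is the average number of letters per 100 words and S is the average number of sentences per 100 words. Below is a minimal Python sketch of it. The regex-based word and sentence counting is a simplifying assumption and may not match the implementation used in the study.

```python
import re

def coleman_liau_index(text):
    """Coleman-Liau Index: 0.0588 * L - 0.296 * S - 15.8."""
    words = re.findall(r"[A-Za-z0-9']+", text)
    if not words:
        return 0.0
    letters = sum(ch.isalpha() for ch in text)
    # Count runs of ., ! or ? as sentence ends; assume at least one sentence
    sentences = len(re.findall(r"[.!?]+", text)) or 1
    letters_per_100 = letters / len(words) * 100
    sentences_per_100 = sentences / len(words) * 100
    return 0.0588 * letters_per_100 - 0.296 * sentences_per_100 - 15.8

simple = "The cat sat. The dog ran."
dense = ("Institutional diversification strategies necessitate "
         "comprehensive organizational evaluation.")
# Longer words and fewer sentence breaks push the score up
print(coleman_liau_index(simple) < coleman_liau_index(dense))
```

Very short texts can produce negative or extreme values, so the index is more meaningful when averaged over many responses.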

Our results show that Bing is the easiest to read, with a score of 9.94, suggesting a focus on a wider audience. Perplexity follows with a score of 11.48, while ChatGPT and Google AIOs are the most complex, with scores of 12.85 and 12.75, respectively. On the scale above, only Bing stays below the 11+ threshold.

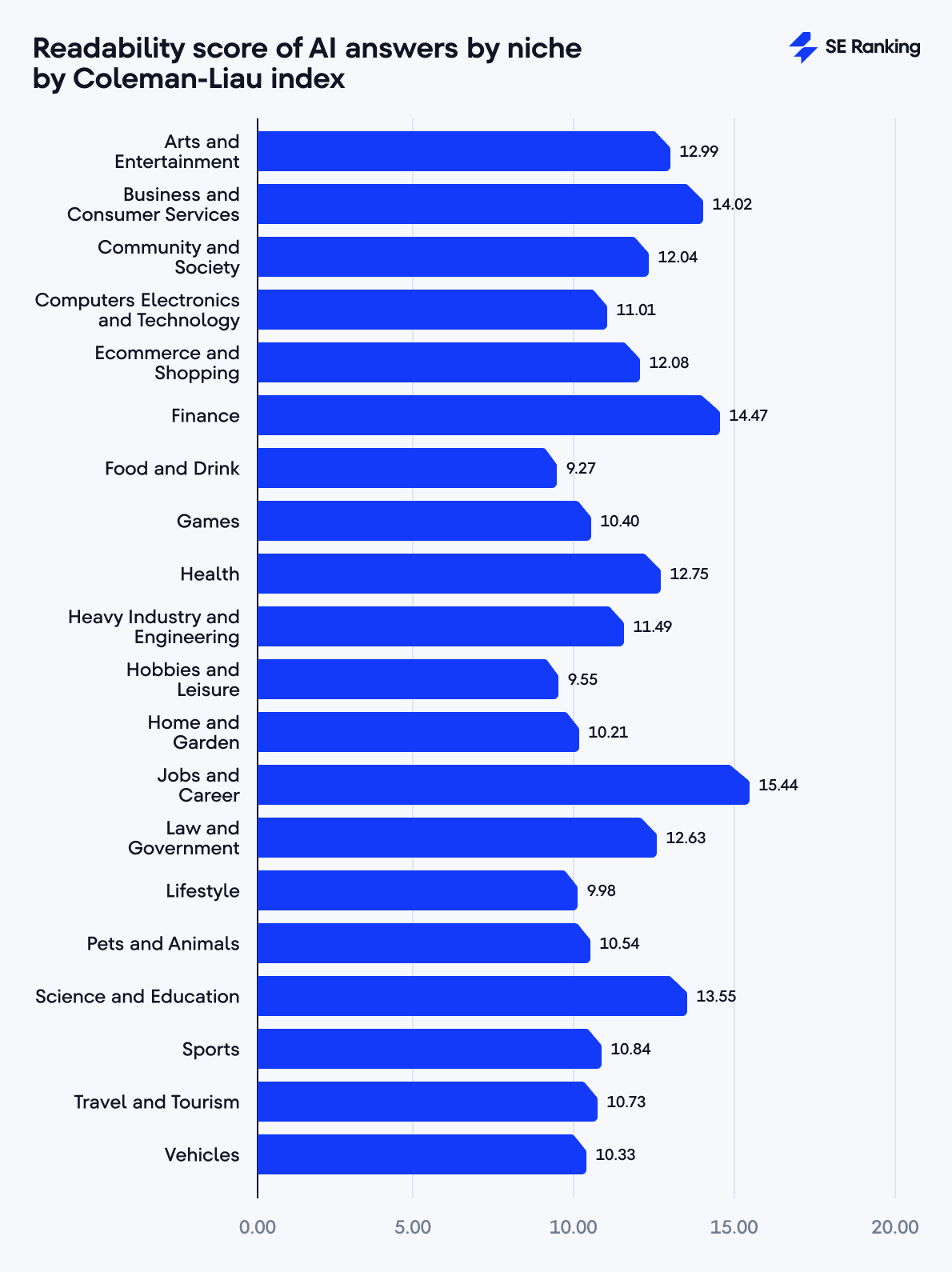

As for the distribution of scores by niche, the following industries stand out for having the highest complexity, where AI responses are the hardest to understand:

- Jobs and Career: 15.44

- Finance: 14.47

- Business and Consumer Services: 14.02

These niches typically require a higher educational background due to terminology, complex concepts, and sophisticated language.

Niches with the lowest complexity (AI responses are the easiest to understand) include:

- Lifestyle: 9.98

- Hobbies and Leisure: 9.55

- Food and Drink: 9.27

Responses in these niches are simpler and more accessible. The lower readability scores suggest answers are optimized for a broader, more general audience without specialized knowledge.

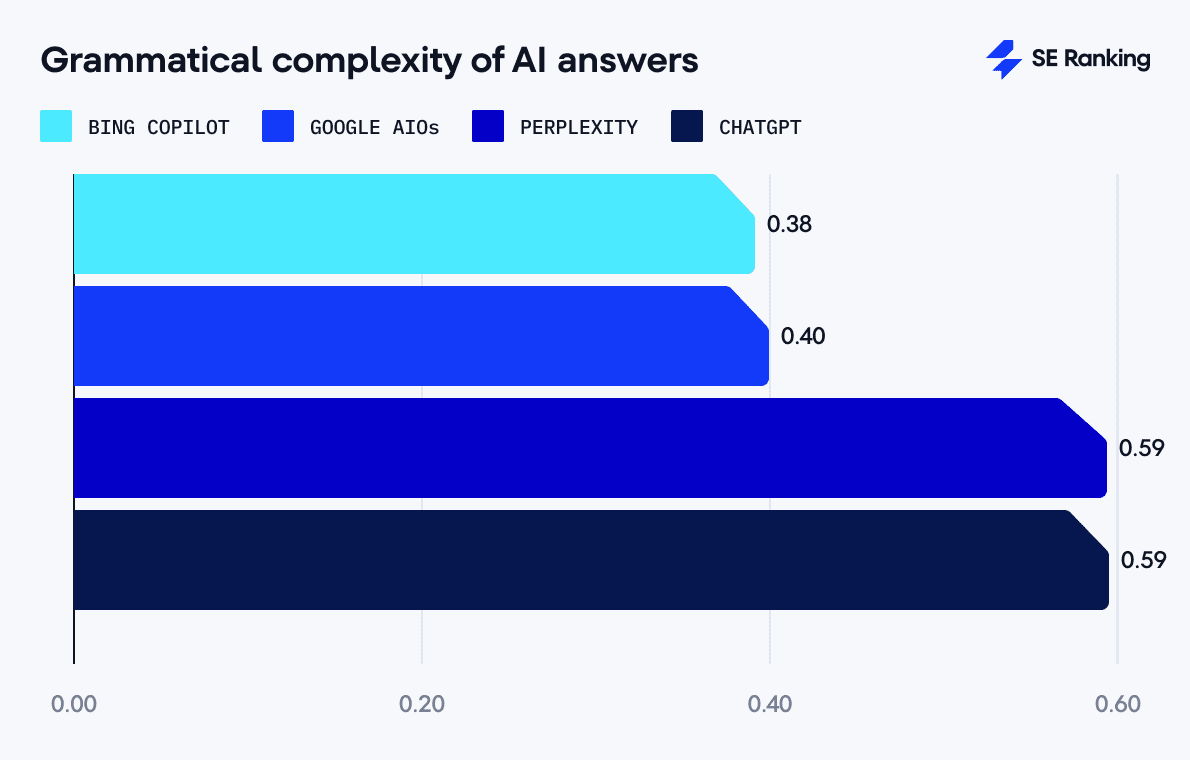

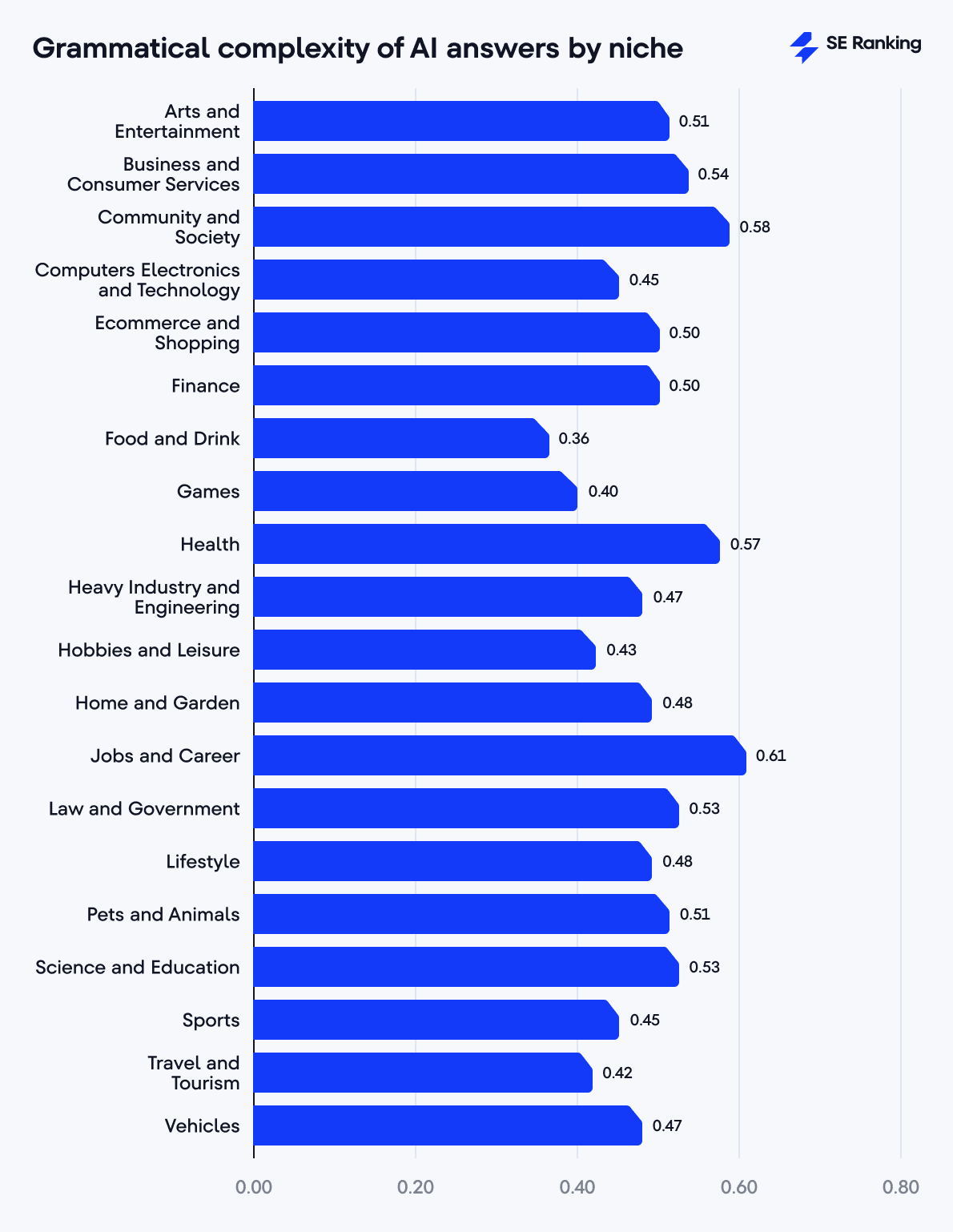

Grammatical complexity of AI responses

To analyze grammatical complexity in AI responses, we used the spaCy library. This allowed us to quantify complex grammatical structures and measure the proportion of complex sentences (sentences containing multiple clauses or subordinate structures) within each tool’s responses.

The results were different for each tool:

- Bing Copilot has the lowest grammatical complexity score (0.38). It uses straightforward sentences that reflect its concise response style.

- Google AIOs exhibited moderate grammatical complexity (0.40). This approach made their responses accessible and easy to understand.

- ChatGPT and Perplexity demonstrated the highest grammatical complexity, each with a 0.59 score. These tools often use complex sentence structures, which reflects their longer responses.

Niches with the highest grammatical complexity include:

- Jobs and Career: 0.61

- Community and Society: 0.58

- Health: 0.57

This makes sense given that these niches have complex topics and use professional language. They use multi-clause sentences or subordinate clauses to communicate detailed concepts.

The following niches had the lowest grammatical complexity:

- Food and Drink: 0.36

- Games: 0.40

- Travel and Tourism: 0.42

Answers for these industries include short and straightforward sentences.

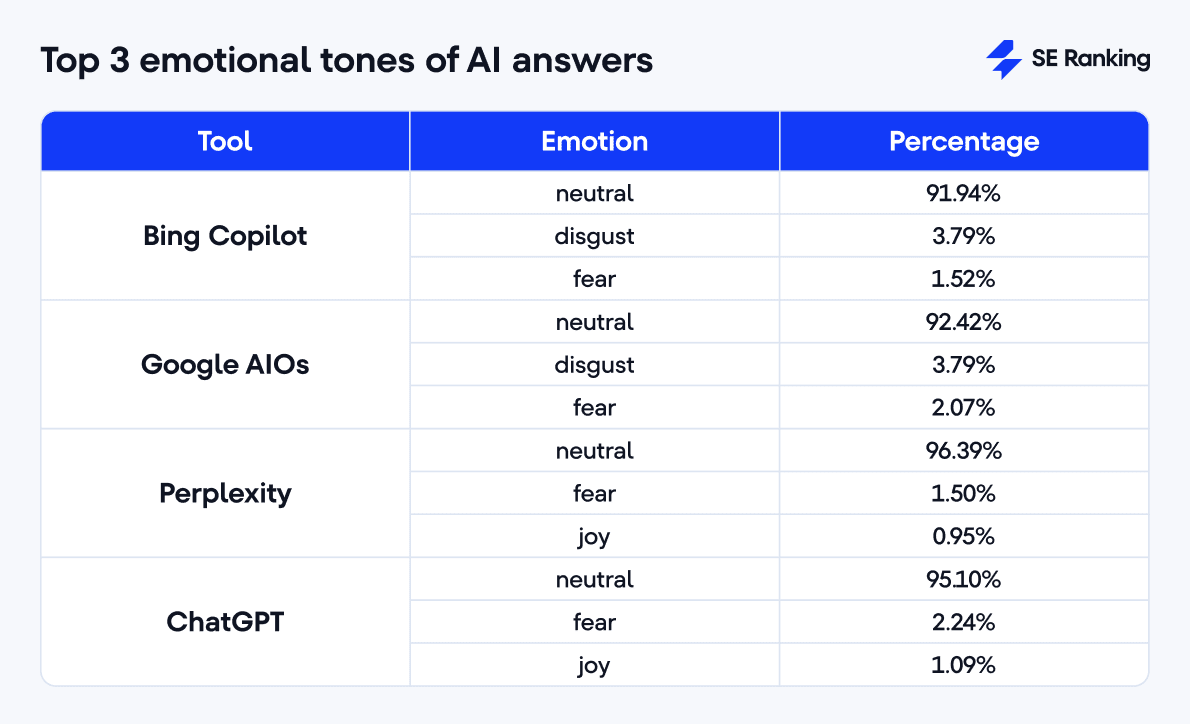

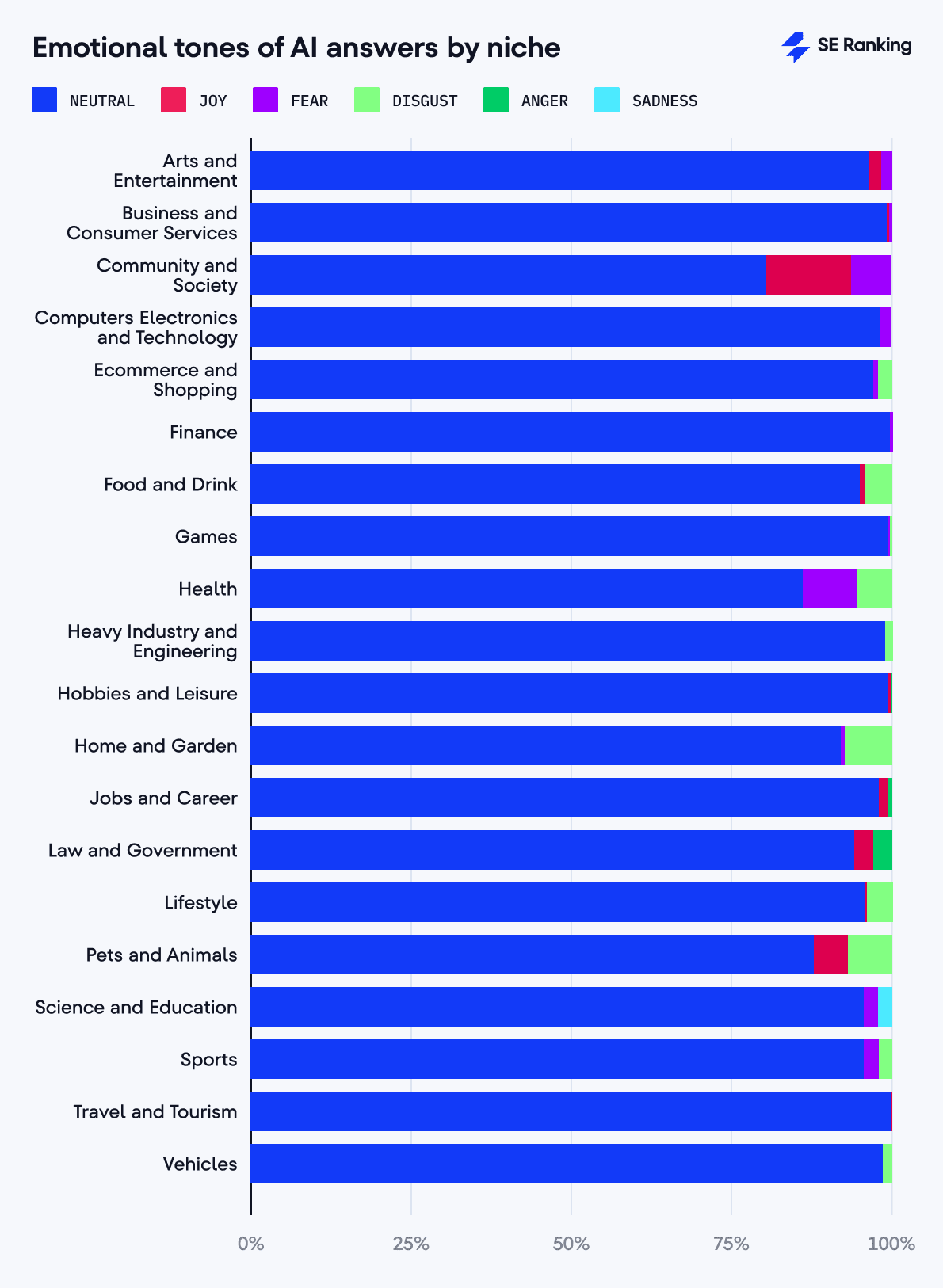

Emotional tone of AI responses

We evaluated the emotional tone of AI responses using the Transformers library along with the pre-trained Emotion English DistilRoBERTa-base model for emotion classification. The results showed that all tools have a high level of neutrality in their answers, which is expected and desirable for search engines.

- Perplexity has the highest level of neutrality (96.39%)

- Bing has the lowest level of neutrality (91.94%)

- ChatGPT is closer to Perplexity (95.10%)

- Google AIOs are closer to Bing Copilot (92.42%)

AI search engines do express emotions other than neutrality. For Perplexity and ChatGPT, the second most frequent emotion (after neutrality) is joy. Also, all four tools exhibited a small presence of negative tones like disgust or fear, with Bing Copilot and Google AI Overviews exhibiting both. This can be explained by the tools’ cautionary or warning language for risk-related and sensitive content like physical or mental health.

A deeper analysis of responses categorized as expressing joy shows that they usually contain positive language. Words such as enjoy, happy, love, wonderful, and good appear consistently throughout these responses. More than half of the responses in the joy category contained the word enjoy, often suggesting that users should find pleasure in particular actions.

Perplexity and ChatGPT use an encouraging tone in their communications. These models phrase their responses in an optimistic, friendly manner, using exclamation points and emotionally positive comments (e.g., That’s a great idea! or That could be a fun project!). They provide both facts and steps and make their responses supportive and optimistic.

In terms of niches, we observed the highest neutrality in the Finance niche (99.67%), where accuracy and objectivity are critical. The lowest neutrality and the highest joy scores were recorded in the Community and Society niche (73.84% neutrality and 11.92% joy), reflecting social topics’ emotional nature.

Perplexity and ChatGPT demonstrate the highest semantic similarity (0.82). Their responses are closely aligned in content across all niches, especially for Health or Hobbies and Leisure (0.84). These tools also share a common communication style. They have the same grammatical complexity score (0.59) and tend to give long answers. Both tend to use an encouraging tone and express “joy” as the second most frequent emotion after neutrality.

Bing Copilot differs significantly from other tools in its “maximum information density” strategy. It has the most readable text (Coleman-Liau index of 9.94) and the simplest grammatical structure (grammatical complexity score of 0.38). Although Bing has some semantic similarities with ChatGPT, its approach to providing information is different: minimalistic and focused on a general audience rather than experts.

Google AI Overviews show the lowest semantic similarity with the other tools (0.48). They generate medium-length answers (997 characters) that are among the most difficult to read (Coleman-Liau index of 12.75, just below ChatGPT’s 12.85). This suggests they target a more educated audience. AI Overviews have moderate grammatical complexity (0.40), similar to Bing. Google has the highest correlation between answer length and the number of links (0.38), indicating its commitment to supporting claims with sources.

This analysis also clearly shows that the topic of the query significantly affects the answer’s characteristics. Professional niches like Finance or Career have more complex but more objective answers, reflecting the need for accuracy and following industry standards. Everyday topics like Food or Hobbies offer simpler and more subjective answers, recognizing the role of personal preference in these areas. Social topics show the most emotion and variety of tones, reflecting the complex nature of social issues.

Brief methodology

For our analysis, we selected 2,000 how-to keywords from 20 niches, making it 100 keywords per category.

We ran our checks from February 26, 2025 to March 3, 2025 with the following parameters:

- Search Engines: ChatGPT (SearchGPT), Google AI Overviews, Bing Copilot, Perplexity (Search)

- Location: USA

- Language: English (en)

SE Ranking tools used for analysis:

Additional tools and libraries used:

- sentence_transformers library

- Cosine Similarity function from sklearn library

- yake automatic keyword extraction

- Stanza package

- Coleman-Liau Index

- spaCy library

- Transformers library

- Emotion English DistilRoBERTa-base

- TextBlob

Conclusion

AI search engines use different approaches to present content, select sources, and support their answers.

ChatGPT offers long and thoroughly referenced responses. Its approach is very similar to Perplexity's in terms of length and communication style. Bing Copilot uses a minimalist approach, providing concise and actionable responses with fewer references. Google AIOs generate medium-length answers, but are harder to read.

Since being featured in AI answers helps with online visibility, you’ll want to align your content strategies with each AI search engine’s nuances.

Stay tuned to our blog as we continue to research this topic and provide you with more insights.